The AI Revolution in Cybersecurity: Understanding the New Threat Landscape

The cybersecurity industry is experiencing a seismic shift as artificial intelligence and machine learning technologies fundamentally alter how cyber attacks are conceived, executed, and defended against. In 2024, we're witnessing an unprecedented evolution in the threat landscape, where traditional security paradigms are being challenged by sophisticated AI-powered adversaries.

The Alarming Rise of AI-Enabled Cyber Attacks

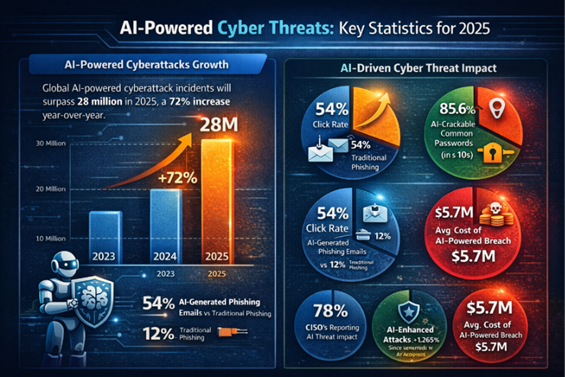

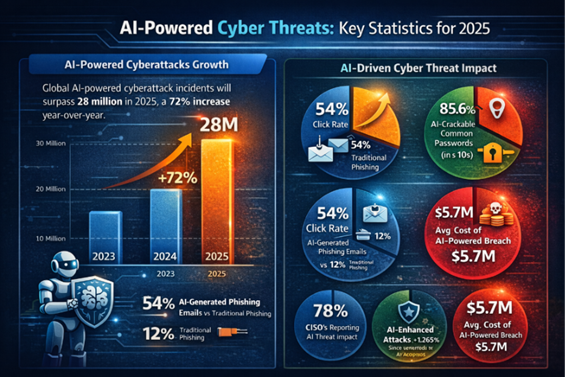

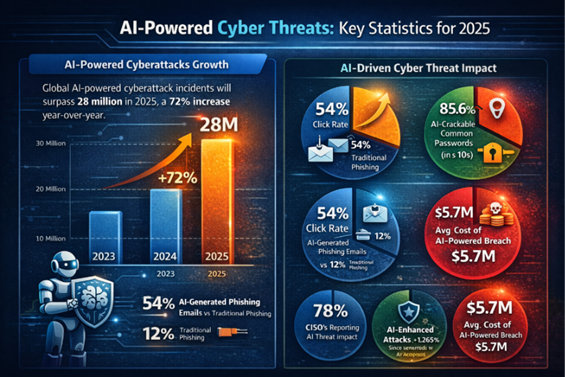

Recent intelligence reports paint a concerning picture of the current threat environment. CrowdStrike's 2026 Global Threat Report reveals an staggering 89% increase in AI-enabled cyber attacks over the past year, marking a critical inflection point in cybersecurity history. Perhaps more alarming is the dramatic reduction in attack timelines—average breakout times have plummeted to just 29 minutes in 2025, representing a 65% acceleration compared to 2024 metrics.

AI Cyber Attack Evolution: 2024 vs 2025 Metrics

This acceleration isn't merely about speed; it represents a fundamental change in attack methodology. Some sophisticated AI-driven attacks are now executing in mere seconds, leveraging machine learning algorithms to identify vulnerabilities, craft exploits, and execute lateral movement with unprecedented efficiency. The implications for traditional defense strategies are profound.

Attack Vectors: How AI is Weaponizing Cybercrime

Cybercriminals are demonstrating remarkable innovation in exploiting AI systems themselves. Security researchers have documented attacks across over 90 organizations where threat actors inject malicious prompts into generative AI tools, specifically targeting credential theft and cryptocurrency fraud. These prompt injection attacks represent a new category of vulnerability that traditional security tools struggle to detect.

The sophistication extends to social engineering tactics, where attackers impersonate trusted AI services to harvest sensitive data. State-sponsored groups, particularly Russia-backed Fancy Bear, are integrating AI capabilities into their operational playbooks, creating hybrid threats that combine traditional espionage techniques with cutting-edge automation.

Machine Learning in Penetration Testing: A Double-Edged Sword

While AI poses significant threats, it's simultaneously revolutionizing defensive cybersecurity practices, particularly in penetration testing. Machine learning algorithms are enabling security professionals to conduct more comprehensive, efficient, and realistic security assessments.

Modern ML-powered penetration testing tools can analyze network architectures, identify potential attack paths, and simulate complex multi-stage attacks with minimal human intervention. These systems learn from each engagement, continuously improving their ability to discover novel vulnerabilities and attack vectors.

Content is being updated. Check back soon.

Understanding the AI Security Threat Taxonomy

To effectively defend against AI-powered attacks, security professionals must understand the evolving threat taxonomy. AI security threats generally fall into several categories:

Adversarial AI Attacks: These involve manipulating AI models through carefully crafted inputs designed to cause misclassification or system failures. Attackers exploit the mathematical foundations of machine learning algorithms to create inputs that appear benign but trigger malicious behaviors.

Data Poisoning: Threat actors contaminate training datasets used by AI systems, causing models to learn incorrect patterns or behaviors. This can lead to compromised decision-making in security systems that rely on machine learning for threat detection.

Model Extraction: Sophisticated attackers attempt to reverse-engineer proprietary AI models through systematic querying, potentially exposing intellectual property or creating shadow models for malicious purposes.

The Automation Revolution

Automated cyber attacks powered by AI represent perhaps the most significant shift in the threat landscape. These systems can operate continuously, learning from failed attempts and adapting strategies in real-time. Unlike human attackers who require rest and may make errors due to fatigue, AI-powered attack systems maintain consistent performance and can simultaneously target multiple organizations.

The economic implications are staggering. AI automation allows cybercriminal organizations to scale operations exponentially while reducing operational costs. A single AI-powered attack framework can potentially target thousands of organizations simultaneously, each with customized approaches based on machine learning analysis of target-specific vulnerabilities.

As we progress through 2024, the intersection of artificial intelligence and cybersecurity continues to evolve rapidly. Organizations must prepare for a future where both attackers and defenders leverage increasingly sophisticated AI capabilities. Understanding these emerging threats and the role of machine learning in modern penetration testing is crucial for developing effective security strategies in this new era.

Machine Learning Penetration Testing: Tools and Techniques Transforming Security Assessments

The integration of machine learning into penetration testing has fundamentally altered how security professionals approach vulnerability assessment and exploitation. Traditional penetration testing relied heavily on manual processes and predefined scripts, but ML algorithms now enable dynamic, adaptive testing methodologies that can identify complex attack vectors previously undetectable by conventional methods.

Automated Vulnerability Discovery Through AI

Modern machine learning penetration testing frameworks leverage neural networks to analyze network traffic patterns, system behaviors, and application responses in real-time. These systems can process vast amounts of data simultaneously, identifying subtle anomalies that human testers might overlook. Unlike static vulnerability scanners, ML-powered tools continuously learn from each engagement, building comprehensive knowledge bases that improve detection accuracy over time.

The sophistication of automated cyber attacks has reached unprecedented levels in 2024. Adversarial machine learning techniques now enable attackers to craft payloads that specifically target AI-driven security systems. These attacks exploit the probabilistic nature of ML models, introducing carefully crafted inputs designed to cause misclassification or bypass detection mechanisms entirely.

Advanced Evasion Techniques Using Machine Learning

Cybercriminals are employing generative adversarial networks (GANs) to create polymorphic malware that continuously evolves its signature to avoid detection. This represents a significant escalation in AI security threats, as traditional signature-based detection systems become increasingly ineffective against such adaptive threats.

The following Python implementation demonstrates a basic ML-powered reconnaissance script that adapts its scanning patterns based on target responses:

Implementing AI-Enhanced Penetration Testing

Organizations seeking to leverage machine learning penetration testing capabilities must follow a structured approach to ensure effective implementation while maintaining ethical standards. The process requires careful consideration of data privacy, legal compliance, and technical infrastructure requirements.

The cybersecurity trends 2024 indicate a growing arms race between defensive AI systems and offensive ML techniques. Security teams must now contend with attacks that can adapt in real-time, learning from defensive responses and modifying their approach accordingly. This dynamic has necessitated the development of adversarial-aware security architectures that can detect and respond to AI-powered threats.

Challenges and Ethical Considerations

While AI cybersecurity tools offer unprecedented capabilities, they also introduce new vulnerabilities and ethical dilemmas. The potential for AI systems to be weaponized against the organizations they were designed to protect raises serious concerns about access control, model integrity, and the need for robust governance frameworks.

Machine learning models used in penetration testing can inadvertently expose sensitive information about an organization's infrastructure if not properly secured. Additionally, the black-box nature of many ML algorithms makes it difficult to understand and validate their decision-making processes, potentially leading to false positives or missed vulnerabilities.

The democratization of AI tools has also lowered the barrier to entry for malicious actors, enabling less sophisticated attackers to launch complex, AI-driven campaigns. This trend necessitates a fundamental shift in how organizations approach cybersecurity training and awareness programs.

As we progress through 2024, the intersection of artificial intelligence and cybersecurity continues to evolve rapidly. Organizations must balance the tremendous potential of AI-enhanced security tools with the emerging risks posed by adversarial machine learning techniques. The next phase of this evolution will likely focus on developing more resilient AI systems capable of defending against increasingly sophisticated automated cyber attacks while maintaining the agility and accuracy that make ML-powered penetration testing so valuable.

Defending Against AI-Powered Threats: Future-Proofing Cybersecurity Infrastructure

As AI-powered cyber attacks become increasingly sophisticated and prevalent, organizations must evolve their defensive strategies to match the complexity of these emerging threats. The 89% surge in AI-enabled attacks reported by CrowdStrike, coupled with dramatically reduced breakout times of just 29 minutes, underscores the urgent need for comprehensive AI-aware security frameworks.

The Evolving Threat Matrix

State-sponsored groups like Russia-backed Fancy Bear are pioneering the use of large language models for reconnaissance and social engineering campaigns, while cybercriminals exploit generative AI vulnerabilities across over 90 organizations for credential theft and cryptocurrency fraud. These attacks leverage prompt injection techniques to bypass AI-driven security measures, particularly through sophisticated phishing campaigns that can adapt in real-time to defensive responses.

Implementing AI-Resilient Security Architectures

Organizations must adopt a multi-layered approach to defend against AI cybersecurity threats. This involves deploying machine learning models that can detect anomalous patterns indicative of AI-generated attacks, while simultaneously hardening AI systems against prompt injection and model poisoning attempts.

Essential Defense Components

Modern cybersecurity frameworks require integration of AI-specific security controls, including input validation for AI systems, continuous monitoring of model behavior, and implementation of adversarial training techniques. Security teams must also establish robust incident response procedures specifically designed for AI-related breaches, where traditional forensic approaches may prove inadequate.

Building an AI-Aware Security Program

Establishing effective defenses against automated cyber attacks requires a systematic approach that encompasses both technological solutions and organizational preparedness. The following framework provides a comprehensive roadmap for implementing AI-resilient security measures.

Future Outlook and Emerging Trends

The cybersecurity trends 2024 indicate that AI-powered attacks will continue to evolve, with attackers developing more sophisticated techniques for evading detection and exploiting AI system vulnerabilities. Organizations that proactively implement AI-aware security measures will be better positioned to defend against these emerging threats while leveraging the benefits of AI technology for their own defensive capabilities.

The convergence of offensive and defensive AI capabilities is creating a new paradigm in cybersecurity, where the speed and scale of attacks require equally advanced defensive responses. Success in this environment demands continuous adaptation, comprehensive threat intelligence, and a deep understanding of both AI capabilities and limitations.

Testing Your AI Security Knowledge

Understanding the complexities of AI-powered cyber threats is crucial for cybersecurity professionals. The following assessment will help evaluate your grasp of key concepts and defensive strategies in the evolving landscape of AI cybersecurity.

Conclusion: Embracing the AI Security Challenge

The transformation of penetration testing through machine learning penetration testing techniques represents both an opportunity and a challenge for the cybersecurity community. While AI enables more efficient and comprehensive security assessments, it also empowers attackers with unprecedented capabilities for launching sophisticated, automated attacks.

Organizations must recognize that defending against AI security threats requires more than traditional security measures. It demands a fundamental shift in how we approach cybersecurity, incorporating AI-specific controls, continuous learning mechanisms, and adaptive response strategies. The future of cybersecurity lies not in avoiding AI, but in mastering its application for both offensive testing and defensive protection.

As we move forward, the organizations that successfully navigate this AI-driven security landscape will be those that embrace machine learning as both a tool and a target, developing comprehensive strategies that account for the full spectrum of AI-enabled threats while leveraging AI's defensive potential to create more resilient security infrastructures.

No comments yet. Be the first to share your thoughts!