The Evolution of AI-Powered Cyber Attacks: A New Era of Digital Warfare

The cybersecurity landscape is experiencing an unprecedented transformation as artificial intelligence and machine learning technologies become increasingly sophisticated weapons in the hands of cybercriminals. What was once the realm of science fiction has become a stark reality in 2024, with AI-powered cyber attacks fundamentally reshaping how we understand and respond to digital threats.

The integration of artificial intelligence into cybercriminal operations represents a paradigm shift that security professionals worldwide are scrambling to understand and counter. Unlike traditional cyber attacks that relied heavily on human expertise and manual execution, AI-driven threats operate with unprecedented speed, scale, and sophistication. These attacks can adapt in real-time, learn from defensive responses, and execute complex multi-vector assaults that would have required teams of skilled hackers just a few years ago.

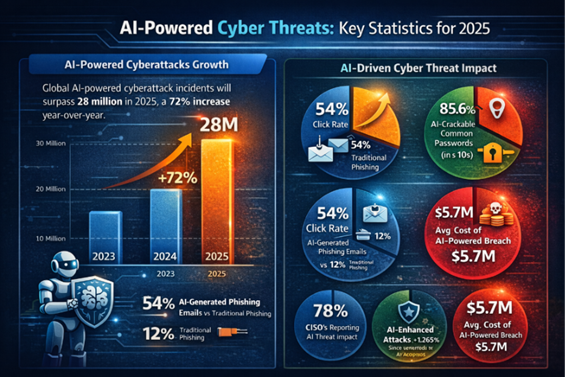

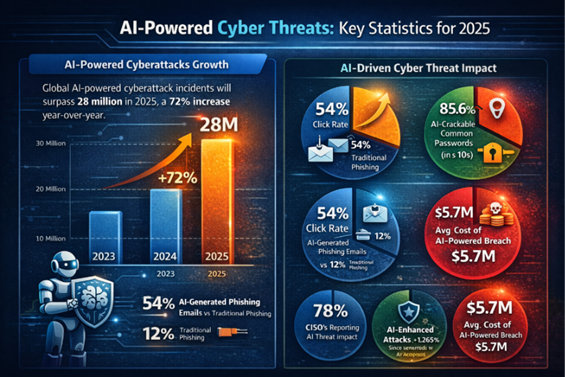

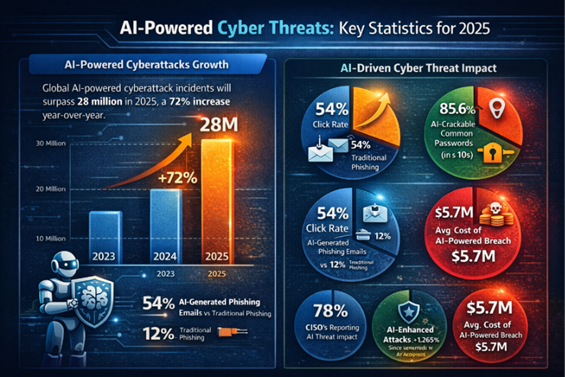

The Alarming Statistics Behind AI Cyber Threats

Recent threat intelligence points to rapid growth in AI-enabled attacks across all sectors. According to CrowdStrike's 2026 Global Threat Report, AI-enabled attacks surged 89% over the past year. Perhaps more concerning is how much faster attacks now unfold: the average breakout time (the time an intruder takes to move laterally from the initially compromised host) dropped to just 29 minutes in 2025, a 65% acceleration compared to 2024 figures.
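The "65% acceleration" figure can be read in two ways, and the two readings imply very different 2024 baselines. The short Python sketch below works through both. Only the 29-minute and 65% values come from the text above; the function names are illustrative, and neither reading is confirmed here as the one the report intends.

```python
# Two readings of "29 minutes, a 65% acceleration" (illustrative arithmetic only).
BREAKOUT_2025_MIN = 29.0  # figure quoted in the text
ACCELERATION = 0.65       # figure quoted in the text


def prior_time_if_duration_cut(current_min: float, pct: float) -> float:
    """Prior breakout time if the duration itself shrank by `pct`."""
    return current_min / (1.0 - pct)


def prior_time_if_speed_gain(current_min: float, pct: float) -> float:
    """Prior breakout time if attackers moved `pct` faster (rate-based reading)."""
    return current_min * (1.0 + pct)


if __name__ == "__main__":
    print(prior_time_if_duration_cut(BREAKOUT_2025_MIN, ACCELERATION))
    print(prior_time_if_speed_gain(BREAKOUT_2025_MIN, ACCELERATION))
```

Under the first reading the implied 2024 baseline is roughly 83 minutes; under the second it is roughly 48 minutes. Either way, defenders have well under an hour to detect and contain an intrusion.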

[Figure: Evolution of Average Cyber Attack Breakout Times (2020-2025): The AI Acceleration Effect]

A Mimecast study adds further detail on the scope of this shift: phishing accounted for 77% of all cybersecurity incidents in 2025, up from 60% in 2024. The increase is attributed to cybercriminals using generative AI to craft highly realistic phishing messages and impersonation schemes that bypass traditional detection methods.

Understanding the AI Advantage in Cybercriminal Operations

The appeal of artificial intelligence for cybercriminals lies in its ability to automate, scale, and optimize attack strategies with minimal human intervention. Machine learning algorithms can analyze vast datasets of potential targets, identify vulnerabilities across networks, and execute coordinated attacks across multiple vectors simultaneously. This technological advantage allows even relatively inexperienced threat actors to launch sophisticated campaigns that would have previously required extensive technical expertise.

AI-powered attacks demonstrate several key characteristics that distinguish them from traditional cybersecurity threats. First, they exhibit adaptive behavior, learning from defensive countermeasures and adjusting tactics in real-time. Second, they operate at machine speed, executing complex attack sequences faster than human defenders can typically respond. Third, they demonstrate unprecedented scale, capable of simultaneously targeting thousands of potential victims with personalized attack vectors.

The Technical Foundation of AI Cyber Attacks

Understanding the technical mechanisms behind AI-powered cyber attacks requires examining how machine learning algorithms are weaponized for malicious purposes. Cybercriminals are increasingly employing neural networks, natural language processing, and deep learning techniques to enhance their attack capabilities.

The sophistication of these attacks extends beyond simple automation. Advanced persistent threat groups are now utilizing AI to conduct reconnaissance, identify high-value targets, craft convincing social engineering campaigns, and even develop new malware variants that can evade traditional signature-based detection systems.

Current Threat Vectors and Attack Methodologies

AI-powered cyber attacks manifest across multiple threat vectors, each leveraging machine learning capabilities to enhance effectiveness. Phishing campaigns now utilize natural language processing to create highly convincing emails that adapt to specific targets, incorporating personal information and contextual details that make detection increasingly difficult.

Malware development has also been revolutionized through AI integration. Modern AI-enhanced malware can modify its behavior based on the target environment, employ polymorphic techniques to avoid detection, and even communicate with command and control servers using machine learning-optimized protocols that mimic legitimate network traffic.

Taken together, these developments show that traditional cybersecurity approaches alone are insufficient against AI-powered attacks. The speed, scale, and sophistication of these threats call for a fundamental rethinking of defensive strategies and for equally advanced AI-driven security solutions.

Current AI-Powered Attack Vectors and Methodologies

To understand the mechanics of AI cyber attacks, it helps to look at the specific vectors and methodologies that cybercriminals are employing in 2024. These attacks go well beyond simple automation: they incorporate machine learning algorithms that adapt, learn, and evolve in real time.

Advanced Phishing and Social Engineering

The most prevalent AI-powered threat vector remains advanced phishing, which has seen a dramatic evolution with the integration of large language models. Cybercriminals are now utilizing generative AI to create highly personalized and contextually relevant phishing campaigns that can bypass traditional detection systems. These attacks leverage natural language processing to analyze social media profiles, corporate communications, and public data to craft messages that appear genuinely authentic.

Machine learning algorithms enable attackers to perform real-time A/B testing on phishing campaigns, automatically optimizing subject lines, content, and timing based on recipient behavior patterns. This adaptive approach has resulted in significantly higher success rates, with some AI-generated phishing campaigns achieving open rates exceeding 40% compared to traditional campaigns averaging 15-20%.

[Figure: AI-Powered vs Traditional Phishing Success Rates (2022-2024)]

Automated Vulnerability Discovery and Exploitation

AI-powered vulnerability scanners represent another critical threat vector that has gained prominence in 2024. These systems utilize machine learning to identify zero-day vulnerabilities by analyzing code patterns, system behaviors, and network traffic anomalies. Unlike traditional vulnerability scanners that rely on known signatures, AI-driven tools can predict potential weaknesses before they are publicly disclosed.


Deepfake Technology in Cybercrime

The weaponization of deepfake technology has emerged as a sophisticated attack vector, particularly in business email compromise and social engineering attacks. Advanced generative adversarial networks can now create convincing audio and video content that impersonates executives, leading to successful financial fraud and data theft operations.

Recent incidents have shown attackers using AI-generated voice clones to bypass voice authentication systems and to convince employees to transfer funds or provide sensitive information. The output is now convincing enough that reliable detection often requires specialized AI-powered defense systems rather than human judgment alone.

AI-Enhanced Malware and Evasion Techniques

Modern malware is increasingly incorporating machine learning capabilities to enhance its evasion techniques and persistence mechanisms. These intelligent malware variants can analyze their execution environment, adapt their behavior to avoid detection, and even modify their code structure dynamically to evade signature-based detection systems.

Polymorphic malware powered by AI can generate thousands of unique variants while maintaining the same core functionality. This makes antivirus solutions that rely purely on signatures far less effective, because each instance looks completely different from a signature perspective.
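From the defender's side, the weakness of exact-match signatures is easy to demonstrate. The Python sketch below compares a hash-based signature check with a simple behavior-overlap check. The byte strings are harmless placeholders, and the action labels, the Jaccard similarity measure, and the 0.7 threshold are all assumptions made for illustration. It is not a description of how any particular product works.

```python
import hashlib
from typing import Set


def sha256_signature(payload: bytes) -> str:
    """Exact-match signature: any byte change yields a different digest."""
    return hashlib.sha256(payload).hexdigest()


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Overlap between two sets of observed actions, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical, harmless stand-ins: same "core" bytes, different padding.
variant_a = b"\x00\x00" + b"CORE-LOGIC"
variant_b = b"\x00\x01\x00" + b"CORE-LOGIC"

# Hypothetical behavior labels that a sandbox might record for the known sample.
KNOWN_PROFILE = {"enumerate_files", "delete_backups", "encrypt_files", "contact_remote_host"}
# The new variant behaves the same way, plus one extra evasive step.
trace_b = KNOWN_PROFILE | {"sleep_before_start"}

known_bad_hashes = {sha256_signature(variant_a)}


def signature_match(payload: bytes) -> bool:
    """True only if the payload is byte-for-byte identical to a known sample."""
    return sha256_signature(payload) in known_bad_hashes


def behavior_match(trace: Set[str], profile: Set[str], threshold: float = 0.7) -> bool:
    """True if the observed actions overlap strongly with a known profile."""
    return jaccard(trace, profile) >= threshold


if __name__ == "__main__":
    print(signature_match(variant_a), signature_match(variant_b))
    print(behavior_match(trace_b, KNOWN_PROFILE))
```

The changed padding is enough to defeat the hash lookup for the second variant, while the behavior check still matches because what the sample does has barely changed. This is the reasoning behind pairing signatures with behavioral detection.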

Adversarial Machine Learning Attacks

A particularly concerning development is the emergence of adversarial attacks specifically targeting AI and machine learning systems. These attacks involve feeding carefully crafted inputs to AI models to cause them to make incorrect decisions or classifications. In cybersecurity contexts, this could mean bypassing AI-powered security controls or causing false positives that overwhelm security teams.

Attackers are developing sophisticated techniques to poison training data, manipulate model outputs, and extract sensitive information from AI systems. These attacks represent a fundamental challenge to AI-based security solutions and require specialized defensive strategies.
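One simple defensive idea against label-flipping, a basic form of training data poisoning, is to flag training samples whose label disagrees with most of their nearest neighbors. The Python sketch below illustrates that idea under stated assumptions: the two-dimensional feature vectors, the labels, and the choice of k = 3 are invented for the example. Real pipelines would need far more careful validation.

```python
from collections import Counter
from math import dist
from typing import List, Sequence, Tuple

Sample = Tuple[Sequence[float], str]  # (feature vector, label)


def suspicious_labels(samples: List[Sample], k: int = 3) -> List[int]:
    """Return indices whose label disagrees with the majority of k nearest neighbors."""
    flagged = []
    for i, (x_i, y_i) in enumerate(samples):
        # Rank every other sample by Euclidean distance and keep the k closest.
        neighbors = sorted(
            (j for j in range(len(samples)) if j != i),
            key=lambda j: dist(x_i, samples[j][0]),
        )[:k]
        majority, _ = Counter(samples[j][1] for j in neighbors).most_common(1)[0]
        if majority != y_i:
            flagged.append(i)
    return flagged


# Hypothetical training set: two well-separated clusters and one flipped label.
SAMPLES: List[Sample] = [
    ((0.0, 0.1), "benign"),
    ((0.2, 0.0), "benign"),
    ((0.1, 0.3), "benign"),
    ((0.3, 0.2), "benign"),
    ((5.0, 5.1), "malicious"),
    ((5.2, 4.9), "malicious"),
    ((4.9, 5.0), "malicious"),
    ((5.1, 5.2), "malicious"),
    ((5.0, 4.8), "benign"),  # sits in the malicious cluster: likely poisoned
]

if __name__ == "__main__":
    print(suspicious_labels(SAMPLES, k=3))
```

Flagged samples are candidates for manual review, not automatic deletion, since legitimate edge cases can also disagree with their neighbors.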

The rapid evolution of these attack vectors demonstrates the critical need for organizations to understand and prepare for AI-powered threats. As machine learning becomes more accessible and powerful, we can expect to see even more sophisticated attack methodologies emerge, making proactive defense strategies essential for maintaining cybersecurity resilience.

Defending Against AI-Powered Threats: Strategic Countermeasures and Future Outlook

As AI cyber attacks become increasingly sophisticated and prevalent, organizations must adopt comprehensive defense strategies that leverage both traditional cybersecurity principles and cutting-edge AI-powered protection mechanisms. The rapid evolution of artificial intelligence threats demands an equally dynamic and intelligent response from cybersecurity professionals and organizations worldwide.

AI-Driven Defense Mechanisms

The most effective approach to combating AI-powered cyber attacks involves fighting fire with fire: deploying artificial intelligence and machine learning technologies for defensive purposes. Modern AI-driven security platforms can analyze large volumes of network traffic, user behavior, and system logs in real time, identifying anomalies and potential threats faster than manual review allows.

Machine learning algorithms excel at pattern recognition, enabling them to detect subtle indicators of compromise that might escape traditional rule-based security systems. These systems continuously learn from new attack patterns, adapting their detection capabilities to stay ahead of evolving threats. Advanced behavioral analytics powered by AI can establish baseline patterns for user and system behavior, immediately flagging deviations that could indicate malicious activity.
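The baseline-and-deviation idea can be sketched in a few lines of Python. This example builds a per-user baseline from historical values and flags observations that sit more than three standard deviations away. The metric (daily upload volume in MB), the user name, the sample values, and the threshold are all assumptions chosen for illustration. Production behavioral analytics use much richer models.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

Baseline = Tuple[float, float]  # (mean, standard deviation)


def build_baselines(history: Dict[str, List[float]]) -> Dict[str, Baseline]:
    """Compute a (mean, stdev) baseline for each user with at least two observations."""
    return {
        user: (mean(values), stdev(values))
        for user, values in history.items()
        if len(values) >= 2
    }


def is_deviation(
    baselines: Dict[str, Baseline], user: str, value: float, z_threshold: float = 3.0
) -> bool:
    """Flag a value that deviates strongly from the user's own baseline."""
    if user not in baselines:
        return True  # no baseline yet: surface for review rather than trust silently
    mu, sigma = baselines[user]
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > z_threshold


# Hypothetical history: daily upload volume in MB for one user.
HISTORY = {"alice": [102.0, 98.0, 110.0, 95.0, 105.0, 99.0, 101.0]}

if __name__ == "__main__":
    baselines = build_baselines(HISTORY)
    print(is_deviation(baselines, "alice", 104.0))  # an ordinary day
    print(is_deviation(baselines, "alice", 900.0))  # a sudden large upload
```

Comparing each user against their own history, rather than a global rule, is what lets this kind of check catch activity that is unusual for one account even if it would be normal for another.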

Implementation Timeline for AI Security Measures

Organizations planning to enhance their cybersecurity posture with AI-powered defenses should follow a structured implementation approach. The deployment of comprehensive AI security measures typically spans 12-18 months, depending on organizational size and complexity.

Essential Security Checklist for AI Threat Mitigation

To effectively protect against AI-powered cyber attacks, organizations must implement a multi-layered security approach that addresses both technical and human factors. This comprehensive strategy should encompass network security, endpoint protection, user education, and incident response capabilities.

Code Implementation: AI Threat Detection Algorithm

Security teams can implement basic AI-powered threat detection using machine learning libraries. The following example demonstrates a simple anomaly detection system that can identify unusual network traffic patterns indicative of AI-powered attacks:
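A minimal sketch of such a detector follows. To stay self-contained it uses only the Python standard library and a robust statistical score (the modified z-score, based on the median and the median absolute deviation) in place of a trained model. A library model such as scikit-learn's `IsolationForest` could fill the same role. The feature names, sample flows, and the 3.5 cutoff (a commonly cited value for this score) are assumptions for illustration.

```python
from statistics import median
from typing import Dict, List, Tuple

Flow = Dict[str, float]

# Hypothetical per-flow features; a real deployment would choose its own.
FEATURES = ("bytes_out", "connections_per_min", "distinct_ports")


def fit_baseline(flows: List[Flow]) -> Dict[str, Tuple[float, float]]:
    """Learn (median, median absolute deviation) for each feature from normal traffic."""
    baseline = {}
    for name in FEATURES:
        values = [flow[name] for flow in flows]
        med = median(values)
        mad = median([abs(v - med) for v in values])
        baseline[name] = (med, mad)
    return baseline


def anomaly_score(flow: Flow, baseline: Dict[str, Tuple[float, float]]) -> float:
    """Return the largest modified z-score across all features."""
    scores = []
    for name, (med, mad) in baseline.items():
        deviation = abs(flow[name] - med)
        if mad == 0:
            # No spread in the baseline: any difference at all is maximally unusual.
            scores.append(0.0 if deviation == 0 else float("inf"))
        else:
            scores.append(0.6745 * deviation / mad)
    return max(scores)


def is_anomalous(
    flow: Flow, baseline: Dict[str, Tuple[float, float]], threshold: float = 3.5
) -> bool:
    """Flag a flow whose worst feature exceeds the score threshold."""
    return anomaly_score(flow, baseline) > threshold


# Hypothetical sample of normal traffic used to fit the baseline.
NORMAL_FLOWS: List[Flow] = [
    {"bytes_out": 1200, "connections_per_min": 4, "distinct_ports": 2},
    {"bytes_out": 1500, "connections_per_min": 5, "distinct_ports": 3},
    {"bytes_out": 1100, "connections_per_min": 3, "distinct_ports": 4},
    {"bytes_out": 1300, "connections_per_min": 4, "distinct_ports": 2},
    {"bytes_out": 1400, "connections_per_min": 6, "distinct_ports": 3},
    {"bytes_out": 1250, "connections_per_min": 5, "distinct_ports": 5},
]

if __name__ == "__main__":
    baseline = fit_baseline(NORMAL_FLOWS)
    typical = {"bytes_out": 1350, "connections_per_min": 5, "distinct_ports": 3}
    burst = {"bytes_out": 250000, "connections_per_min": 120, "distinct_ports": 60}
    print(is_anomalous(typical, baseline), is_anomalous(burst, baseline))
```

The median-based score is used here because a few extreme values in the training sample distort it less than a mean and standard deviation would. Note that the detector flags unusual traffic in general; it cannot tell by itself whether an AI-powered attack is the cause.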

Market Analysis: AI Cybersecurity Investment Trends

The cybersecurity industry is witnessing unprecedented investment in AI-powered defense solutions. Market data reveals that organizations are significantly increasing their cybersecurity budgets, with particular emphasis on AI and machine learning technologies. This investment trend reflects the growing recognition that traditional security measures are insufficient against modern AI-powered threats.

Future Threat Landscape Predictions

Looking ahead, cybersecurity experts predict that AI cyber attacks will become even more sophisticated and automated. The integration of large language models with attack frameworks will enable cybercriminals to create highly personalized and convincing social engineering campaigns at scale. Additionally, the emergence of adversarial AI techniques will challenge existing machine learning-based defense systems.

The arms race between AI-powered attacks and defenses will likely intensify, with both sides continuously evolving their capabilities. Organizations that fail to adopt AI-enhanced security measures risk being left vulnerable to increasingly sophisticated threats that traditional security tools cannot effectively counter.

Regulatory and Compliance Considerations

As AI becomes more prevalent in both attack and defense scenarios, regulatory frameworks are evolving to address the unique challenges posed by artificial intelligence in cybersecurity. Organizations must stay informed about emerging regulations governing AI use in security applications and ensure their AI-powered defense systems comply with data protection and privacy requirements.

The development of industry standards for AI cybersecurity will play a crucial role in establishing best practices and ensuring interoperability between different AI security solutions. Organizations should actively participate in industry initiatives and adopt standardized approaches to AI security implementation.


The battle against AI-powered cyber attacks requires a proactive, intelligence-driven approach that combines advanced technology with skilled human expertise. Organizations that invest in comprehensive AI security strategies today will be better positioned to defend against the evolving threat landscape of tomorrow. Success in this domain demands continuous learning, adaptation, and collaboration across the cybersecurity community to stay ahead of increasingly sophisticated AI-powered threats.
