The rising threat of deepfakes

Deepfakes are synthetic media – images, videos, or audio – that have been generated or manipulated to replace one person’s likeness or voice with another’s. They’re created using powerful artificial intelligence techniques, primarily deep learning algorithms, hence the name (a blend of "deep learning" and "fake"). What started as a novelty has quickly evolved into a serious cybersecurity concern. Early deepfakes were often crude and easily detectable, but advances in AI are making them increasingly realistic, blurring the line between what’s real and what’s fabricated.

Many deepfake tools rely on generative adversarial networks (GANs), although autoencoders and, more recently, diffusion models are also widely used. In a GAN, two neural networks compete: a generator produces fake content while a discriminator tries to tell it apart from real examples. This competition pushes the generator to produce ever more convincing output. Detection has had to become correspondingly specific: researchers are now analyzing blood flow within videos to spot inconsistencies.
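To make the generator/discriminator competition concrete, here is a minimal sketch of the standard GAN training losses, written over plain lists of discriminator probabilities rather than a deep learning framework. The discriminator is scored with binary cross-entropy; the generator uses the common "non-saturating" loss, which rewards it when the discriminator assigns its fakes a high probability of being real. The function names are illustrative; in a real system these values come from network outputs and are minimized by gradient descent.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator's probabilities that real samples are real.
    d_fake: discriminator's probabilities that generated samples are real.
    The loss is low when d_real is near 1 and d_fake is near 0.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator believes the fakes are real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# A discriminator that easily spots fakes has a low loss, and the generator's loss is high.
print(round(discriminator_loss([0.9, 0.8], [0.1, 0.2]), 3))
print(round(generator_loss([0.1, 0.2]), 3))
# Once the generator improves and its fakes score 0.7, its loss drops.
print(round(generator_loss([0.7, 0.7]), 3))
```

Training alternates between lowering the first loss (a sharper discriminator) and the second (a more convincing generator), which is the "competition" in code form.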

The potential for misuse is substantial. Deepfakes can be weaponized for political disinformation campaigns, reputational damage, or financial fraud. Imagine a convincingly faked video of a CEO making damaging statements, or a synthetic audio recording used to authorize fraudulent transactions. These scenarios are not merely hypothetical; they are increasingly realistic threats. The ease with which deepfakes can be created and disseminated makes them a potent tool for malicious actors.

AI-native malware and fraud in 2026

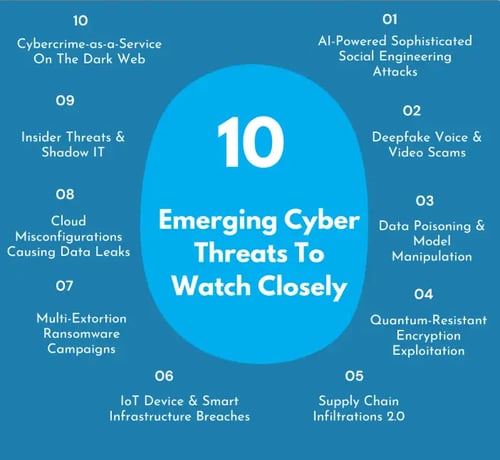

According to a recent report from VIPRE, 2026 is expected to see a surge in AI-native malware and deepfake fraud. This isn't simply about AI being used to detect threats; it's about AI being used to create them. AI-native malware is a particularly concerning development: malware that uses machine learning to adapt and evolve, which could make it far more resilient to traditional security measures.

This adaptive capability could let malware learn to bypass intrusion detection systems, modify its code to evade signature-based detection, and target specific vulnerabilities with greater precision. Deepfakes are already being integrated into fraud schemes, particularly voice cloning used alongside business email compromise (BEC) attacks: attackers convincingly impersonate executives to trick employees into transferring funds or divulging sensitive information. Synthetic video is also increasingly used in social engineering.
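Why does modifying code defeat signature-based detection? The simplest form of signature is an exact cryptographic hash of a known-malicious file, and changing even one byte produces a completely different hash. The sketch below uses harmless placeholder bytes and a hypothetical in-memory signature set. Real antivirus engines also use byte-pattern rules, fuzzy hashes, and behavioral analysis, which are harder (though not impossible) to evade this way.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of a file's bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def signature_match(sample: bytes, known_bad: set) -> bool:
    """Exact-hash signature check: flags only byte-for-byte copies of known samples."""
    return sha256_hex(sample) in known_bad


# Harmless placeholder standing in for a previously catalogued malicious file.
original = b"PLACEHOLDER-PAYLOAD-v1"
known_bad = {sha256_hex(original)}  # hypothetical signature database

# A trivial mutation (one appended byte) changes the hash entirely.
mutated = original + b"\x00"

print(signature_match(original, known_bad))  # True
print(signature_match(mutated, known_bad))   # False
```

Malware that can automatically generate endless functional variants forces defenders away from exact matching toward behavior-based detection.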

The scale of these attacks is also increasing due to automation. Attackers can use AI to automate the creation and distribution of deepfakes, targeting thousands of individuals simultaneously. VIPRE’s report also warns of automated attacks on connected devices, using deepfakes to gain unauthorized access or manipulate system behavior. This broadens the attack surface significantly and makes traditional perimeter security less effective.

Detecting fakes through blood flow

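Analyzing blood flow is one specific way to catch deepfakes. It is hard for AI to replicate how light interacts with human skin, or the faint, periodic color changes that occur as blood volume rises and falls with each heartbeat; measuring those changes from ordinary video is known as remote photoplethysmography (rPPG). These systems look for tiny imperfections in how such biological processes are simulated. If the pulse signal from one region of the face (say, the forehead) doesn't match the signal from another (the cheeks or neck), or no coherent pulse appears at all, the video may well be fake.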
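Here is a minimal sketch of the idea behind remote photoplethysmography (rPPG). It assumes face tracking has already produced, for each video frame, the average green-channel value of a skin region (the green channel typically carries the strongest pulse signal). The sketch finds the dominant frequency in a plausible heart-rate band (0.7 to 4 Hz, roughly 42 to 240 beats per minute) and checks whether two regions agree. The function names, band limits, and tolerance are illustrative assumptions; production systems add detrending, motion compensation, and more robust signal extraction.

```python
import numpy as np


def estimate_pulse_bpm(green_means, fps, low_hz=0.7, high_hz=4.0):
    """Estimate pulse rate (beats per minute) from per-frame mean green values of one skin region."""
    signal = np.asarray(green_means, dtype=float)
    signal = signal - signal.mean()  # drop the constant skin-tone offset
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fps)
    band = (freqs >= low_hz) & (freqs <= high_hz)  # plausible human heart rates only
    peak_hz = freqs[band][np.argmax(spectrum[band])]
    return peak_hz * 60.0


def pulses_consistent(region_a, region_b, fps, tolerance_bpm=6.0):
    """Two regions of the same real face should show (nearly) the same pulse rate."""
    diff = abs(estimate_pulse_bpm(region_a, fps) - estimate_pulse_bpm(region_b, fps))
    return diff <= tolerance_bpm


# Synthetic 10-second traces at 30 fps: a 1.2 Hz (72 bpm) pulse on forehead and cheek,
# and a "fake" cheek whose color flickers at an unrelated 2.0 Hz.
fps = 30
t = np.arange(300) / fps
forehead = 120 + 0.5 * np.sin(2 * np.pi * 1.2 * t)
cheek = 110 + 0.4 * np.sin(2 * np.pi * 1.2 * t + 0.3)
fake_cheek = 110 + 0.4 * np.sin(2 * np.pi * 2.0 * t)

print(round(estimate_pulse_bpm(forehead, fps)))      # 72
print(pulses_consistent(forehead, cheek, fps))       # True
print(pulses_consistent(forehead, fake_cheek, fps))  # False
```

Real footage is far noisier than these clean sine waves, which is why compression and low resolution hurt this approach.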

However, this method isn’t without its limitations. It can be computationally expensive, requiring significant processing power to analyze video frames in real time. It also relies on high-quality footage; lower-resolution or heavily compressed video may obscure the subtle cues the algorithm needs. Furthermore, AI developers are actively working to improve the realism of skin rendering, and it’s likely that future deepfakes will mimic blood flow more accurately.

Whether blood flow analysis is a viable solution for real-time detection remains to be seen. The challenges to widespread implementation are substantial. It's more likely to be useful as one component of a multi-layered detection system, rather than a standalone solution. It represents an interesting direction for research, but isn’t a silver bullet.
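One way to treat blood flow analysis as a single component of a layered system is late fusion. Each detector reports a probability that the video is fake, detectors that cannot run on a given clip (for example, blood flow on heavily compressed footage) abstain, and the remaining scores are combined with a weighted average. This is a minimal sketch; the detector names, weights, and 0.5 threshold are hypothetical, and real systems usually learn the combination from labeled data.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class DetectorResult:
    name: str
    fake_probability: Optional[float]  # None means the detector abstained on this clip
    weight: float                      # hypothetical trust weight for this detector


def fuse(results: List[DetectorResult], threshold: float = 0.5) -> Tuple[Optional[float], str]:
    """Weighted average of the detectors that produced a score."""
    usable = [r for r in results if r.fake_probability is not None]
    if not usable:
        return None, "inconclusive"
    total_weight = sum(r.weight for r in usable)
    score = sum(r.weight * r.fake_probability for r in usable) / total_weight
    return score, ("likely fake" if score >= threshold else "likely real")


results = [
    DetectorResult("blood_flow", None, 2.0),  # abstains: video too compressed
    DetectorResult("artifacts", 0.8, 1.0),
    DetectorResult("lip_sync", 0.4, 1.0),
]
score, verdict = fuse(results)
print(f"{score:.2f} {verdict}")  # 0.60 likely fake
```

Letting a detector abstain, rather than forcing a score out of poor input, keeps one weak signal from dragging down the whole system.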

Current detection tech

Several deepfake detection technologies are currently in use, each with its own strengths and weaknesses. Facial landmark analysis examines the consistency of facial features: are the eyes and mouth moving naturally? Blink rate analysis looks for anomalies in blinking patterns, since AI-generated faces, early ones in particular, often blink too rarely or irregularly. Head pose analysis compares the head orientation estimated from the central face region with the orientation estimated from the whole head; when a synthesized face is spliced onto someone else's head, the two estimates often disagree.
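As an illustration of blink rate analysis, the sketch below uses the eye aspect ratio (EAR), a widely used measure computed from six eye landmarks that drops sharply when the eye closes. It assumes landmark extraction from video frames happens upstream. Blinks are counted as runs of consecutive low-EAR frames and converted to blinks per minute, which can then be compared with typical human rates. The 0.2 threshold and two-frame minimum are common starting points, not universal constants.

```python
import math


def eye_aspect_ratio(p):
    """EAR from six (x, y) eye landmarks p1..p6 (indices 0..5 here):
    p1/p4 are the eye corners, p2/p3 lie on the upper lid, p6/p5 on the lower lid."""
    vertical = math.dist(p[1], p[5]) + math.dist(p[2], p[4])
    horizontal = math.dist(p[0], p[3])
    return vertical / (2.0 * horizontal)


def count_blinks(ear_series, closed_threshold=0.2, min_frames=2):
    """Count runs of at least `min_frames` consecutive frames with EAR below the threshold."""
    blinks, run = 0, 0
    for ear in ear_series:
        if ear < closed_threshold:
            run += 1
        else:
            if run >= min_frames:
                blinks += 1
            run = 0
    if run >= min_frames:  # a blink still in progress at the end of the clip
        blinks += 1
    return blinks


def blinks_per_minute(ear_series, fps):
    minutes = len(ear_series) / fps / 60.0
    return count_blinks(ear_series) / minutes


# One minute of synthetic EAR values at 30 fps: eyes open (0.3) with three
# 3-frame blinks and one single-frame dip that should be ignored as noise.
ears = [0.3] * 1800
for start in (100, 700, 1300):
    for i in range(start, start + 3):
        ears[i] = 0.1
ears[1500] = 0.1
print(blinks_per_minute(ears, fps=30))  # 3.0
```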

Artifact detection focuses on identifying visual artifacts introduced by the deepfake generation process, such as blurring, distortions, or color inconsistencies. These methods often rely on machine learning models trained to recognize these patterns. However, these techniques are becoming less effective as deepfake technology improves. Sophisticated deepfakes can now mimic natural facial movements and avoid obvious artifacts.
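Frequency-domain artifact detection builds on the observation that the upsampling layers in many generators can leave periodic, grid-like patterns that show up as excess high-frequency energy in an image's spectrum. The sketch below computes one such hand-crafted feature for a grayscale image: the share of spectral energy above a cutoff frequency. The cutoff value is an assumption, and in practice features like this are fed to a trained classifier rather than thresholded directly.

```python
import numpy as np


def high_frequency_energy_ratio(gray, cutoff=0.25):
    """Fraction of an image's spectral energy above `cutoff` (in cycles per pixel, max 0.5 per axis)."""
    img = np.asarray(gray, dtype=float)
    img = img - img.mean()  # ignore overall brightness (the DC component)
    power = np.abs(np.fft.fftshift(np.fft.fft2(img))) ** 2
    fy = np.fft.fftshift(np.fft.fftfreq(img.shape[0]))
    fx = np.fft.fftshift(np.fft.fftfreq(img.shape[1]))
    radius = np.sqrt(fy[:, None] ** 2 + fx[None, :] ** 2)  # distance from DC in frequency space
    total = power.sum()
    return float(power[radius > cutoff].sum() / total) if total > 0 else 0.0


# A smooth vertical gradient versus the same gradient with a faint checkerboard,
# the kind of grid pattern transposed-convolution upsampling can introduce.
smooth = np.outer(np.linspace(0.0, 1.0, 64), np.ones(64))
checker = (np.indices((64, 64)).sum(axis=0) % 2) * 2.0 - 1.0
with_artifact = smooth + 0.2 * checker

print(high_frequency_energy_ratio(smooth) < high_frequency_energy_ratio(with_artifact))  # True
```

Higher-quality generators suppress exactly these patterns, which is why this family of features is weakening over time.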

The majority of these methods are reactive, meaning they rely on identifying characteristics after the deepfake has been created. Proactive approaches, such as developing robust authentication systems, are crucial to prevent deepfakes from being used in the first place. A common failure point for these current technologies is their susceptibility to adversarial attacks – subtle modifications to the deepfake that can fool the detection algorithm.
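Adversarial attacks exploit the fact that a detector's output changes predictably with its input. The sketch below applies the fast gradient sign method (FGSM), a well-known technique from the adversarial machine learning literature, to a toy linear detector over three made-up features. For a linear model, the gradient of the score with respect to the input is simply the weight vector, so nudging each feature by a small step against the sign of its weight lowers the "fake" score as much as possible for that step size. The weights and features are hypothetical; attacks on real deep-learning detectors compute the gradient by backpropagation but follow the same idea.

```python
import numpy as np


def fake_probability(x, w, b):
    """Toy linear detector: logistic regression over a feature vector."""
    return 1.0 / (1.0 + np.exp(-(x @ w + b)))


def fgsm_evade(x, w, eps):
    """Move each feature by at most eps in the direction that lowers the fake score.
    For a linear logit w.x + b, the gradient with respect to x is simply w."""
    return x - eps * np.sign(w)


w = np.array([2.0, -1.0, 0.5])  # hypothetical learned weights
b = 0.0
x = np.array([0.3, -0.2, 0.4])  # hypothetical features of a deepfake clip

x_adv = fgsm_evade(x, w, eps=0.5)
print(fake_probability(x, w, b) > 0.5)      # True: flagged as fake
print(fake_probability(x_adv, w, b) > 0.5)  # False: evades the detector
print(np.max(np.abs(x_adv - x)))            # largest per-feature change (eps, up to rounding)
```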

Deepfake Detection Method Comparison (2026)

| Method | Strengths | Weaknesses | Computational Cost | Vulnerability to Countermeasures |
|---|---|---|---|---|
| Physiological Signal Analysis (e.g., Blood Flow) | Detects inconsistencies in biological signals not easily replicated by AI. | Requires high-quality video; performance impacted by lighting and camera angles. | Moderate to High | Susceptible to countermeasures that simulate physiological signals. |
| Behavioral Biometrics | Focuses on subtle, unique human behaviors (blinking, head movements). | Can be fooled by sophisticated AI mimicking human behavior; requires extensive baseline data. | Low to Moderate | Moderate: AI can learn and replicate behavioral patterns. |
| Artifact Detection (Frequency Domain) | Identifies inconsistencies in frequency patterns introduced during the deepfake creation process. | Can be computationally intensive; may struggle with high-quality deepfakes. | Moderate | High: deepfake generation techniques are improving at removing artifacts. |
| Deep Learning-Based Classifiers | Can learn complex patterns directly from training data. | Requires large, diverse datasets for training; often generalizes poorly to generation methods absent from the training data; prone to adversarial attacks. | High | High: vulnerable to adversarial examples specifically crafted to evade detection. |
| Blockchain-Based Verification | Provides a tamper-evident record of content origin and authenticity. | Requires widespread adoption and integration; doesn't detect deepfakes directly, but verifies the source. | Low to Moderate | Low: relies on the integrity of the initial content registration. |
| Lip Sync Analysis | Examines the synchronization between audio and visual lip movements. | Easily defeated by deepfakes with accurate lip synchronization; less effective with varied camera angles. | Low | High: lip-sync technology is rapidly improving. |

Qualitative comparison. Confirm current product details in the official documentation before making implementation choices.
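Lip sync analysis can be illustrated with a simple cross-correlation. If per-frame mouth opening (from face landmarks) and audio loudness (resampled to the video frame rate) rise and fall together, possibly with a small delay, the clip is more plausibly genuine. This minimal sketch assumes both series have already been extracted and are the same length; the five-frame lag window is an arbitrary choice. Deepfakes with accurate lip synchronization will pass a check like this.

```python
import numpy as np


def best_lag_correlation(mouth_opening, audio_energy, max_lag=5):
    """Return (correlation, lag) for the best alignment of the two per-frame series.
    A positive lag means the mouth movement trails the audio by that many frames."""
    m = np.asarray(mouth_opening, dtype=float)
    a = np.asarray(audio_energy, dtype=float)
    best_r, best_lag = -1.0, 0
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            x, y = m[lag:], a[:len(a) - lag]
        else:
            x, y = m[:lag], a[-lag:]
        r = float(np.corrcoef(x, y)[0, 1])
        if r > best_r:
            best_r, best_lag = r, lag
    return best_r, best_lag


rng = np.random.default_rng(0)
audio = rng.random(200)
synced_mouth = np.roll(audio, 2)   # mouth follows the audio two frames later
unrelated_mouth = rng.random(200)  # mouth movement unrelated to the audio

print(best_lag_correlation(synced_mouth, audio))     # correlation close to 1.0 at lag 2
print(best_lag_correlation(unrelated_mouth, audio))  # weak correlation
```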

Using behavioral biometrics

Behavioral biometrics offers a promising avenue for deepfake detection. It involves analyzing subtle cues in a person’s behavior that are difficult to replicate artificially: micro-expressions (fleeting facial expressions that can reveal underlying emotions), speech patterns such as intonation and rhythm, and body language such as posture and gestures.

The idea is that even a convincingly realistic deepfake may struggle to accurately reproduce these nuanced behavioral characteristics. Analyzing these cues can help distinguish between a real person and a synthetic representation. However, collecting and analyzing behavioral biometric data presents significant challenges. It requires sophisticated sensors and algorithms, and the data can be sensitive and privacy-invasive.

Furthermore, individual behavior varies significantly, making it difficult to establish baseline patterns. Despite these challenges, behavioral biometrics holds considerable potential, particularly when combined with other detection methods. It's an area ripe for further research and development, and could become a vital component of future deepfake defense strategies.
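The baseline problem can be made concrete with a simple per-person anomaly score. Store past measurements of a few behavioral features for each individual, then express a new observation in standard deviations from that person's own average. The feature names and data below are hypothetical, and a real system would need far richer features and careful privacy safeguards. The point is only that comparing against an individual's baseline, rather than a population average, accounts for the natural variation between people.

```python
from statistics import mean, stdev


def behavioral_anomaly_score(baseline, observed):
    """Average absolute z-score of observed features against one person's baseline.

    baseline: feature name -> list of past measurements for this person.
    observed: feature name -> value measured in the clip under review.
    Returns None if no feature can be compared.
    """
    z_scores = []
    for feature, history in baseline.items():
        if feature not in observed or len(history) < 2:
            continue
        spread = stdev(history)
        if spread == 0:
            continue  # no variation recorded, so a z-score is undefined
        z_scores.append(abs(observed[feature] - mean(history)) / spread)
    return mean(z_scores) if z_scores else None


# Hypothetical baseline for one executive, built from earlier verified recordings.
baseline = {
    "speech_rate_wpm": [150, 160, 155, 145, 150],
    "mean_pause_ms": [400, 420, 380, 410, 390],
}
genuine = {"speech_rate_wpm": 154, "mean_pause_ms": 405}
suspicious = {"speech_rate_wpm": 190, "mean_pause_ms": 250}

print(round(behavioral_anomaly_score(baseline, genuine), 2))     # small: within normal variation
print(round(behavioral_anomaly_score(baseline, suspicious), 2))  # large: far outside the baseline
```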

Authentication and verification

Shifting the focus from detection to prevention is paramount. Multi-factor authentication (MFA) is a fundamental security measure that adds an extra layer of protection beyond a simple password. By requiring users to verify their identity through multiple channels – such as a one-time code sent to their phone – MFA can significantly reduce the risk of unauthorized access, even if a deepfake is used to spoof a user’s identity.
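Codes sent by SMS are one option; another common second factor is a time-based one-time password (TOTP, RFC 6238) from an authenticator app. Both sides share a secret, and each 30-second time step yields a fresh code, so a deepfaked voice or face alone is not enough to log in without the device holding the secret. A minimal standard-library sketch follows. The function names and the one-step clock-drift window are illustrative choices, and a production system should use a vetted library plus rate limiting.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, for_time=None, step=30, digits=6):
    """RFC 6238 TOTP using HMAC-SHA1 and RFC 4226 dynamic truncation."""
    if for_time is None:
        for_time = time.time()
    counter = int(for_time // step)
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, for_time=None, step=30, digits=6, window=1):
    """Accept codes from the current step or up to `window` steps either side (clock drift)."""
    now = time.time() if for_time is None else for_time
    return any(
        hmac.compare_digest(totp(secret, now + k * step, step, digits), submitted)
        for k in range(-window, window + 1)
    )


# RFC 6238 test secret (ASCII "12345678901234567890"); at T = 59 s the
# 8-digit SHA-1 test vector is 94287082.
secret = b"12345678901234567890"
print(totp(secret, for_time=59, digits=8))     # 94287082
print(verify(secret, totp(secret), window=1))  # True
```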

Biometric tools like facial recognition are common, but they aren't foolproof: attackers can present synthetic faces or voices to try to bypass these scanners. Watermarking and digital signatures take a complementary approach. Rather than judging whether a face or voice looks genuine, they provide a verifiable record of where content came from.

Watermarks embed hidden information within the content itself, while digital signatures bind a cryptographic proof of origin to it; with signatures in particular, recipients can verify whether the content has been altered since it was signed. The main limitation of these approaches is the need for widespread adoption and standardization. Without a consistent framework for watermarking and digital signatures, it’s difficult to ensure that content can be reliably verified. New authentication methods, like decentralized identity solutions, are also being explored.
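To show what verifying origin means in practice, here is a minimal digital-signature sketch: a publisher signs the exact bytes of a video, and anyone holding the publisher's public key can check that the bytes are unchanged. It uses a Lamport one-time signature only because that scheme needs nothing beyond Python's standard library; real provenance systems use schemes such as Ed25519 or ECDSA through vetted libraries. A Lamport key pair must never sign more than one message, and the function names here are illustrative.

```python
import hashlib
import secrets


def _digest_bits(content: bytes):
    """The 256 bits of the content's SHA-256 digest, most significant bit first."""
    digest = hashlib.sha256(content).digest()
    return [(byte >> (7 - j)) & 1 for byte in digest for j in range(8)]


def keygen():
    """Private key: 256 pairs of random secrets. Public key: the SHA-256 of each secret."""
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(hashlib.sha256(a).digest(), hashlib.sha256(b).digest()) for a, b in private]
    return private, public


def sign(private, content: bytes):
    """Reveal one secret from each pair, chosen by the corresponding digest bit."""
    return [private[i][bit] for i, bit in enumerate(_digest_bits(content))]


def verify(public, content: bytes, signature) -> bool:
    """Each revealed secret must hash to the public value selected by the same bit."""
    bits = _digest_bits(content)
    if len(signature) != len(bits):
        return False
    return all(
        hashlib.sha256(secret).digest() == public[i][bit]
        for i, (secret, bit) in enumerate(zip(signature, bits))
    )


private_key, public_key = keygen()
video = b"placeholder bytes standing in for a published video file"
signature = sign(private_key, video)

print(verify(public_key, video, signature))               # True: content unchanged
print(verify(public_key, video + b"edited", signature))   # False: content was altered
```

The signature proves only that the bytes match what the key holder signed. Tying the public key to a real-world publisher is the adoption and standardization problem described above.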
