How AI changes the attack

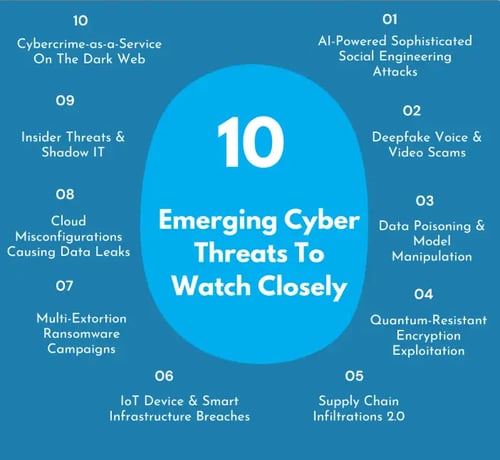

Cyberattacks are no longer simply about speed or volume; artificial intelligence is fundamentally changing how attacks are conceived and executed. We're moving beyond automated brute-force methods to attacks that demonstrate genuine learning and adaptation. This includes the use of deepfakes for incredibly convincing social engineering, automated vulnerability discovery that surpasses human capabilities, and malware that can evolve to evade defenses. It’s a shift from reacting to threats to anticipating them, and frankly, attackers have a head start.

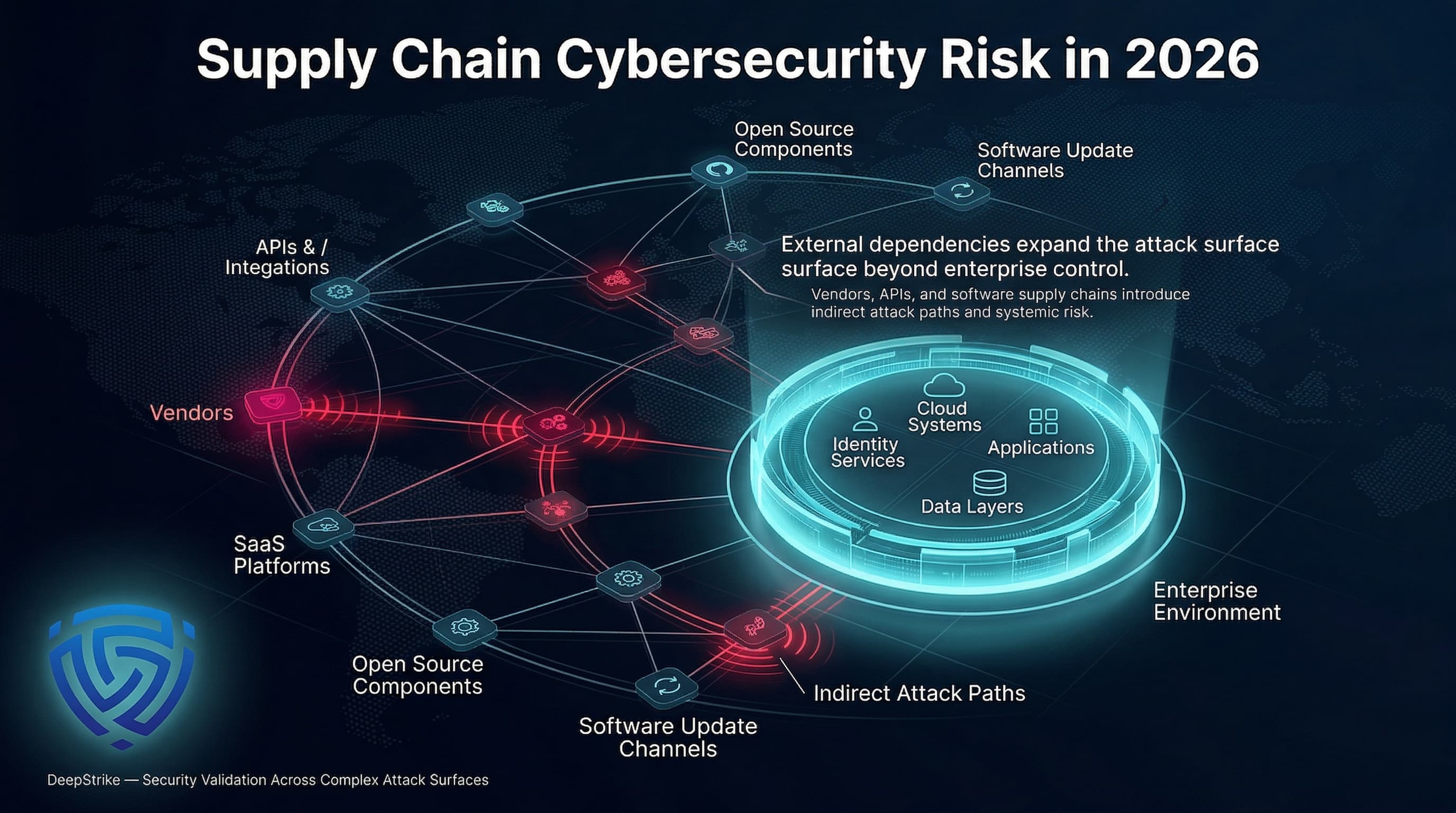

The Department of Defense is acutely aware of these evolving risks. A recent report from the DoD CIO (dated July 14, 2025) emphasizes the increased cybersecurity risks associated with rapid digital modernization and AI adoption. While AI offers significant benefits, the report highlights the necessity of proactive risk management to safeguard critical infrastructure and sensitive data. The rush to integrate AI must be balanced with a clear understanding of the potential attack surface expansion.

Traditional cybersecurity relied heavily on identifying known signatures and patterns. AI-powered attacks actively circumvent this approach. For example, a deepfake video of a CEO requesting a fraudulent wire transfer isn’t detectable by signature-based systems. Similarly, AI-driven vulnerability scanners can uncover zero-day vulnerabilities far faster than manual penetration testing. This isn’t just about faster attacks; it’s about attacks that are fundamentally harder to detect and prevent.

Phishing gets personal

Generative AI, particularly large language models, is dramatically lowering the barrier to entry for highly sophisticated phishing campaigns. Creating convincing, personalized phishing emails used to require significant skill and effort. Now, anyone can generate realistic content tailored to specific individuals or organizations with minimal technical expertise. This means a massive increase in the volume of believable phishing attempts.

The shift is from mass-scale, generic phishing to highly targeted attacks. An attacker can feed a language model information scraped from LinkedIn and other sources to craft an email that references a target’s work history, colleagues, and interests. This level of personalization significantly increases the likelihood of success. We’ve already seen examples of campaigns leveraging AI to generate voice clones for vishing attacks – voice phishing – making it even harder to distinguish between legitimate and malicious communications.

Old filters look for bad links or broken English. AI doesn't make those mistakes. It writes perfect, context-aware emails that use psychological tricks to get a click. We can't rely on 'spot the typo' training anymore; we need to look for anomalies in behavior instead of just scanning text.

- Reporting culture: Encourage staff to report any email that feels off, even if the sender looks right.

- Multi-factor authentication: Implement MFA on all critical accounts.

- Security awareness training: Regularly train employees on the latest phishing techniques.
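
Looking for anomalies in behavior rather than typos can start with the message headers. The sketch below is a minimal illustration, not a production filter: the `KNOWN_SENDERS` directory and the `sender_anomalies` function are hypothetical names invented for this example, and only `email.utils.parseaddr` comes from the Python standard library. It flags a message when a familiar display name arrives from an unfamiliar domain, or when replies would be routed to a different domain than the sender's.

```python
from email.utils import parseaddr

# Hypothetical directory of known contacts: display name -> expected domain.
KNOWN_SENDERS = {
    "dana reyes": "example.com",
}


def sender_anomalies(from_header: str, reply_to_header: str = "") -> list[str]:
    """Return the reasons a message deviates from the sender's usual pattern."""
    reasons = []
    name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()

    # A familiar name paired with an unfamiliar domain is a classic spoofing sign.
    expected = KNOWN_SENDERS.get(name.strip().lower())
    if expected and domain != expected:
        reasons.append(f"'{name}' usually sends from {expected}, not {domain}")

    # Replies routed to a different domain suggest the sender is not who they claim.
    if reply_to_header:
        _, reply_address = parseaddr(reply_to_header)
        reply_domain = reply_address.rpartition("@")[2].lower()
        if reply_domain and reply_domain != domain:
            reasons.append(f"Reply-To domain {reply_domain} differs from {domain}")
    return reasons


if __name__ == "__main__":
    for reason in sender_anomalies("Dana Reyes <dana@examp1e.com>", "pay@other.net"):
        print(reason)
```

A check like this complements content scanning: a perfectly written email still has to come from somewhere, and that origin is harder for an attacker to disguise than spelling.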

Finding flaws at machine speed

AI is accelerating the discovery of vulnerabilities in software and networks. Traditional vulnerability scanning relies on predefined rules and signatures. Machine learning-powered fuzzing techniques, however, can automatically generate and test a wider range of inputs, uncovering edge cases and unexpected behavior that human testers might miss. This significantly reduces the time it takes to find exploitable flaws.

These AI-driven tools can identify zero-day vulnerabilities – flaws unknown to the vendor – before they are publicly disclosed. The speed advantage is considerable. A traditional penetration test might take weeks or months to complete, while an AI-powered scanner can identify critical vulnerabilities in a matter of hours. This proactive approach is essential for staying ahead of attackers.

The challenge lies in filtering the results and prioritizing remediation efforts, as AI-powered scanners can generate a large volume of findings, some of which may be false positives.
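
To make the fuzzing idea concrete, here is a minimal sketch of plain random mutation fuzzing, the baseline that machine learning-guided fuzzers try to improve on by choosing mutations more intelligently. Everything here is hypothetical and built for illustration: `parse_record` is a toy target with a deliberately planted bug, and `mutate` and `fuzz` are names invented for this example.

```python
import random


def parse_record(data: bytes) -> bytes:
    """Toy target: the first byte is a length, the rest is the payload."""
    if not data:
        raise ValueError("empty input")
    length = data[0]
    payload = data[1:1 + length]
    if len(payload) != length:
        raise ValueError("truncated payload")
    # Planted bug for the demo: one marker byte triggers an unhandled error.
    if length >= 4 and payload[0] == 0xFF:
        raise RuntimeError("unhandled marker byte")
    return payload


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one to three random positions with random byte values."""
    data = bytearray(seed)
    for _ in range(rng.randint(1, 3)):
        data[rng.randrange(len(data))] = rng.randrange(256)
    return bytes(data)


def fuzz(seed: bytes, iterations: int, rng_seed: int = 0) -> list[bytes]:
    """Return the mutated inputs that made the target fail unexpectedly."""
    rng = random.Random(rng_seed)
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            parse_record(candidate)
        except ValueError:
            pass  # clean rejection of malformed input is expected behavior
        except Exception:
            crashes.append(candidate)  # anything else is worth investigating
    return crashes


if __name__ == "__main__":
    found = fuzz(b"\x04abcd", iterations=20000)
    print(f"{len(found)} crashing inputs found")
```

The split between `ValueError` and everything else is the triage step in miniature: expected rejections are discarded, and only unexpected failures are kept for a human to review.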

Malware that learns to hide

Malware is becoming increasingly sophisticated through the use of machine learning. Traditional malware has a fixed code pattern, so antivirus software can detect it relatively easily by matching static signatures. However, modern malware can adapt its behavior to evade detection, learn from its environment, and even repair itself. This makes it significantly more difficult to defend against.

Polymorphic and metamorphic malware have long been challenges for security professionals. Polymorphic malware changes its appearance with each infection, typically by re-encrypting its payload under a new key, while metamorphic malware rewrites its own code body from one generation to the next. AI amplifies these techniques, allowing malware to evolve more rapidly and intelligently. The malware can analyze its environment and modify its behavior to avoid detection by specific security tools.

CISA provides guidance on securing AI systems, emphasizing the need for robust data security practices and ongoing monitoring. They recommend implementing strong access controls, encrypting sensitive data, and regularly auditing AI systems for vulnerabilities. Ultimately, a layered defense strategy is crucial for mitigating the risk of adaptive malware.

- Implement endpoint detection and response (EDR) solutions.

- Utilize threat intelligence feeds to stay informed about the latest malware threats.

- Regularly update antivirus software and operating systems.

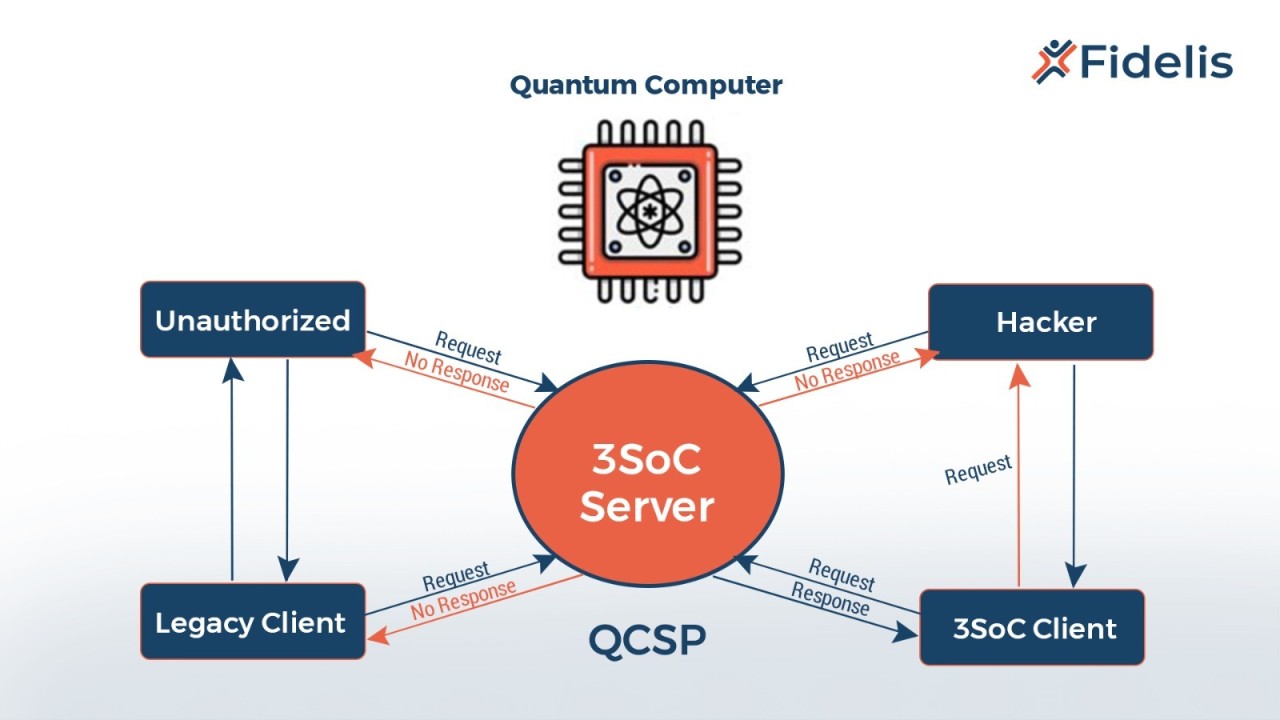

The defensive side of machine learning

Machine learning isn't just enabling attacks; it's also a powerful tool for defense. Intrusion detection systems (IDS) and security information and event management (SIEM) systems are increasingly leveraging machine learning to identify anomalous behavior and detect potential threats. These systems can learn from historical data and identify patterns that indicate malicious activity.

Anomaly detection is particularly effective at identifying novel attacks that haven't been seen before. By establishing a baseline of normal behavior, machine learning algorithms can flag any deviations that might indicate a security breach. This is a significant advantage over traditional signature-based detection, which can only detect known threats. However, it’s important to understand that AI-based security isn't foolproof.

AI systems can be fooled by adversarial examples – carefully crafted inputs designed to evade detection. There's a constant arms race between attackers and defenders, with each side trying to outsmart the other. Relying solely on AI for security is a mistake; human oversight and continuous monitoring are essential. A balanced approach that combines the strengths of both humans and machines is the most effective strategy.
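
The baseline idea behind anomaly detection can be sketched with a simple z-score check. This is a deliberately small illustration, assuming a single metric such as logins per hour. The function name `find_anomalies` and the threshold of three standard deviations are choices made for this example, and real systems model many features at once.

```python
from statistics import mean, stdev


def find_anomalies(baseline: list[float], observed: list[float],
                   threshold: float = 3.0) -> list[float]:
    """Flag observations that sit far outside the baseline's normal range."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return [x for x in observed if x != mu]
    return [x for x in observed if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    normal_logins_per_hour = [10, 12, 11, 9, 10, 11, 12, 10]
    todays_readings = [11, 10, 48]
    print(find_anomalies(normal_logins_per_hour, todays_readings))
```

The same sketch also shows why such systems can be evaded: an attacker who keeps activity just under the threshold, or who slowly shifts the baseline over time, never triggers the flag.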

Comparison of Machine Learning-Based Security Approaches (2026 Outlook)

| Detection Approach | Detection Rate | False Positive Rate | Computational Demand | Novel Threat Detection |
|---|---|---|---|---|
| Anomaly Detection | Generally Good | Moderate to Higher | Moderate | Better for identifying zero-day exploits |
| Signature-Based Detection (ML Enhanced) | Good for known threats | Lower | Lower | Limited ability to detect previously unseen attacks |
| Behavioral Analysis | Good, improves over time | Moderate | Higher | Strong for identifying compromised accounts and insider threats |
| Reinforcement Learning for Intrusion Detection | Potentially Very Good | Moderate | Very High | Adaptable, but requires extensive training data |
| Generative Adversarial Networks (GANs) for Threat Simulation | Good for testing defenses | N/A - Used for proactive defense | High | Excellent for identifying vulnerabilities before attacks |
| Deep Learning for Malware Classification | Very Good for known families | Moderate | High | Effective, but can be evaded by polymorphic malware |
| Autoencoders for Network Traffic Analysis | Good for identifying deviations | Moderate | Moderate | Useful for spotting unusual network patterns |

Qualitative comparison based on the article research brief. Confirm current product details in the official docs before making implementation choices.

AI-Driven Threat Hunting

Proactive threat hunting is becoming increasingly important in the face of AI-powered attacks. Security teams can use AI to analyze large datasets – network traffic, system logs, endpoint data – and identify patterns that might indicate a hidden threat. This goes beyond simply reacting to alerts; it's about actively searching for malicious activity.

AI can also be used for security orchestration, automation, and response (SOAR). SOAR platforms automate repetitive tasks, such as investigating alerts and containing threats. This frees up security analysts to focus on more complex and strategic work. Automating threat response reduces the time it takes to mitigate a breach, minimizing the potential damage.

The benefits of automating threat response are significant. Faster response times, reduced workload for security teams, and improved accuracy are all key advantages. However, it’s crucial to carefully configure SOAR platforms to avoid false positives and ensure that automated actions don’t disrupt legitimate business operations.
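
One way to picture that careful configuration is a guardrail in front of every automated action. The sketch below does not use any real SOAR product's API. The `Alert` class, the `decide_action` function, the asset names, and the 0.9 confidence cutoff are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical policy values; tune them to your own environment.
AUTO_CONTAIN_CONFIDENCE = 0.9
PROTECTED_ASSETS = {"payroll-db", "domain-controller"}


@dataclass
class Alert:
    asset: str
    confidence: float  # detector's estimate that the alert is real, from 0 to 1


def decide_action(alert: Alert) -> str:
    """Choose between automatic containment and a human hand-off."""
    if alert.asset in PROTECTED_ASSETS:
        # Never auto-isolate business-critical systems; a person decides.
        return "escalate_to_analyst"
    if alert.confidence >= AUTO_CONTAIN_CONFIDENCE:
        return "isolate_host"
    # Low-confidence alerts are queued rather than acted on.
    return "open_ticket"


if __name__ == "__main__":
    print(decide_action(Alert(asset="laptop-114", confidence=0.95)))
    print(decide_action(Alert(asset="payroll-db", confidence=0.99)))
```

Keeping the policy in a few named constants makes it easy to audit which actions the platform may take without a person in the loop.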

Building AI Resilience: A Practical Checklist

Improving your organization’s AI security posture requires a multi-faceted approach. Start with data security: implement strong access controls, encrypt sensitive data at rest and in transit, and regularly back up your systems. This is fundamental to protecting your AI models and the data they rely on.

Model hardening techniques are also essential. This includes adversarial training – exposing your models to adversarial examples to make them more robust – and input validation – ensuring that the data fed into your models is clean and trustworthy. Regular model monitoring is crucial to detect drift and anomalies.
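
Drift monitoring can be sketched with the Population Stability Index (PSI), a common way to compare the distribution a model was trained on with what it sees in production. This is an illustrative implementation under simple assumptions: equal-width bins taken from the training data, and a small floor to avoid dividing by zero. The function name `psi` is a choice for this example, and the often-quoted alert level of 0.2 is a rule of thumb rather than a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp outliers into the edge bins
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    training_scores = [i / 100 for i in range(100)]
    live_scores = [0.5 + i / 200 for i in range(100)]
    print(f"PSI = {psi(training_scores, live_scores):.2f}")
```

A PSI near zero means the live data still looks like the training data, while a large value is a cue to investigate whether the inputs have changed or are being manipulated.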

Finally, remember that human oversight is paramount. AI-powered security tools are valuable, but they are not a replacement for skilled security professionals. Continuous learning and adaptation are essential to stay ahead of the evolving threat landscape. Regularly review and update your security policies and procedures to reflect the latest threats and best practices.

- Data encryption: Encrypt sensitive data at rest and in transit.

- Access controls: Implement strong access controls to limit who can access AI systems and data.

- Regular backups: Regularly back up your systems to ensure data recovery in the event of a breach.

- Adversarial training: Expose your models to adversarial examples during training to make them more robust.

- Input validation: Validate all inputs to your AI models to prevent malicious data from being processed.
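
As a small illustration of the input validation item, the sketch below rejects records with missing, non-numeric, out-of-range, or unexpected features before they reach a model. The `FEATURE_RANGES` schema and the feature names are hypothetical, made up for this example. A real deployment would derive its ranges from training data.

```python
import math

# Hypothetical schema: feature name -> (minimum, maximum) allowed value.
FEATURE_RANGES = {
    "bytes_sent": (0, 1e9),
    "failed_logins": (0, 1000),
}


def validate_features(features: dict) -> list[str]:
    """Return a list of problems; an empty list means the record is acceptable."""
    errors = []
    for name, (low, high) in FEATURE_RANGES.items():
        value = features.get(name)
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            errors.append(f"{name}: missing or not numeric")
        elif math.isnan(value) or not low <= value <= high:
            errors.append(f"{name}: {value} outside [{low}, {high}]")
    # Fields the model was never trained on are rejected rather than ignored.
    unexpected = set(features) - set(FEATURE_RANGES)
    errors.extend(f"{name}: unexpected feature" for name in sorted(unexpected))
    return errors


if __name__ == "__main__":
    print(validate_features({"bytes_sent": 5120, "failed_logins": -3}))
```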
