The rising threat of deepfakes

Deepfakes have rapidly moved beyond simple face-swaps and are now becoming a serious cybersecurity concern. What started as a novelty – often seen in entertainment – is evolving into a potent tool for malicious actors. We’re seeing the creation of increasingly convincing synthetic media, encompassing not just video, but also audio and even fabricated documents.

Accessible creation tools are a major driver of this trend. Generating a convincing fake once required significant technical expertise; it is now becoming easier and cheaper. Software packages available online dramatically lower the barrier to entry, allowing people with limited skills to create sophisticated forgeries. This democratization of the technology is alarming.

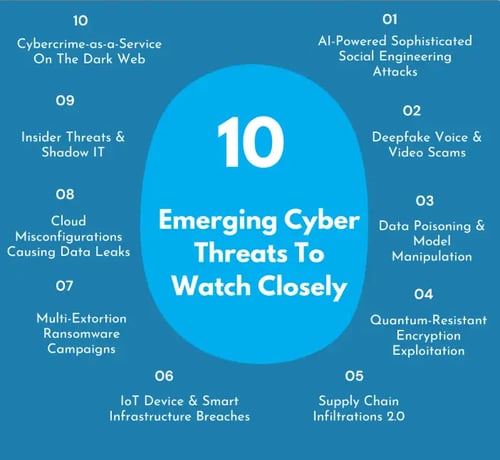

HackerDesk’s April 2026 report shows deepfakes are now standard tools for phishing and financial fraud. We've moved past the novelty phase; these are active weapons used to drain corporate accounts. If your security team isn't treating synthetic audio as a primary threat vector, you're already behind.

How deepfakes are built

At the heart of most deepfake technology are Generative Adversarial Networks, or GANs. These systems pit two neural networks against each other – a generator and a discriminator. The generator creates synthetic content, while the discriminator attempts to distinguish between real and fake data. Through repeated iterations, the generator learns to create increasingly realistic outputs.
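To make the generator-versus-discriminator contest concrete, here is a minimal sketch of the two loss values each network would try to minimize. It assumes the discriminator outputs a probability that a sample is real and uses the common non-saturating generator loss; the function names are illustrative and not taken from any particular library.

```python
import math

EPS = 1e-12  # keeps log() away from exactly 0 or 1


def _safe_log(p):
    """Natural log of p, clamped so p stays strictly inside (0, 1)."""
    return math.log(min(max(p, EPS), 1.0 - EPS))


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator outputs (probability of "real") on real samples.
    d_fake: discriminator outputs on samples produced by the generator.
    """
    real_term = -sum(_safe_log(p) for p in d_real) / len(d_real)
    fake_term = -sum(_safe_log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(_safe_log(p) for p in d_fake) / len(d_fake)


# Early in training the discriminator spots fakes easily (low scores),
# so the generator's loss is high.
early = generator_loss([0.05, 0.10])

# Later the discriminator is close to guessing, so the generator's loss falls.
late = generator_loss([0.45, 0.55])
```

In a real system both networks are trained by gradient descent on these quantities in alternation; the sketch only shows what "getting better at fooling the discriminator" means numerically.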

Autoencoders also play a significant role, particularly in face-swapping. These algorithms learn to compress and reconstruct data, identifying key features that define a person’s appearance. By swapping the encoded features between two individuals, a convincing fake can be produced. The quality of the result depends heavily on the amount and quality of training data.

Audio deepfakes are also becoming more prevalent, presenting unique challenges. These often rely on techniques like voice cloning, where an AI model learns to replicate a person’s speech patterns and vocal characteristics. Detecting these fakes is particularly difficult, as subtle inconsistencies can be easily overlooked. The combination of visual and audio deepfakes creates an even more convincing – and dangerous – deception.

Why current detection fails

Several deepfake detection techniques are currently in use, but none are foolproof. Physiological signal analysis attempts to detect inconsistencies in subtle cues like blinking rate or blood flow, which are difficult to replicate in synthetic media. Artifact detection focuses on identifying telltale signs of manipulation, such as blurring or distortions in the image or audio.
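As a rough illustration of the blinking-rate idea, the sketch below counts blinks from a per-frame eye aspect ratio (EAR) series and flags clips whose blink rate falls outside an expected band. It assumes some upstream face-landmark step has already produced the EAR values; the thresholds and the 5 to 40 blinks-per-minute band are illustrative assumptions, not validated constants.

```python
def count_blinks(ear_series, closed_threshold=0.2, min_closed_frames=2):
    """Count blinks in a per-frame eye-aspect-ratio (EAR) series.

    A blink is a run of at least `min_closed_frames` consecutive frames
    whose EAR is below `closed_threshold` (both values are assumptions).
    """
    blinks = 0
    run = 0
    for ear in ear_series:
        if ear < closed_threshold:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    if run >= min_closed_frames:  # clip ended while the eyes were closed
        blinks += 1
    return blinks


def blinks_per_minute(ear_series, fps):
    """Blink rate for a clip recorded at `fps` frames per second."""
    if not ear_series:
        raise ValueError("ear_series must contain at least one frame")
    duration_min = len(ear_series) / fps / 60.0
    return count_blinks(ear_series) / duration_min


def looks_suspicious(ear_series, fps, low=5.0, high=40.0):
    """Flag clips whose blink rate is outside the assumed [low, high] band."""
    rate = blinks_per_minute(ear_series, fps)
    return rate < low or rate > high


# One minute at 30 fps with a 3-frame blink every 4 seconds.
natural = ([0.3] * 117 + [0.1] * 3) * 15
# One minute in which the subject never blinks.
unblinking = [0.3] * 1800
```

A check like this is only one weak signal. Newer generators reproduce blinking, so it would sit alongside other detectors rather than replace them.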

AI-powered detectors, trained on vast datasets of real and fake content, represent another approach. These systems analyze patterns and anomalies to identify potential deepfakes. However, their effectiveness is limited by the constant evolution of deepfake technology. As creators develop more sophisticated techniques, detectors struggle to keep pace.

It's an arms race. Every time a detection tool gets better, creators find a workaround. VIPRE recently flagged AI-native malware that specifically targets the vulnerabilities in these detection systems. Most current tools are reactive: they only catch what they've seen before, leaving a massive gap for new, 'zero-day' deepfakes.

Using behavior as a fingerprint

Behavioral biometrics offer a promising avenue for more robust deepfake detection. Unlike visual or audio features, which can be convincingly replicated, unique patterns in how people move, speak, and interact are far more difficult to forge. This approach analyzes subtle cues like typing rhythm, gait, and even mouse movements.

For example, a system might analyze the way a person pauses during speech, the specific inflections they use, or the micro-expressions they exhibit. Because these patterns are rooted in a person’s individual physiology and lived experience, they are deeply ingrained and difficult for an AI to mimic accurately.
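The typing-rhythm cue mentioned above can be sketched in a few lines. This is a deliberately simple model: it enrolls a user from the gaps between keystrokes and scores a new session by how far its average gap sits from that profile. The function names, the z-score rule, and the sample timings are all assumptions made for illustration.

```python
from statistics import mean, stdev


def inter_key_intervals(timestamps):
    """Gaps (in seconds) between consecutive key-press timestamps."""
    return [b - a for a, b in zip(timestamps, timestamps[1:])]


def enroll(sessions):
    """Build a (mean, standard deviation) profile from enrollment sessions.

    Assumes the sessions contain enough varied intervals for the
    standard deviation to be non-zero.
    """
    intervals = [iv for s in sessions for iv in inter_key_intervals(s)]
    return mean(intervals), stdev(intervals)


def anomaly_score(profile, timestamps):
    """Distance of a session's mean gap from the profile, in standard deviations."""
    mu, sigma = profile
    session_mean = mean(inter_key_intervals(timestamps))
    return abs(session_mean - mu) / sigma


# Hypothetical enrollment data: key-press times from two sessions.
profile = enroll([
    [0.00, 0.18, 0.40, 0.59, 0.80],
    [0.00, 0.21, 0.40, 0.62, 0.81],
])

genuine = anomaly_score(profile, [0.00, 0.20, 0.41, 0.60])   # similar rhythm
impostor = anomaly_score(profile, [0.00, 0.05, 0.10, 0.15])  # much faster typing
```

A production system would use richer features (per-key-pair timings, hold durations) and would have to tolerate the context-dependent variation in behavior that leads to false positives.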

However, behavioral biometrics aren't without their challenges. Data privacy is a major concern, as collecting and analyzing this type of information raises ethical questions. Furthermore, individual behavior can vary based on context and emotional state, potentially leading to false positives. Careful consideration needs to be given to data security and user consent.

AI-powered countermeasures: the next generation

The future of deepfake detection lies in advanced AI-powered countermeasures. Emerging methods go beyond simply identifying fakes and aim to understand why a system flags something as suspicious. This is where explainable AI (XAI) comes into play. XAI provides insights into the decision-making process of the AI, allowing humans to validate the results and build trust.
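One simple way to get this kind of explanation is occlusion (ablation) attribution: replace each input signal with a neutral baseline and record how much the detector's score drops. The sketch below applies it to a stand-in detector; `toy_detector`, its feature names, and its weights are hypothetical and exist only to make the example runnable.

```python
def occlusion_attribution(score_fn, features, baseline=0.0):
    """Score drop caused by replacing each feature with `baseline`.

    A larger drop means the feature contributed more to the verdict.
    """
    full_score = score_fn(features)
    attributions = {}
    for name in features:
        occluded = dict(features)
        occluded[name] = baseline
        attributions[name] = full_score - score_fn(occluded)
    return attributions


def toy_detector(f):
    """Hypothetical detector: a weighted sum of three anomaly signals."""
    return (0.6 * f["blink_anomaly"]
            + 0.3 * f["lip_sync_error"]
            + 0.1 * f["compression_noise"])


clip = {"blink_anomaly": 0.9, "lip_sync_error": 0.2, "compression_noise": 0.5}
attributions = occlusion_attribution(toy_detector, clip)

# The signal a human reviewer should look at first.
top_reason = max(attributions, key=attributions.get)
```

For a real neural detector the same idea is usually applied to image patches or audio segments rather than named features, and the result is shown to a human reviewer alongside the verdict.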

Federated learning is another exciting development. This technique allows detection models to be trained on decentralized data sources without compromising data privacy. Instead of collecting sensitive data in a central location, the model is trained locally on each device, and only the learned parameters are shared. This approach addresses privacy concerns and allows for more diverse training datasets.
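The parameter-sharing step described above is often implemented as a weighted average of the locally trained parameters, known as federated averaging. The following is a minimal sketch that assumes each device reports a flat list of parameters plus the number of examples it trained on; the data layout is an assumption for illustration.

```python
def federated_average(client_updates):
    """Combine locally trained parameters without seeing any raw data.

    client_updates: list of (parameters, num_examples) pairs, where
    `parameters` is a flat list of floats of the same length for every client.
    Clients that trained on more examples get proportionally more weight.
    """
    total_examples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [
        sum(params[i] * n for params, n in client_updates) / total_examples
        for i in range(dim)
    ]


# Two hypothetical devices: one trained on 100 clips, one on 300.
global_params = federated_average([
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
])
```

Only the parameter lists cross the network. In practice this step is repeated over many rounds, and shared parameters can still leak some information, which is why it is often combined with additional protections.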

We’re also seeing research into using AI to analyze the creation process of deepfakes, looking for subtle artifacts or inconsistencies left by the underlying algorithms. This approach aims to identify the tools and techniques used to create the fake, providing valuable clues for detection. Combining XAI with federated learning is particularly promising: the first makes a detector's verdicts auditable, and the second lets it learn from data that never leaves the device.

The key is to move beyond pattern recognition to genuine understanding. If a system can explain why it believes something is a deepfake, humans can check its reasoning, which makes its verdicts easier to trust and its mistakes easier to catch. This also allows for continuous improvement as new deepfake techniques emerge.

Hardening the enterprise

Protecting your organization from deepfake threats requires a layered approach. Employee training is paramount. Staff need to be educated about the risks of deepfakes and how to identify potential scams. This includes recognizing suspicious requests, verifying the identity of individuals, and being wary of unsolicited communications.

Implementing media authentication protocols is also crucial. This involves using technologies like digital watermarks or blockchain-based verification systems to ensure the authenticity of digital content. However, these technologies are not yet widely adopted and can be circumvented.
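The core idea behind media authentication, binding a file to a tag that changes if the file is altered, can be shown with a keyed hash. Note that this sketch is neither a digital watermark nor a blockchain: it swaps in HMAC-SHA256 from the Python standard library as the simplest stand-in, and it assumes publisher and verifier already share a secret key.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Return a hex tag that authenticates `media_bytes` under `key`."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, key: bytes, tag: str) -> bool:
    """Check a tag using a constant-time comparison."""
    expected = sign_media(media_bytes, key)
    return hmac.compare_digest(expected, tag)


# Hypothetical key and content, for illustration only.
shared_key = b"replace-with-a-randomly-generated-secret"
original = b"raw bytes of the CEO's video statement"
tag = sign_media(original, shared_key)

untouched_ok = verify_media(original, shared_key, tag)
tampered_ok = verify_media(original + b" (edited)", shared_key, tag)
```

Public distribution would call for asymmetric signatures instead, so that anyone can verify without being able to sign. Either way, the scheme only proves that a file is unchanged since signing, not that its content was truthful to begin with.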

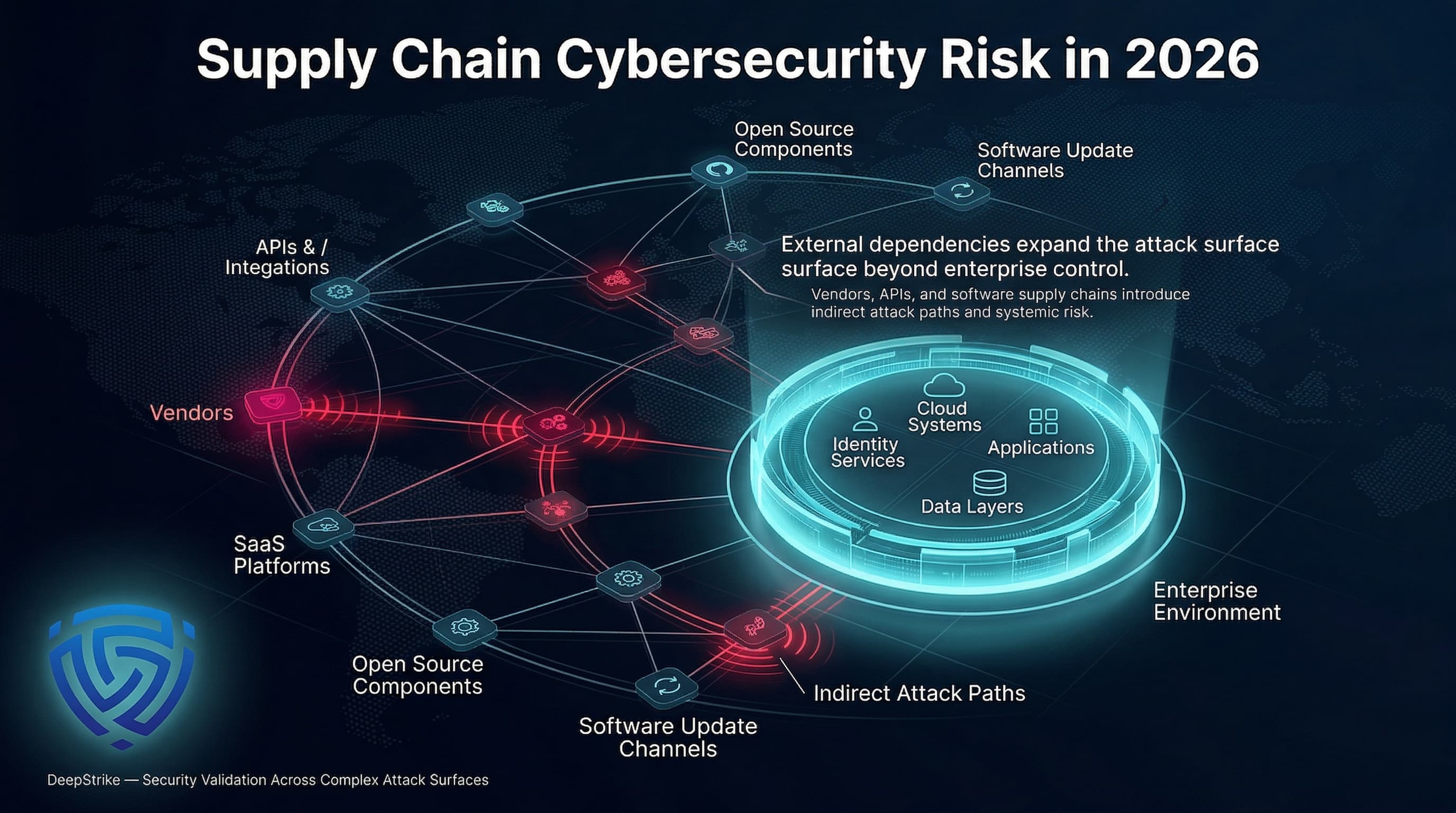

Integration with existing security infrastructure is essential. Deepfake detection tools should be integrated with your email security systems, social media monitoring platforms, and fraud detection systems. HackerDesk’s report on cyber threats in 2026 highlights the importance of robust endpoint protection and network segmentation. These measures can help to contain the damage if a deepfake attack is successful.

Organizations should also establish clear policies for handling potentially compromised data and reporting suspicious activity. A proactive and vigilant approach is the best defense against this evolving threat.

The legal and ethical landscape

The legal implications of deepfakes are complex and evolving. Deepfakes can be used to commit defamation, fraud, and intellectual property violations. Victims may have legal recourse, but proving damages and identifying the perpetrators can be challenging. The legal framework surrounding deepfakes is still catching up with the technology.

Ethical considerations are equally important. Deepfake detection systems have the potential for bias, leading to false positives and unfairly targeting individuals. There’s also the risk of censorship, as legitimate content could be mistakenly flagged as fake. A careful balance must be struck between security and freedom of expression.

While victims can technically sue for defamation or fraud, identifying a perpetrator behind a decentralized AI model is a nightmare. With legal frameworks lagging, companies need to set internal policies on data accountability now rather than waiting for the courts to catch up.
