The quantum threat

For decades, the security of our digital world has rested on the mathematical difficulty of certain problems: factoring large numbers, the basis for RSA encryption, and computing discrete logarithms, the foundation of Elliptic Curve Cryptography (ECC). These algorithms protect everything from online banking to government communications. But this security is not guaranteed forever.

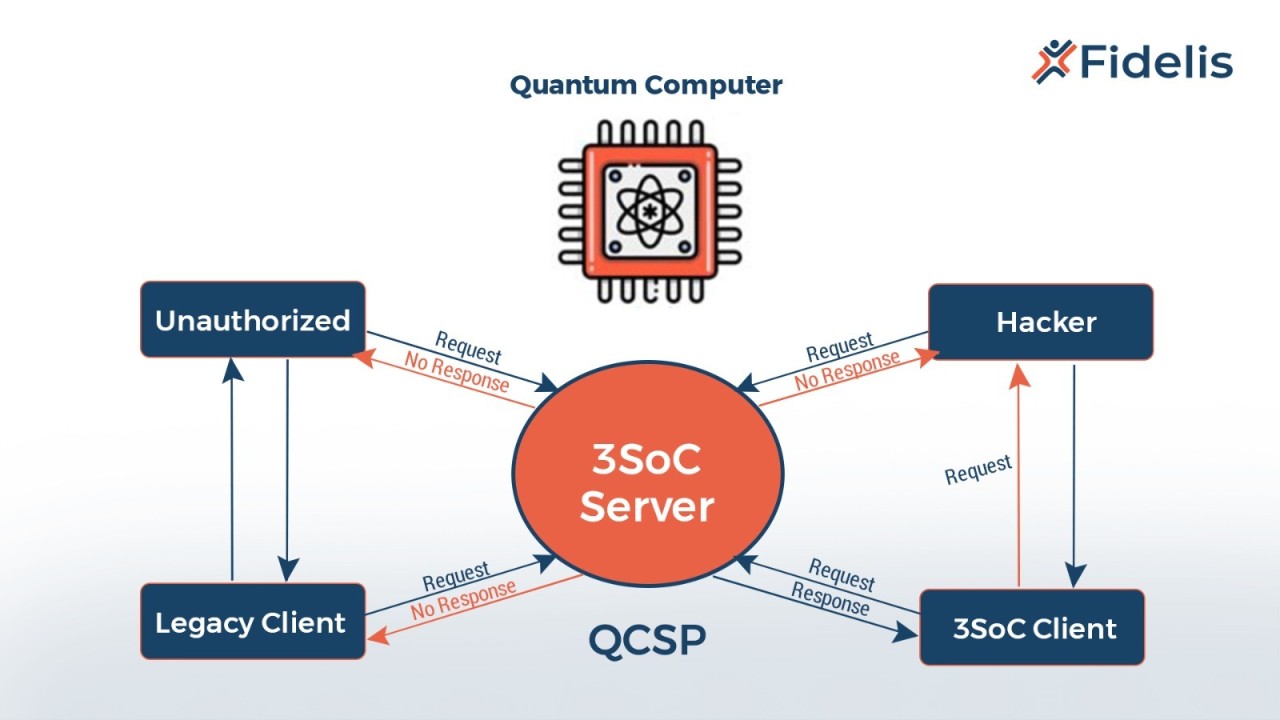

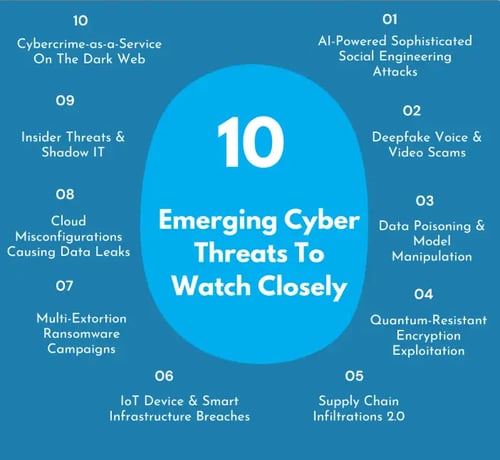

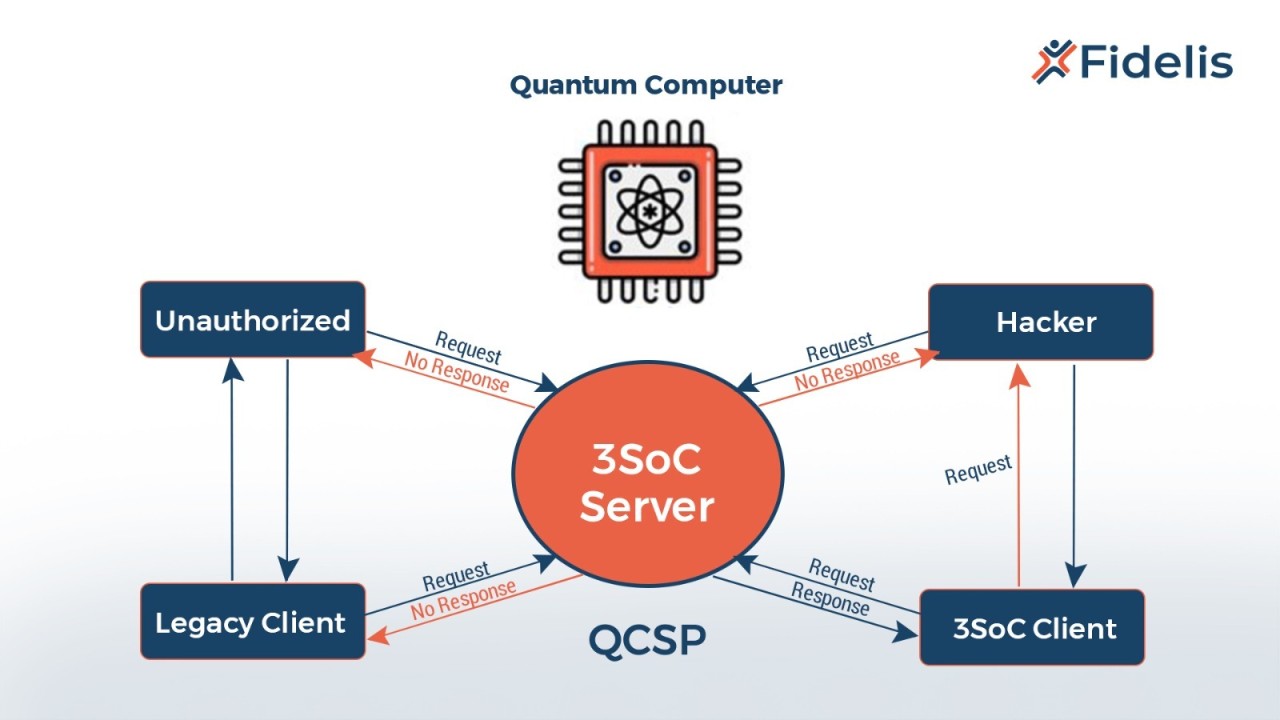

The emergence of quantum computing poses a significant threat. Unlike classical computers that store information as bits representing 0 or 1, quantum computers use qubits. These qubits can exist in a superposition of both states simultaneously, allowing quantum computers to perform certain calculations exponentially faster than their classical counterparts. This speed advantage has serious implications for cryptography.

Shor’s algorithm, developed by mathematician Peter Shor in 1994, demonstrates exactly this threat. It’s a quantum algorithm capable of efficiently factoring large numbers and solving the discrete logarithm problem. This means a sufficiently powerful quantum computer could break RSA and ECC, rendering much of our current encryption useless. The concern isn’t theoretical; development in quantum computing is accelerating.
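To see why fast period finding breaks factoring, here is a minimal classical sketch of the reduction that Shor’s algorithm relies on. The function names are illustrative, and the order-finding loop is brute force: it is the one step a quantum computer would perform efficiently, so this code only works for tiny numbers.

```python
from math import gcd

def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step Shor's algorithm performs efficiently on a quantum computer.
    Assumes gcd(a, n) == 1, otherwise the loop never terminates.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_via_order(n: int, a: int):
    """Try to split n using the order of a modulo n; returns a factor pair or None."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # lucky guess: a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: retry with a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: retry with a different a
    p = gcd(half - 1, n)
    return p, n // p

print(factor_via_order(15, 7))  # (3, 5)
```

For a 2048-bit RSA modulus the brute-force loop is hopeless, which is exactly the gap a large quantum computer would close.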

The date often cited for this threat materializing is around 2026. This isn't a hard deadline, but a point where some experts believe quantum computers could become powerful enough to compromise commonly used encryption. The US government, recognizing this risk, has been actively preparing for this shift. It’s not about a distant future; it’s about a challenge rapidly approaching.

NIST standards for post-quantum security

Recognizing the coming threat, the National Institute of Standards and Technology (NIST) launched a process in 2016 to standardize post-quantum cryptography (PQC). This was a multi-year effort involving public submissions, rigorous analysis, and extensive testing of candidate algorithms. The goal was to identify cryptographic schemes resistant to attacks from both classical and quantum computers.

NIST announced its first selections in 2022 and published the first finalized standards in 2024. The selections included CRYSTALS-Kyber for key encapsulation (the process of securely establishing shared encryption keys) and CRYSTALS-Dilithium and FALCON for digital signatures, used to verify the authenticity and integrity of data. In the 2024 standards, CRYSTALS-Kyber became ML-KEM (FIPS 203) and CRYSTALS-Dilithium became ML-DSA (FIPS 204). These selections weren’t made lightly; they represent the culmination of years of research and scrutiny.

NIST highlighted CRYSTALS-Kyber's comparatively small keys and its speed. For signatures, NIST recommends CRYSTALS-Dilithium as the primary algorithm, with FALCON for applications that need smaller signatures. These aren't just the 'best' options; they are a suite of tools with different trade-offs for specific security needs.

Other candidates are still under review for future rounds. Flexibility is necessary as research evolves, but these initial standards give us a starting point for the transition.

How the new algorithms work

The new NIST standards represent a departure from the number-theoretic foundations of RSA and ECC. Instead, they rely on different mathematical problems believed to be hard even for quantum computers. CRYSTALS-Kyber, for example, is based on lattice-based cryptography. Its security rests on the hardness of lattice problems, such as finding short vectors within a high-dimensional lattice, which are thought to be computationally intractable.
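The idea of hiding a secret behind small noise can be illustrated with a toy encryption scheme built on the Learning With Errors (LWE) problem. This is a teaching sketch, not Kyber: Kyber works over structured module lattices with much larger parameters, and the dimensions and function names below are assumptions chosen only to keep the example readable. Only the modulus 3329 matches Kyber.

```python
import random

Q = 3329   # modulus (the value Kyber uses; everything else here is toy-sized)
N = 8      # secret dimension, far too small to be secure
M = 16     # number of public LWE samples

def keygen(rng):
    """Return (secret, public samples), where each sample is (a, <a, s> + e mod Q)."""
    secret = [rng.randrange(Q) for _ in range(N)]
    samples = []
    for _ in range(M):
        a = [rng.randrange(Q) for _ in range(N)]
        e = rng.choice([-1, 0, 1])  # small noise that hides the secret
        b = (sum(x * s for x, s in zip(a, secret)) + e) % Q
        samples.append((a, b))
    return secret, samples

def encrypt(samples, bit, rng):
    """Add up a random subset of samples and shift by Q/2 to encode a 1."""
    subset = [s for s in samples if rng.random() < 0.5]
    u = [sum(a[i] for a, _ in subset) % Q for i in range(N)]
    v = (sum(b for _, b in subset) + bit * (Q // 2)) % Q
    return u, v

def decrypt(secret, ciphertext):
    """Remove <u, s>; what remains is the accumulated noise plus the encoded bit."""
    u, v = ciphertext
    d = (v - sum(x * s for x, s in zip(u, secret))) % Q
    return 1 if Q // 4 < d < 3 * Q // 4 else 0  # near Q/2 means 1, near 0 means 0

rng = random.Random(2026)
secret, samples = keygen(rng)
print([decrypt(secret, encrypt(samples, bit, rng)) for bit in (0, 1, 1, 0)])
```

Decryption works because the accumulated noise (at most 16 here) stays far below Q/4. An attacker who sees only the samples has to recover the secret from noisy equations, which is the lattice problem believed to be hard.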

Lattice-based cryptography offers strong security guarantees and relatively good performance. However, it does come with larger key and ciphertext sizes compared to traditional methods. This can be a consideration for bandwidth-constrained environments. CRYSTALS-Dilithium and FALCON are signature schemes that also build on lattices, though each uses a different construction.

CRYSTALS-Dilithium builds its signatures on the "Module-LWE" and "Module-SIS" problems, both lattice-based. FALCON, whose name stands for "Fast-Fourier Lattice-based Compact Signatures over NTRU", uses NTRU lattices together with fast Fourier sampling. Both provide robust digital signature capabilities, but they differ in their performance characteristics and key sizes. FALCON, notably, aims for smaller signature sizes.

These algorithms aren’t simply replacements for RSA and ECC; they operate on different principles. Understanding these differences is crucial for effective implementation. While the underlying math is complex, the key takeaway is that they rely on problems believed to be resistant to attacks from both classical and quantum computers, offering a path toward long-term digital security.

Migration timelines and hurdles

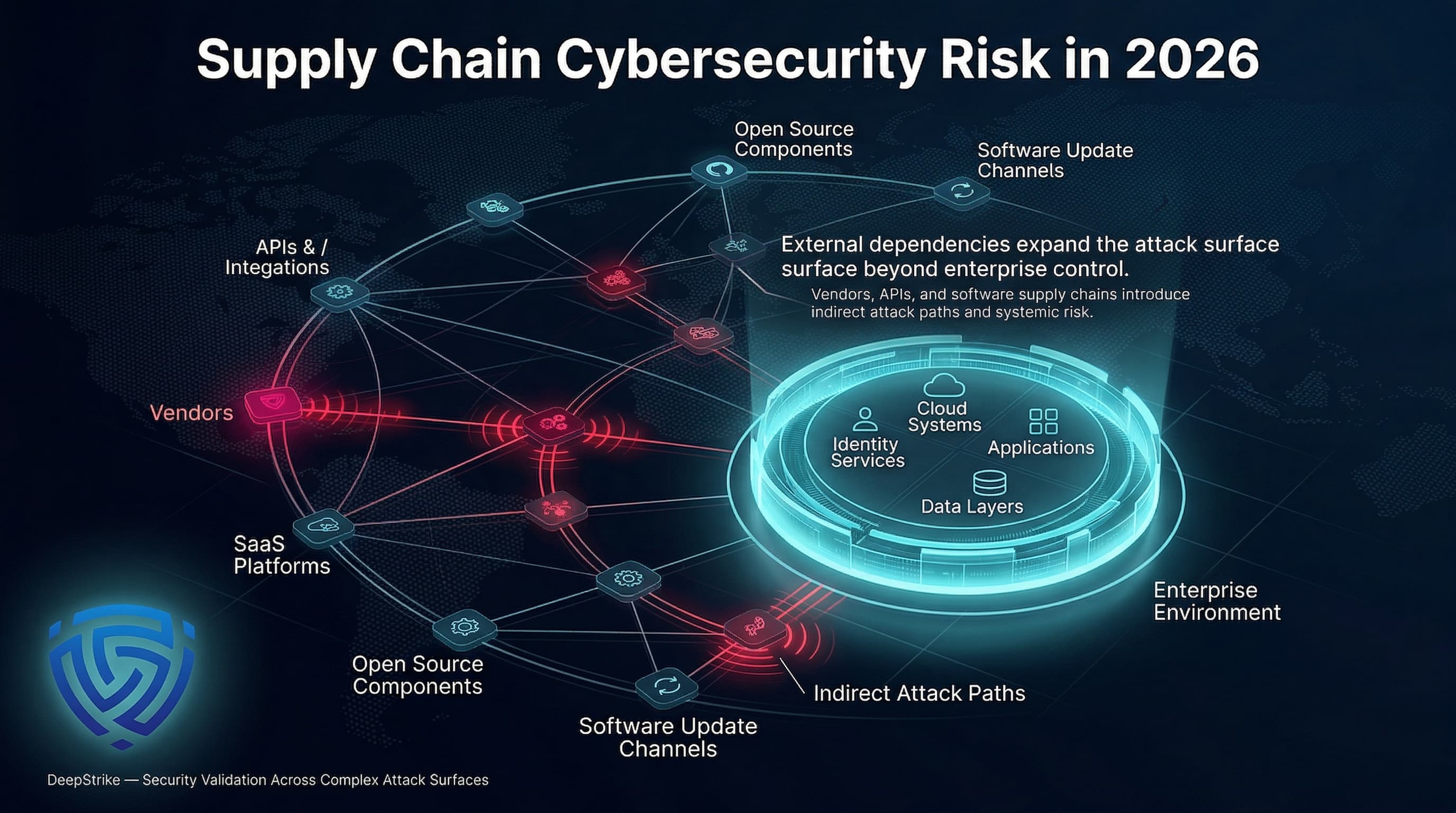

Migrating to PQC isn’t a simple software update. It requires a comprehensive overhaul of cryptographic infrastructure. Software needs to be updated to support the new algorithms, hardware security modules (HSMs) may need to be replaced, and communication protocols must be adapted. This is a significant undertaking, demanding substantial resources and expertise.

The cost of this transition will be considerable. Organizations will need to invest in new technologies, training, and testing. The complexity arises from the widespread use of cryptography in countless systems and applications. Identifying all affected areas and coordinating the upgrade process is a major challenge. As the defense.gov source details, this is a large-scale effort.

Different migration strategies are possible. A hybrid approach, where PQC algorithms are used alongside existing classical algorithms, offers a gradual transition path. Phased rollouts, prioritizing critical systems and applications, can mitigate risk. Another strategy is to focus on "crypto-agility" – designing systems that can easily switch between different cryptographic algorithms.
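A hybrid key exchange can be sketched as follows: run a classical exchange and a PQC exchange side by side, then derive the session key from both shared secrets. The sketch below uses only the Python standard library and implements HKDF (RFC 5869) by hand. The function names and the `info` label are illustrative assumptions, and the two input secrets are placeholders for the outputs of real exchanges such as X25519 and ML-KEM.

```python
import hashlib
import hmac

def hkdf_sha256(key_material: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256 and an all-zero salt."""
    prk = hmac.new(b"\x00" * 32, key_material, hashlib.sha256).digest()  # extract
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                             # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]

def hybrid_secret(classical_secret: bytes, pq_secret: bytes) -> bytes:
    """Derive one session key from two fixed-length shared secrets."""
    # Concatenating both inputs means an attacker has to break
    # both exchanges to recover the derived key.
    return hkdf_sha256(classical_secret + pq_secret, info=b"hybrid-kex-demo")

# Placeholder secrets standing in for the outputs of the two real exchanges.
session_key = hybrid_secret(b"\x01" * 32, b"\x02" * 32)
print(session_key.hex())
```

The design choice here is conservative: if the newer PQC algorithm turns out to have a flaw, the classical exchange still protects the session, and the reverse holds once quantum attacks become practical.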

Given the scale of the task, a complete transition by 2026 is unlikely for many organizations. However, starting the planning and implementation process now is essential. Prioritizing long-lived secrets and systems with high security requirements is a good starting point. Proactive preparation is the best defense against the quantum threat.
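One common way to frame this prioritization, often attributed to Michele Mosca, is to compare how long data must stay secret plus how long migration will take against the time remaining until quantum attacks are practical. The sketch below applies that rule to a made-up inventory. Every system name and number is hypothetical, and the five-year horizon is an assumption for illustration, not a prediction.

```python
from dataclasses import dataclass

@dataclass
class System:
    name: str
    secrecy_years: float    # how long its data must remain confidential
    migration_years: float  # estimated time needed to move it to PQC

def exposure(system: System, years_until_threat: float) -> float:
    """Positive values mean data could still be sensitive when the threat arrives."""
    return system.secrecy_years + system.migration_years - years_until_threat

def prioritize(systems, years_until_threat: float):
    """Return at-risk systems, most exposed first."""
    at_risk = [s for s in systems if exposure(s, years_until_threat) > 0]
    return sorted(at_risk, key=lambda s: exposure(s, years_until_threat), reverse=True)

# Hypothetical inventory and threat horizon.
inventory = [
    System("web-sessions", secrecy_years=0.1, migration_years=1),
    System("code-signing", secrecy_years=10, migration_years=2),
    System("medical-archive", secrecy_years=25, migration_years=3),
]
for system in prioritize(inventory, years_until_threat=5):
    print(system.name, exposure(system, 5))
```

Long-lived data ranks first because traffic recorded today can be stored and decrypted later, once a capable quantum computer exists.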

Impact on common protocols

The shift to PQC will have a ripple effect across many common protocols that rely on cryptography. Transport Layer Security (TLS/SSL), the foundation of secure web communication (HTTPS), will need to be updated to support the new algorithms. This will require changes to web servers, browsers, and operating systems.

Secure Shell (SSH), used for secure remote access, will also require updates. Similarly, Virtual Private Networks (VPNs) will need to incorporate PQC algorithms to maintain their security. The impact isn’t uniform; some protocols are more easily adapted than others. Protocols designed with crypto-agility in mind will be simpler to upgrade.
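Crypto-agility in practice usually means that each message or connection names the algorithm it uses, and the code looks the algorithm up in a registry instead of hard-coding it. The sketch below shows the pattern with HMAC variants from the Python standard library as stand-ins, since the standard library has no PQC algorithms. The registry contents and function names are illustrative assumptions.

```python
import hashlib
import hmac

# Registry of available algorithms, keyed by the identifier stored with each message.
MAC_ALGORITHMS = {
    "hmac-sha256": hashlib.sha256,
    "hmac-sha512": hashlib.sha512,
}
DEFAULT_ALGORITHM = "hmac-sha256"  # the single place to change when policy moves on

def protect(key: bytes, message: bytes, algorithm: str = DEFAULT_ALGORITHM) -> dict:
    """Authenticate a message and record which algorithm was used."""
    tag = hmac.new(key, message, MAC_ALGORITHMS[algorithm]).hexdigest()
    return {"alg": algorithm, "message": message, "tag": tag}

def verify(key: bytes, envelope: dict) -> bool:
    """Check a message using whichever registered algorithm it names."""
    digest = MAC_ALGORITHMS.get(envelope["alg"])
    if digest is None:
        return False  # unknown or retired algorithm
    expected = hmac.new(key, envelope["message"], digest).hexdigest()
    return hmac.compare_digest(expected, envelope["tag"])

old = protect(b"shared-key", b"hello")
new = protect(b"shared-key", b"hello", algorithm="hmac-sha512")
print(verify(b"shared-key", old), verify(b"shared-key", new))  # True True
```

Adding a PQC algorithm to a system built this way means registering one new entry and changing the default, while older messages remain verifiable until their algorithm is retired.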

For system administrators and developers, this means understanding the implications of PQC for their applications and infrastructure. It requires careful planning, testing, and deployment. Ignoring this issue could leave systems vulnerable to quantum attacks. The complexity lies in ensuring backward compatibility and interoperability.

The good news is that work is already underway to integrate PQC into existing protocols. For example, the Internet Engineering Task Force (IETF) is actively developing standardized PQC profiles for TLS 1.3. However, widespread adoption will take time and coordination.

Current implementations

Several libraries and tools are emerging to facilitate the implementation of PQC algorithms. Open Quantum Safe (OQS) is a project providing open-source implementations of various PQC algorithms, including those standardized by NIST. This allows developers to experiment with and integrate PQC into their applications.
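As a starting point for experimenting, the following sketch runs one key encapsulation round trip with the OQS Python bindings (liboqs-python, imported as `oqs`). It assumes the bindings and the underlying liboqs library are installed. The algorithm name `"ML-KEM-768"` is also an assumption about the installed build: older builds expose the same scheme as `"Kyber768"`. The wrapper function is illustrative and returns `None` when the bindings are missing.

```python
def kem_roundtrip(algorithm: str = "ML-KEM-768"):
    """Run one key encapsulation; returns None if liboqs-python is not installed."""
    try:
        import oqs  # liboqs-python bindings from the Open Quantum Safe project
    except ImportError:
        return None
    with oqs.KeyEncapsulation(algorithm) as receiver, \
         oqs.KeyEncapsulation(algorithm) as sender:
        public_key = receiver.generate_keypair()                     # receiver publishes this
        ciphertext, sender_secret = sender.encap_secret(public_key)  # sender encapsulates
        receiver_secret = receiver.decap_secret(ciphertext)          # receiver decapsulates
    return sender_secret == receiver_secret

print(kem_roundtrip())
```

Both sides end up with the same shared secret without it ever crossing the wire, which is the role a Diffie-Hellman exchange plays in classical protocols.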

Performance characteristics of these implementations vary. Lattice-based cryptography, while secure, generally has higher computational costs and larger key sizes than traditional algorithms. Optimizing these implementations for different platforms and use cases is an ongoing effort. Testing and benchmarking are crucial to ensure acceptable performance.
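To put the size difference in concrete terms, the sketch below compares the bytes exchanged for a classical X25519 key exchange with a hybrid of X25519 and ML-KEM-768. The ML-KEM-768 sizes are taken from FIPS 203 and the X25519 size from RFC 7748. The function name is illustrative, and the totals cover key-exchange material only, not certificates or protocol framing.

```python
# Sizes in bytes.
X25519_SHARE = 32                     # public value sent by each side
ML_KEM_768_ENCAPSULATION_KEY = 1184   # sent by the client
ML_KEM_768_CIPHERTEXT = 1088          # sent back by the server

def key_exchange_bytes(hybrid: bool) -> dict:
    """Bytes each side sends for the key exchange alone."""
    client = X25519_SHARE + (ML_KEM_768_ENCAPSULATION_KEY if hybrid else 0)
    server = X25519_SHARE + (ML_KEM_768_CIPHERTEXT if hybrid else 0)
    return {"client": client, "server": server, "total": client + server}

print(key_exchange_bytes(hybrid=False))  # {'client': 32, 'server': 32, 'total': 64}
print(key_exchange_bytes(hybrid=True))   # {'client': 1216, 'server': 1120, 'total': 2336}
```

A couple of kilobytes per handshake is negligible on most links, but it can matter for constrained devices or protocols that must fit into a single packet.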

The OQS project is the primary resource for now. Cloud providers are also starting to offer PQC support, giving developers early access to these tools before they become standard in mainstream SDKs.

It’s important to note that PQC implementations are still relatively new and evolving. Thorough testing and validation are critical to ensure their security and reliability. As the field matures, we can expect to see more refined and optimized implementations become available.

Resources

The NIST Post-Quantum Cryptography website provides details on the standardization process and the selected algorithms.

Academic papers and industry articles offer further insights into the technical aspects of PQC. Exploring resources from organizations like the Internet Engineering Task Force (IETF) can provide updates on protocol standardization efforts. HackerDesk also provides ongoing coverage of cybersecurity topics, including quantum-resistant cryptography.

Here are some additional links to explore:

- NIST PQC Website:
