The Accelerating Timeline: AI’s Role in Cyberattacks by 2026

The integration of artificial intelligence into cybersecurity is no longer a distant prospect; it’s happening now, and the pace is accelerating. Both attackers and defenders are experimenting with machine learning, but the offensive applications are developing with alarming speed. The Department of Defense (DoD) and CISA report that attackers are already using machine learning to bypass standard firewalls. By 2026, we can expect a landscape dramatically different from today’s.

Currently, many attacks rely on relatively simple, rule-based techniques. But this is changing. AI is enabling attackers to automate tasks, scale their operations, and evade traditional security measures. The accessibility of AI tools is a major factor. You don’t need a team of PhDs to leverage powerful machine learning algorithms; pre-trained models and cloud-based services are lowering the barrier to entry for malicious actors. The DoD’s July 2025 Cybersecurity Risk Management Tailoring Guide highlights this accessibility as a primary concern.

The real danger is that these tools are now easy to use. Expect a surge in automated attacks, more personalized phishing campaigns, and malware that adapts and evolves in real time. We’re moving from a world where attacks are crafted by individuals to one where they’re generated by algorithms. It’s a fundamental shift in the nature of cyber warfare.

Looking ahead to 2026, the focus won’t be on if AI will be used in attacks, but how effectively. The challenge isn’t about stopping AI itself, but about defending against the consequences of its application. This requires a proactive and adaptive security posture, one that anticipates and responds to the evolving threat landscape. Ignoring this reality is simply not an option.

Automated Reconnaissance and Vulnerability Discovery: The Rise of the AI Scout

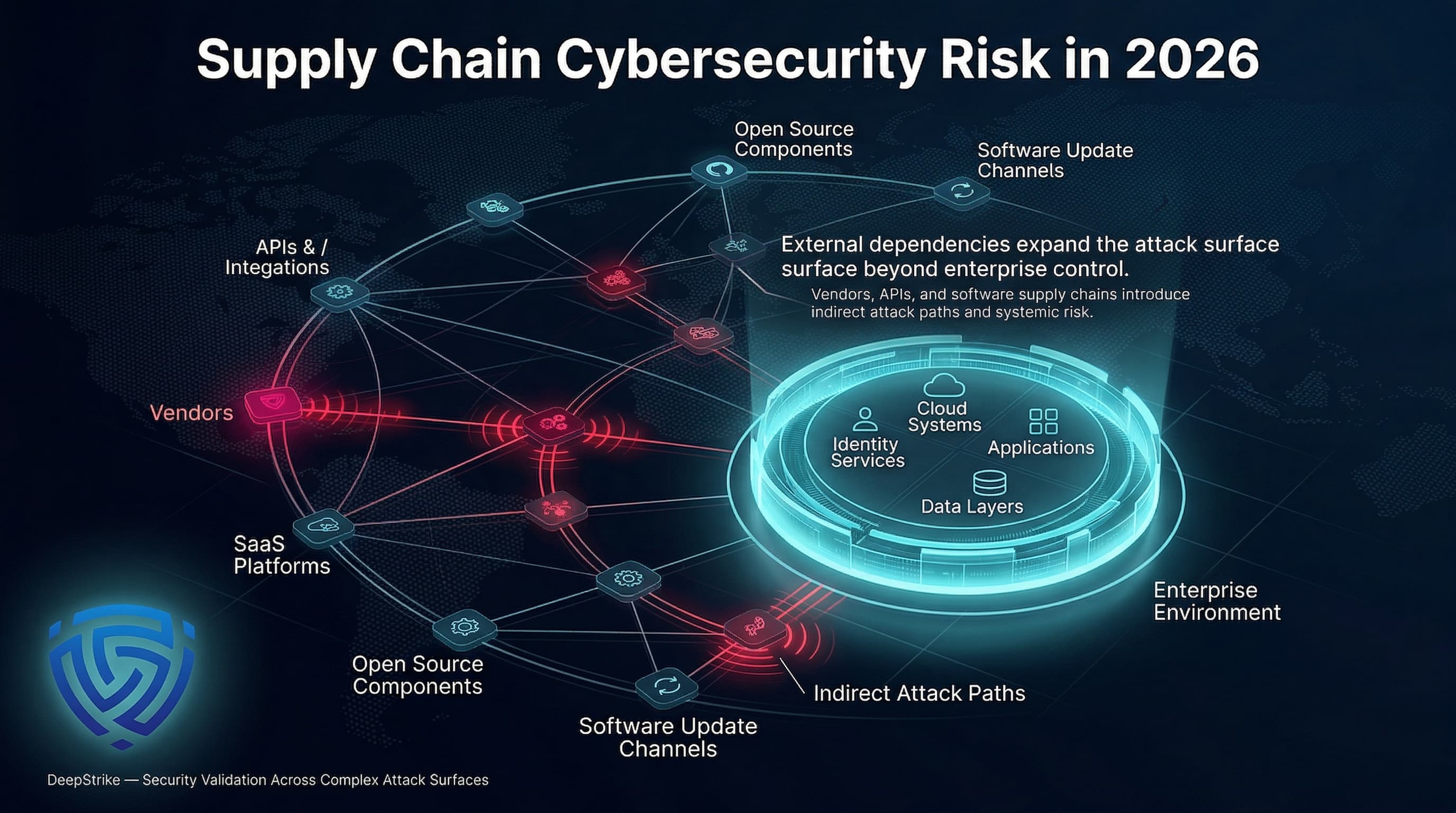

Traditionally, reconnaissance – the initial phase of an attack – has been a manual and time-consuming process. Attackers would use tools like Nmap for port scanning, Nessus for vulnerability scanning, and various OSINT techniques to gather information about their targets. AI is automating all of this, making it faster, more thorough, and less detectable. This is where the concept of the “AI Scout” comes into play: a self-directed system that continuously probes for weaknesses.

Machine learning algorithms are being trained to identify zero-day vulnerabilities – flaws that are unknown to the vendor. These algorithms scan code and network traffic for anomalies that suggest a weakness. They can also predict potential attack vectors by identifying patterns and correlations that humans might miss. This predictive capability is particularly concerning because it allows attackers to proactively target systems before vulnerabilities are even publicly disclosed.

What sets AI-driven reconnaissance apart is its adaptability. Unlike traditional scanners that follow a pre-defined script, AI can adjust its tactics based on the target’s defenses. If a port scan is detected and blocked, the AI can switch to a different technique or attempt to disguise its activity as legitimate traffic. This makes it much harder to detect and prevent. The DoD report specifically addresses the need to counter these adaptive reconnaissance techniques.
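To make the defensive side of this concrete, here is a minimal Python sketch of the kind of threshold-based scan detector that an adaptive scanner tries to slip past. The function name `detect_scanners`, the event tuple layout, and the default thresholds are assumptions chosen for illustration; they are not taken from any product or from the DoD guidance.

```python
from collections import defaultdict, deque


def detect_scanners(events, window_seconds=60, port_threshold=20):
    """Return source IPs that touched at least `port_threshold` distinct
    destination ports within any `window_seconds` span.

    `events` is an iterable of (timestamp_seconds, source_ip, dest_port)
    tuples, assumed to be sorted by timestamp.
    """
    recent = defaultdict(deque)  # source_ip -> deque of (timestamp, port)
    flagged = set()
    for ts, src, port in events:
        window = recent[src]
        window.append((ts, port))
        # Drop events that have fallen out of the time window.
        while window and ts - window[0][0] > window_seconds:
            window.popleft()
        # Count distinct ports still inside the window.
        if len({p for _, p in window}) >= port_threshold:
            flagged.add(src)
    return flagged


# A fast scan: 25 ports in 25 seconds from one source.
fast_scan = [(t, "10.0.0.5", 1 + t) for t in range(25)]
# A "low and slow" scan: the same 25 ports, one probe every 10 seconds.
slow_scan = [(t * 10, "10.0.0.7", 1 + t) for t in range(25)]

print(detect_scanners(fast_scan))  # the fast scanner is flagged
print(detect_scanners(slow_scan))  # the slow scanner stays under the threshold
```

The slow scan never has more than seven distinct ports inside the 60-second window, so a fixed threshold misses it. That gap is exactly what an adaptive scanner exploits, and it is why static thresholds usually need to be paired with longer-horizon behavioral baselines.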

Consider an attacker using an AI-powered OSINT tool. Instead of manually searching social media and public databases, the AI can automatically collect and analyze vast amounts of data to build a detailed profile of employees, identify potential targets, and uncover sensitive information. This information can then be used to craft highly targeted phishing emails or social engineering attacks. The speed and scale of this process are simply unmatched by human capabilities.

- Nmap: A popular network scanning tool.

- Nessus: A widely used vulnerability scanner.

- Shodan: A search engine for internet-connected devices.

AI-Powered Phishing and Social Engineering: Beyond the Nigerian Prince

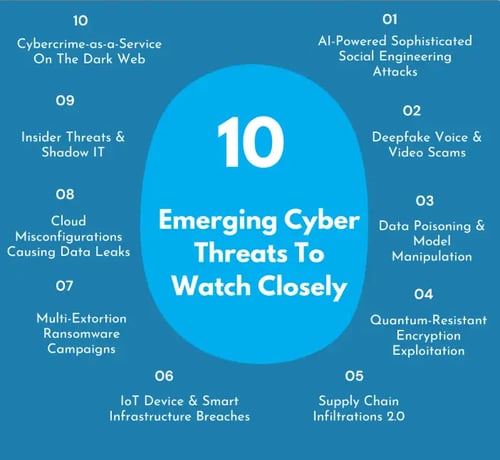

Phishing remains one of the most successful attack vectors, and AI is poised to make it far more effective. Forget the poorly written emails from a supposed Nigerian prince; AI can generate highly personalized and convincing phishing messages tailored to individual targets. Attackers use these models to exploit how people make decisions under pressure.

AI algorithms can analyze a target’s online activity, social media profiles, and even their communication patterns to create a phishing email that appears to come from a trusted source. The message might reference a recent purchase, a shared connection, or a topic that the target is known to be interested in. This level of personalization dramatically increases the likelihood that the target will click on a malicious link or open a malicious attachment.

The rise of deepfakes adds another layer of complexity. Attackers can use AI to create realistic audio and video recordings of individuals, impersonating colleagues, superiors, or even family members. These deepfakes can be used to manipulate targets into divulging sensitive information or transferring funds. The potential for damage is enormous, and detecting these attacks is becoming increasingly difficult.

Traditional security awareness training, while still important, will become less effective against these sophisticated attacks. Simply telling employees to “be careful” is not enough when they are facing messages that are specifically crafted to exploit their individual vulnerabilities. We need to develop new training methods that focus on critical thinking, skepticism, and the ability to identify subtle cues that might indicate a phishing attempt.
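Some cues do not depend on how well a message is written, which makes them useful even against fluent, AI-generated text. The following is a minimal Python sketch of three such structural checks: a Reply-To domain that differs from the From domain, pressure phrases, and a link whose visible text names one host while the URL points to another. The function names, the phrase list, and the input shape are all illustrative assumptions, and a real mail filter would need far more than this.

```python
from email.utils import parseaddr
from urllib.parse import urlparse

# Illustrative phrase list; a real deployment would tune this.
URGENCY_PHRASES = ("urgent", "immediately", "within 24 hours", "account suspended")


def domain_of(address):
    """Return the lowercase domain part of an email address, or ''."""
    _, addr = parseaddr(address)
    return addr.rsplit("@", 1)[-1].lower() if "@" in addr else ""


def phishing_cues(from_header, reply_to_header, body, links):
    """Return a list of human-readable cues found in a message.

    `links` is a list of (visible_text, href) pairs extracted elsewhere.
    """
    cues = []
    from_domain = domain_of(from_header)
    reply_domain = domain_of(reply_to_header) if reply_to_header else from_domain
    if reply_domain != from_domain:
        cues.append(f"Reply-To domain {reply_domain} differs from From domain {from_domain}")

    lowered = body.lower()
    for phrase in URGENCY_PHRASES:
        if phrase in lowered:
            cues.append(f"pressure phrase: {phrase!r}")

    for text, href in links:
        href_host = (urlparse(href).hostname or "").lower()
        # Parse the visible text as a URL only if it looks like a hostname.
        candidate = text if "://" in text else "//" + text
        text_host = (urlparse(candidate).hostname or "").lower()
        if " " not in text and "." in text_host and text_host != href_host:
            cues.append(f"link text shows {text_host} but points to {href_host}")
    return cues


for cue in phishing_cues(
    '"IT Support" <it@corp.example>',
    "helpdesk@corp-example.net",
    "Your account suspended. Act immediately.",
    [("corp.example/reset", "https://evil.test/reset")],
):
    print(cue)
```

Checks like these are cheap to run and easy to explain to employees, which is part of their value in training: they give people something specific to look for beyond “does this read like a scam?”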

The Evolution of Malware: From Polymorphism to Generative Adversarial Networks

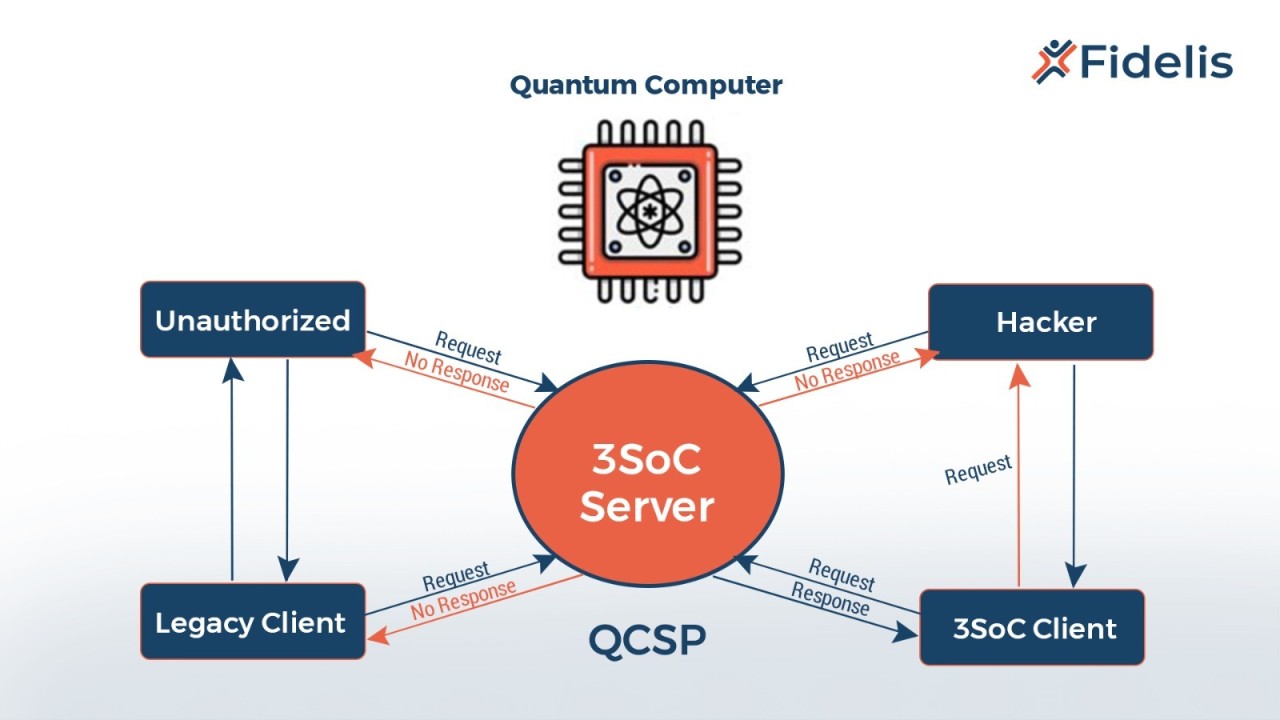

Traditional malware relies on techniques like polymorphism – the ability to change its code to evade detection by signature-based antivirus software. AI takes this concept to a whole new level. Generative Adversarial Networks (GANs) allow attackers to create malware that constantly evolves, making it incredibly difficult for security tools to keep up. A GAN consists of two neural networks, a generator and a discriminator.

The generator creates new variants of the malware, while the discriminator attempts to distinguish between the malicious code and legitimate software. This creates an adversarial loop where the generator continuously improves its ability to evade detection. The DoD's July 2025 guide says that signature-based detection is now useless against code that rewrites itself.

This isn't just theoretical. Researchers have already demonstrated the feasibility of using GANs to create malware that can evade state-of-the-art antivirus engines. The malware can adapt its code in real time, based on the defenses it encounters, which makes it resilient and difficult to analyze. The speed of evolution is the key differentiator: traditional polymorphic malware changes slowly, while GAN-generated malware can evolve rapidly.

Beyond evasion, AI can also be used to improve the effectiveness of malware. AI algorithms can analyze system behavior to identify the most vulnerable targets and the optimal methods for spreading the infection. They can also automate the process of exploiting vulnerabilities and escalating privileges. This leads to more targeted and impactful attacks.

Automated Penetration Testing: AI as the Red Team

AI also has uses on the defensive side, particularly in the realm of penetration testing. AI-powered tools can automate many of the tasks traditionally performed by human penetration testers, such as vulnerability scanning, exploit development, and report generation. This significantly speeds up the pentesting process and reduces costs.

These tools can identify vulnerabilities, attempt to exploit them, and generate detailed reports outlining the findings. They can also prioritize vulnerabilities based on their severity and potential impact. While these tools aren’t meant to replace human penetration testers entirely, they can augment their capabilities and allow them to focus on more complex tasks.
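Prioritizing by severity and potential impact can be as simple as a weighted sort. The sketch below is one hypothetical way to do it in Python: the `Finding` fields, the 0.0 to 1.0 `asset_weight`, and the 1.5 multiplier for confirmed exploitation are all assumptions made for illustration, not a scoring scheme used by any of the tools discussed here.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float      # e.g. a CVSS-style base score from 0.0 to 10.0
    asset_weight: float  # hypothetical 0.0-1.0 business-impact weight
    exploited: bool      # whether the tool confirmed exploitation


def priority(finding):
    """Combine severity and impact; boost findings that were actually exploited."""
    score = finding.severity * finding.asset_weight
    return score * 1.5 if finding.exploited else score


def triage(findings):
    """Return findings ordered from highest to lowest priority."""
    return sorted(findings, key=priority, reverse=True)


findings = [
    Finding("outdated TLS on test box", 9.8, 0.2, False),
    Finding("SQL injection in billing app", 6.5, 0.9, True),
    Finding("weak admin password policy", 7.5, 0.8, False),
]
for f in triage(findings):
    print(f"{priority(f):5.2f}  {f.name}")
```

Note that the highest raw severity ends up last here because it sits on a low-value asset. Whether that trade-off is right is a judgment call, which is one reason human review of the ranking still matters.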

However, the use of AI in penetration testing also raises some important questions. Can we trust the results generated by these tools? Are they prone to false positives or false negatives? And what about the ethical implications of using AI to automate attacks, even in a controlled environment? Human oversight is crucial to ensure the accuracy and validity of the findings.

I anticipate significant innovation in this area over the next few years. We’ll see the development of more sophisticated AI-powered pentesting tools that can adapt to different environments and identify a wider range of vulnerabilities. This will be a critical component of a proactive security strategy.

AI-Powered Pen Testing Tools (2024)

- Arthur - An automated penetration testing platform that discovers and validates vulnerabilities in web applications. It aims to streamline the initial reconnaissance and vulnerability identification phases of a pentest.

- Nuclei - A fast and customizable vulnerability scanner based on templates. While not solely AI-driven, Nuclei leverages YAML-based templates that can be created and shared, and its speed allows for rapid scanning and identification of potential weaknesses.

- Detectify - A continuous security testing platform that uses crowdsourced vulnerability research and automated scanning. It incorporates machine learning to prioritize findings and reduce false positives.

- Probely - An automated penetration testing tool focusing on web application security. Probely uses a combination of techniques, including automated vulnerability scanning and intelligent crawling, to identify and exploit vulnerabilities.

- StackHawk - A dynamic application security testing (DAST) tool designed for developers. StackHawk integrates into the CI/CD pipeline and uses automated scanning to find vulnerabilities early in the development process.

- Bright Security (formerly NeuraLegion) - An automated DAST solution that focuses on finding and validating vulnerabilities in web applications. It utilizes machine learning to improve scan accuracy and reduce noise.

- Lattice - A platform offering both managed penetration testing and an automated attack surface management solution. It uses automated discovery and validation to identify exposed assets and potential vulnerabilities.

Defensive Strategies: Machine Learning for Threat Detection and Response

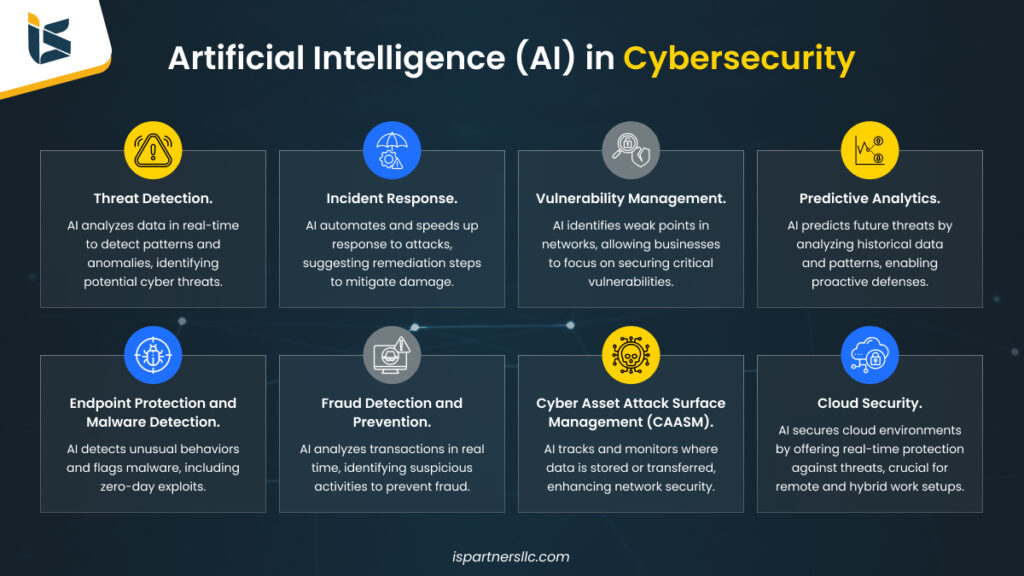

Defending against AI-powered attacks requires a shift in mindset. Traditional security measures, based on signatures and predefined rules, are no longer sufficient. We need to leverage machine learning to detect anomalous behavior, identify patterns of malicious activity, and automate incident response. CISA’s guidance emphasizes a layered security approach, but that’s not new; the implementation needs to be smarter.

Anomaly detection algorithms can identify deviations from normal network traffic patterns, system behavior, and user activity. This can help to detect attacks that might otherwise go unnoticed. Behavioral analysis can be used to profile users and systems, identifying suspicious actions that might indicate a compromise. For example, an algorithm might flag a user who is suddenly accessing files they don’t normally access.
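The file-access example can be sketched in a few lines of Python. This is a deliberately simple baseline-and-compare approach, not a trained model: it records which paths each user has touched historically and flags users whose activity today is mostly new paths. The function names, the `min_events` floor, and the 0.6 novelty threshold are assumptions for illustration.

```python
from collections import defaultdict


def build_baseline(history):
    """Map each user to the set of paths they have accessed before.

    `history` is an iterable of (user, path) pairs.
    """
    baseline = defaultdict(set)
    for user, path in history:
        baseline[user].add(path)
    return baseline


def flag_unusual_access(baseline, todays_events, min_events=5, novelty_threshold=0.6):
    """Return {user: novelty_ratio} for users whose accesses today are mostly
    to paths absent from their baseline."""
    per_user = defaultdict(list)
    for user, path in todays_events:
        per_user[user].append(path)

    flagged = {}
    for user, paths in per_user.items():
        if len(paths) < min_events:
            continue  # too little activity to judge
        known = baseline.get(user, set())
        novel = sum(1 for p in paths if p not in known)
        ratio = novel / len(paths)
        if ratio >= novelty_threshold:
            flagged[user] = ratio
    return flagged


history = [("alice", "/hr/a"), ("alice", "/hr/b"), ("bob", "/eng/x")]
today = (
    [("alice", f"/finance/q{i}") for i in range(4)]
    + [("alice", "/hr/a")]
    + [("bob", "/eng/x")] * 5
)
print(flag_unusual_access(build_baseline(history), today))
```

A production system would replace the set lookup with a statistical or learned model and account for legitimate changes such as role moves, but the shape is the same: learn what normal looks like per user, then score deviations.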

AI can also be used to automate incident response. When a threat is detected, AI can automatically isolate affected systems, block malicious traffic, and initiate remediation procedures. This can significantly reduce the time it takes to contain an attack and minimize the damage. The key is to focus on detecting behavior, not just signatures. A system that can identify malicious activity based on its actions, rather than relying on known patterns, will be much more resilient.
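As a rough illustration of automated response, the Python sketch below turns an alert into an ordered list of containment steps, acting automatically only when the detector’s confidence is high and otherwise routing the alert to a human. Everything here is hypothetical: the alert keys, the `auto_threshold` default, and the step wording. The function only plans steps; it does not call any real firewall or EDR API.

```python
def plan_response(alert, auto_threshold=0.9):
    """Return ordered response steps for an alert.

    `alert` is a dict with the hypothetical keys 'host', 'source_ip',
    and 'confidence' (a float between 0.0 and 1.0).
    """
    steps = []
    if alert["confidence"] >= auto_threshold:
        # High confidence: contain first, then remediate.
        steps.append(f"isolate host {alert['host']}")
        steps.append(f"block traffic from {alert['source_ip']}")
        steps.append(f"open remediation ticket for {alert['host']}")
    else:
        # Lower confidence: keep a human in the loop.
        steps.append(f"queue alert on {alert['host']} for analyst review")
    return steps


alert = {"host": "web-01", "source_ip": "203.0.113.7", "confidence": 0.95}
for step in plan_response(alert):
    print(step)
```

Separating the plan from its execution makes it possible to log, review, or dry-run the steps before anything touches production, which matters when a false positive could take a critical system offline.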

Building effective machine learning models requires access to large amounts of data. Organizations need to collect and analyze data from a variety of sources, including network logs, system logs, and security alerts. They also need to invest in the expertise to build and maintain these models. This is a significant challenge for many organizations, but it’s essential for staying ahead of the evolving threat landscape.

Machine Learning Algorithms for Threat Detection: A Comparative Analysis (Projected 2026)

| Algorithm Type | Accuracy Potential | Deployment Speed | Interpretability | Data Requirements |
|---|---|---|---|---|
| Supervised Learning | High, particularly with labeled datasets. Potential for >95% accuracy in specific, well-defined threat categories. | Generally fast, especially after training. Real-time detection is achievable. | Moderate. Feature importance can be assessed, but understanding complex models can be challenging. | Requires large, accurately labeled datasets for training. Labeling can be resource-intensive. |
| Unsupervised Learning | Moderate to High, effective at anomaly detection. Accuracy varies significantly based on the complexity of the network and the subtlety of attacks. | Fast for anomaly scoring, but may require further investigation to confirm true positives. | Low. Identifying *why* an anomaly is flagged is difficult without further analysis. | Can operate on unlabeled data, reducing the need for extensive manual labeling. |
| Reinforcement Learning | Potentially Very High, especially in dynamic environments. Adapts to evolving threats over time. | Slower initially due to the training process. Performance improves with continued interaction. | Low. Understanding the learned policies can be complex. | Requires a simulated environment or significant real-world data for training and reward function definition. |
| Deep Learning (Neural Networks) | Very High, capable of identifying complex patterns. Accuracy dependent on network architecture and data quality. | Can be slow during initial training, but optimized models can offer fast inference speeds. | Very Low. 'Black box' nature makes it difficult to understand decision-making processes. | Requires extremely large datasets for effective training. Computationally intensive. |
| Hybrid Approaches (e.g., combining Supervised & Unsupervised) | Potentially Highest, leveraging the strengths of multiple algorithms. | Variable, depending on the specific combination and implementation. | Moderate, potentially improved by combining interpretable and less interpretable methods. | Combines the data requirements of the constituent algorithms. |
| Generative Adversarial Networks (GANs) | High for detecting novel attacks by learning the distribution of normal network behavior. | Moderate to high; detection speed depends on the generator and discriminator network complexity. | Low to Moderate. Understanding the features learned by GANs can be challenging. | Requires significant data to train both the generator and discriminator networks. |

Illustrative comparison. The ratings are qualitative projections rather than measured results, so verify them against your own data and environment before relying on them.

The AI Arms Race: Preparing for a World of Constant Adaptation

The fight against AI-powered cyberattacks is not a one-time battle; it’s an ongoing arms race. Attackers will continue to develop new and more sophisticated techniques, and defenders will need to constantly adapt to stay ahead. This requires a commitment to continuous learning, threat intelligence sharing, and investment in AI security research.

The DoD report highlights the importance of building resilient systems that can withstand AI-powered attacks. This means designing systems with multiple layers of defense, incorporating redundancy, and implementing robust monitoring and logging capabilities. It also means embracing a “zero trust” security model, where no user or device is automatically trusted, regardless of its location or identity.

I worry that many organizations are simply not prepared for the scale of this challenge. They lack the resources, the expertise, and the strategic vision to effectively defend against AI-powered attacks. The focus needs to shift from prevention to detection and response. We need to assume that attacks will happen and focus on minimizing the damage.

Threat intelligence sharing is also crucial. Organizations need to share information about emerging threats and vulnerabilities with each other. This will help to improve the collective defense posture and reduce the risk of widespread attacks. The future of cybersecurity depends on our ability to collaborate and adapt.
