The shift in pentesting

Penetration testing has always been a game of cat and mouse, but the rules are changing. For years, security professionals relied on manual testing, supplemented by automated vulnerability scanners. We’re now seeing a significant shift towards incorporating artificial intelligence into the process, a move driven by several converging factors. The most pressing is the widening skills gap in cybersecurity – there simply aren’t enough qualified pentesters to keep pace with the growing number of threats.

The expanding attack surface, fueled by cloud adoption and the proliferation of IoT devices, adds another layer of complexity. Organizations need to test more frequently and across a wider range of systems than ever before. Speed is also becoming critical; traditional pentests can take weeks or even months to complete, while attackers operate on much shorter timelines. AI promises to accelerate the process, delivering faster insights and reducing risk.

AI in security is mostly hype right now. We shouldn't expect these tools to replace humans, but they can handle the grunt work so we can focus on complex logic flaws. I want to look at what actually works today versus what vendors are promising in their slide decks.

How AI differs from basic automation

The terms “automated pentesting” and “AI-powered pentesting” are often used interchangeably, but they represent fundamentally different approaches. Automated pentesting typically involves using tools to scan for known vulnerabilities, enumerate network services, and perform basic attacks. These tools, while valuable, operate based on predefined rules and signatures. They’re excellent at identifying common weaknesses, but they struggle with novel or complex scenarios.
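To make the contrast concrete, here is a minimal sketch of the signature-matching style described above. It assumes a hypothetical three-rule signature set; the rule names and regexes are illustrative and not taken from any real scanner. The rules catch the exact payload shape they were written for and miss a trivially rewritten variant of the same attack.

```python
import re

# Hypothetical signature set: each rule is a fixed pattern for one known payload shape.
SIGNATURES = {
    "sqli-tautology": re.compile(r"'\s*or\s+1\s*=\s*1", re.IGNORECASE),
    "sqli-comment": re.compile(r"'\s*--"),
    "xss-script-tag": re.compile(r"<script\b", re.IGNORECASE),
}


def match_signatures(payload: str) -> list[str]:
    """Return the name of every signature the payload matches, in rule order."""
    return [name for name, pattern in SIGNATURES.items() if pattern.search(payload)]


print(match_signatures("' OR 1=1--"))  # ['sqli-tautology']
print(match_signatures("' OR 2>1"))    # []: same idea, new shape, no rule fires
```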

AI-driven pentesting, on the other hand, aims to reason about vulnerabilities, adapt to defenses, and potentially discover zero-day exploits. This is achieved through the application of machine learning (ML) techniques, allowing the system to learn from data, identify patterns, and make informed decisions. As HackerSec.com points out in their recent article on automated vs. AI pentests, the primary benefit of automation is scalability – the ability to test larger and more complex environments more efficiently.

However, true AI goes beyond simply scaling up existing processes. It attempts to replicate the thought process of a human pentester, exploring different attack vectors, evading security controls, and uncovering hidden vulnerabilities. While many tools currently marketed as "AI" are actually sophisticated forms of automation, the potential for genuine AI-driven pentesting is significant. It's about moving from reactive vulnerability detection to proactive threat discovery.

- Automated Pentesting: Follows rigid rules and signatures.

- AI-Driven Pentesting: Uses machine learning to reason about vulnerabilities and adapt to defenses.

- Scalability: Automation excels at testing large environments.

- Novelty: AI has the potential to discover zero-day exploits.
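For comparison, a learned approach fits its decision rule to labeled examples instead of having it hand-written. The sketch below trains a perceptron, about the simplest learning algorithm there is, on a handful of hypothetical payloads described by four hand-picked binary features (quote, SQL keyword, comment marker, comparison operator). Real ML-driven tools use far richer models and far more data; this only illustrates the mechanism.

```python
import re

SQL_KEYWORDS = {"or", "and", "union", "select"}


def features(payload: str) -> list[int]:
    """Map a payload to a small binary feature vector (first entry is a bias term)."""
    tokens = set(re.findall(r"[a-z]+", payload.lower()))
    return [
        1,                                        # bias
        int("'" in payload or '"' in payload),    # contains a quote
        int(bool(tokens & SQL_KEYWORDS)),         # contains an SQL keyword
        int("--" in payload or "#" in payload),   # contains a comment marker
        int(any(ch in payload for ch in "=<>")),  # contains a comparison operator
    ]


def train_perceptron(samples, max_epochs: int = 50) -> list[int]:
    """Classic perceptron: nudge the weights toward every misclassified example."""
    weights = [0] * len(features(""))
    for _ in range(max_epochs):
        mistakes = 0
        for payload, label in samples:  # label: +1 malicious, -1 benign
            x = features(payload)
            if label * sum(w * xi for w, xi in zip(weights, x)) <= 0:
                weights = [w + label * xi for w, xi in zip(weights, x)]
                mistakes += 1
        if mistakes == 0:  # every training example is now on the right side
            break
    return weights


# Hypothetical labeled training data.
TRAINING = [
    ("' OR 1=1--", +1),
    ("admin'--", +1),
    ("' UNION SELECT password FROM users", +1),
    ("john.smith", -1),
    ("O'Brien", -1),
    ("price > 10", -1),
]
LEARNED_WEIGHTS = train_perceptron(TRAINING)


def classify(payload: str) -> str:
    score = sum(w * xi for w, xi in zip(LEARNED_WEIGHTS, features(payload)))
    return "malicious" if score > 0 else "benign"


print(classify("' OR 2>1"))       # malicious: a variant not in the training data
print(classify("rock and roll"))  # malicious: harmless text that contains "and"
```

The trained model flags `' OR 2>1`, a variant that a fixed signature written for `' OR 1=1` would miss, but it also flags the harmless string `rock and roll` because `and` is one of its keyword features. Generalization and false positives come from the same place.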

Automated Pentesting vs. AI Pentesting: A Comparative Overview

| Feature | Automated Pentesting | AI Pentesting |
|---|---|---|
| Vulnerability Discovery | Relies on pre-defined patterns and signatures to identify known vulnerabilities. Coverage is limited to the ruleset it's programmed with. | Uses machine learning to look for both known and potentially unknown (zero-day) vulnerabilities by analyzing patterns and anomalies in system behavior. Aims for broader coverage. |
| False Positive Rate | Often higher, because pattern matching tends to flag benign findings as potential issues. | Can be lower when models learn to distinguish genuine vulnerabilities from normal system activity through contextual analysis, though false positives remain a practical problem. |
| Adaptability | Limited. Requires manual updates to vulnerability databases and rule sets to address new threats. | Greater in principle: models can be retrained on new data to adjust to evolving threats with less manual rule maintenance. |
| Scope of Testing | Often focused on specific attack vectors or pre-defined test cases, limiting the breadth of the assessment. | Can broaden scope dynamically, adjusting the attack surface it explores based on observed system responses and potential entry points. |
| Reporting | Reports typically provide a list of identified vulnerabilities with basic severity ratings and remediation recommendations. | Can generate more detailed and contextualized reports, including risk scores, potential impact assessments, and prioritized remediation steps, often with supporting evidence. |
| Human Intervention | Requires significant human intervention for configuration, analysis of results, and validation of findings. | Aims to reduce manual intervention by automating many aspects of the pentesting process, but still needs expert oversight for complex scenarios. |
| Exploitation Capabilities | Typically limited to exploiting known vulnerabilities with pre-defined exploits. | May attempt exploitation of discovered vulnerabilities, and potentially adapt existing exploits or generate new ones based on identified weaknesses. |

Illustrative comparison. Capabilities vary widely between products; verify details in each vendor's official documentation before relying on it.

Current techniques in the field

Several AI/ML techniques are being applied to penetration testing, each with its own strengths and limitations. Large Language Models (LLMs), like those powering ChatGPT, are proving useful for code analysis and report generation. They can automatically identify potential vulnerabilities in source code, summarize findings, and even suggest remediation steps. The quality of the output depends heavily on the quality of the input and the training data used to build the LLM.
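A common way to apply an LLM to code review is to split source files into chunks that fit the model's context window and wrap each chunk in a focused prompt. The sketch below covers only that preparation step: `chunk_source` and `build_review_prompt` are hypothetical helpers, the 40-line chunk size is an arbitrary assumption, and the call to whichever model API you use is deliberately left out. Line numbers are kept in each chunk so the model's findings can be traced back to the file.

```python
def chunk_source(source: str, max_lines: int = 40) -> list[str]:
    """Split source into chunks of at most max_lines, prefixing each line with its 1-based number."""
    lines = source.splitlines()
    chunks = []
    for start in range(0, len(lines), max_lines):
        block = lines[start:start + max_lines]
        chunks.append("\n".join(f"{start + i + 1}: {text}" for i, text in enumerate(block)))
    return chunks


def build_review_prompt(chunk: str, filename: str) -> str:
    """Wrap one chunk in a narrowly scoped security-review instruction (hypothetical wording)."""
    return (
        f"You are reviewing {filename} for security vulnerabilities.\n"
        "For each suspected issue, give the line number, the weakness type, "
        "and why it may be exploitable. If you are not sure, say so.\n\n"
        "BEGIN CODE\n"
        f"{chunk}\n"
        "END CODE"
    )
```

Asking the model to cite line numbers and admit uncertainty is one way to act on the point above that output quality depends on input quality: it makes every claimed finding cheap for a human to check.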

Reinforcement learning (RL) is being explored for fuzzing, a technique that feeds a program random or malformed inputs to trigger crashes that reveal vulnerabilities. RL algorithms can learn to generate inputs that are more likely to trigger vulnerabilities than purely random ones, making fuzzing more efficient. However, RL-based fuzzing is still in its early stages and requires significant computational resources.
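Full RL fuzzers are complex, but the core idea can be shown with a multi-armed bandit, the simplest reinforcement learning setting: the fuzzer learns which mutation operator tends to produce inputs that reach new code. Everything here is a hypothetical toy. `target` stands in for the program under test, coverage is simulated by returning branch labels rather than using real instrumentation, and the epsilon-greedy policy and reward (1 for new coverage or a new crash, 0 otherwise) are illustrative choices.

```python
import random


def target(data: bytes) -> set:
    """Toy program under test: returns the branches an input reaches, crashes on b'FUZ...'."""
    reached = {"entry"}
    if len(data) > 0 and data[0] == ord("F"):
        reached.add("F")
        if len(data) > 1 and data[1] == ord("U"):
            reached.add("FU")
            if len(data) > 2 and data[2] == ord("Z"):
                raise RuntimeError("simulated crash")
    return reached


def flip_byte(data: bytes, rng: random.Random) -> bytes:
    """Replace one random byte with a random value."""
    if not data:
        return bytes([rng.randrange(256)])
    buf = bytearray(data)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def insert_byte(data: bytes, rng: random.Random) -> bytes:
    """Insert one random byte at a random position."""
    pos = rng.randrange(len(data) + 1)
    return data[:pos] + bytes([rng.randrange(256)]) + data[pos:]


def write_token(data: bytes, rng: random.Random) -> bytes:
    """Write a byte from a small dictionary of 'interesting' values at a random position."""
    buf = bytearray(data)
    pos = rng.randrange(len(buf) + 1)
    token = rng.choice(b"FUZ")  # indexing bytes yields an int
    if pos == len(buf):
        buf.append(token)
    else:
        buf[pos] = token
    return bytes(buf)


def bandit_fuzz(iterations: int = 2000, epsilon: float = 0.1, seed: int = 0):
    rng = random.Random(seed)
    operators = {"flip": flip_byte, "insert": insert_byte, "token": write_token}
    pulls = {name: 0 for name in operators}
    value = {name: 1.0 for name in operators}  # optimistic start so every operator gets tried
    corpus, seen, crashes = [b"A"], set(), set()
    for _ in range(iterations):
        # Epsilon-greedy: usually exploit the best-scoring operator, sometimes explore.
        if rng.random() < epsilon:
            name = rng.choice(list(operators))
        else:
            name = max(operators, key=lambda n: value[n])
        child = operators[name](rng.choice(corpus), rng)
        try:
            reached = target(child)
            reward = 0.0 if reached <= seen else 1.0
            if reward:
                seen |= reached
                corpus.append(child)  # keep inputs that found new branches
        except RuntimeError:
            reward = 0.0 if child in crashes else 1.0
            crashes.add(child)
        pulls[name] += 1
        value[name] += (reward - value[name]) / pulls[name]  # running mean reward
    return pulls, value, seen, crashes
```

Calling `bandit_fuzz()` returns per-operator pull counts, mean rewards, the branches reached, and any crashing inputs; inspecting `pulls` shows where the bandit chose to spend its budget.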

Anomaly detection is already standard for monitoring, but it's useful for pentesting too. By flagging weird traffic patterns or log spikes, it helps us decide where to dig first. I'm skeptical about reinforcement learning's near-term role because it's so resource-heavy, but LLMs are already saving me time on report drafts.
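A simple version of that triage idea: compute a z-score for each endpoint's error count and flag the outliers as the first places to probe. The endpoint names, counts, and the two-standard-deviation threshold are hypothetical; production anomaly detection usually accounts for seasonality and uses more robust statistics.

```python
from statistics import mean, pstdev


def flag_anomalies(counts: dict[str, int], threshold: float = 2.0) -> list[str]:
    """Return endpoints whose count sits more than `threshold` population std devs above the mean."""
    values = list(counts.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []  # all counts identical, nothing stands out
    return sorted(name for name, n in counts.items() if (n - mu) / sigma > threshold)


# Hypothetical HTTP 5xx counts per endpoint from one hour of logs.
errors_per_endpoint = {
    "/login": 3, "/search": 4, "/api/v1/users": 5, "/health": 2,
    "/cart": 4, "/checkout": 3, "/profile": 5, "/export": 60,
}
print(flag_anomalies(errors_per_endpoint))  # ['/export']
```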

The 2026 tool market

The 2026 market is crowded. Some vendors just slapped an AI label on old scanners, while others built their engines around machine learning. StackHawk is a good example of the latter; they use it specifically to map out API surfaces that traditional crawlers usually miss.
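Part of why crawlers miss API endpoints is that nothing links to them. One way to see the gap, assuming you have an OpenAPI 3 document for the service, is to enumerate every operation the spec declares and diff that against what a crawler found. This is not StackHawk's implementation and involves no machine learning; the spec and the crawled set are hypothetical.

```python
# HTTP methods that can appear as operation keys in an OpenAPI 3 Path Item Object.
HTTP_METHODS = {"get", "put", "post", "delete", "options", "head", "patch", "trace"}


def enumerate_operations(spec: dict) -> set[tuple[str, str]]:
    """Return (METHOD, path) pairs for every operation declared in the spec's `paths`."""
    operations = set()
    for path, item in spec.get("paths", {}).items():
        for key in item:
            if key.lower() in HTTP_METHODS:  # skip non-operation keys such as "parameters"
                operations.add((key.upper(), path))
    return operations


spec = {
    "openapi": "3.0.3",
    "info": {"title": "demo", "version": "1.0"},
    "paths": {
        "/users": {"get": {}, "post": {}},
        "/users/{id}": {"parameters": [], "get": {}, "delete": {}},
        "/internal/export": {"get": {}},
    },
}
crawled = {("GET", "/users"), ("GET", "/users/{id}")}
missed = sorted(enumerate_operations(spec) - crawled)
print(missed)  # [('DELETE', '/users/{id}'), ('GET', '/internal/export'), ('POST', '/users')]
```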

Cobalt.io offers a platform that combines automated scanning with human pentester expertise, using AI to prioritize findings and streamline the testing process. Detectify is another player, leveraging crowdsourced security research and AI-powered scanning to identify vulnerabilities in web applications. Bright Security’s platform uses AI to analyze code and identify security risks, providing developers with actionable insights.

Pentera (formerly Pcysys) provides an automated security validation platform that runs AI-powered attack simulations to continuously assess an organization’s security posture. ImmuniWeb offers a suite of application security testing tools, including AI-powered vulnerability scanning and penetration testing services. Finally, Horizon3.ai provides a platform for autonomous penetration testing, using AI to simulate real-world attacks and identify vulnerabilities.

- StackHawk: Runtime application and API security testing with AI-powered discovery.

- Cobalt.io: Automated scanning combined with human expertise and AI prioritization.

- Detectify: Crowdsourced security research and AI-powered vulnerability scanning.

- Bright Security: AI-driven code analysis for identifying security risks.

- Pentera: Automated attack simulations for continuous security validation.

- ImmuniWeb: AI-powered vulnerability scanning and penetration testing services.

- Horizon3.ai: Autonomous penetration testing platform.

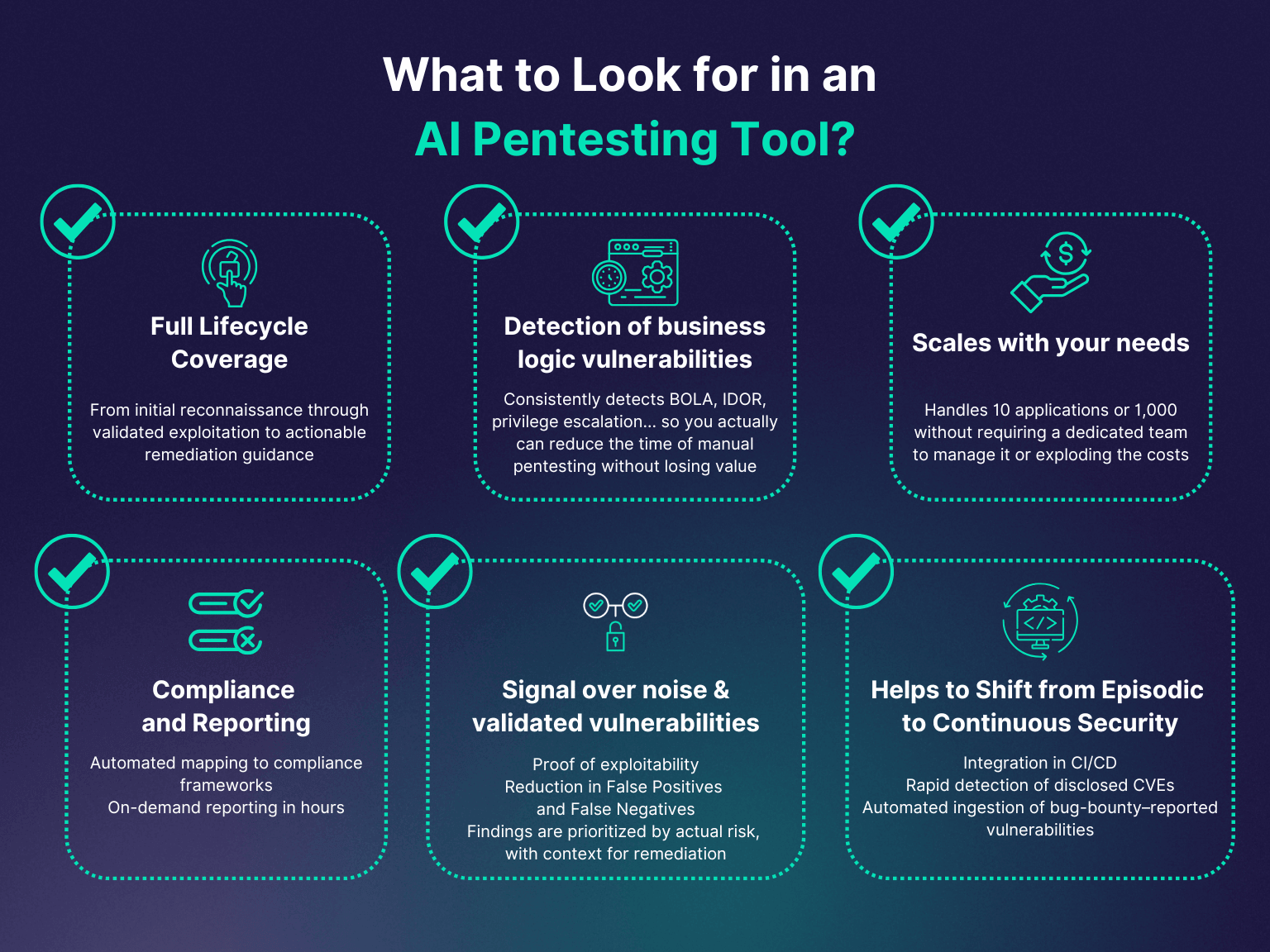

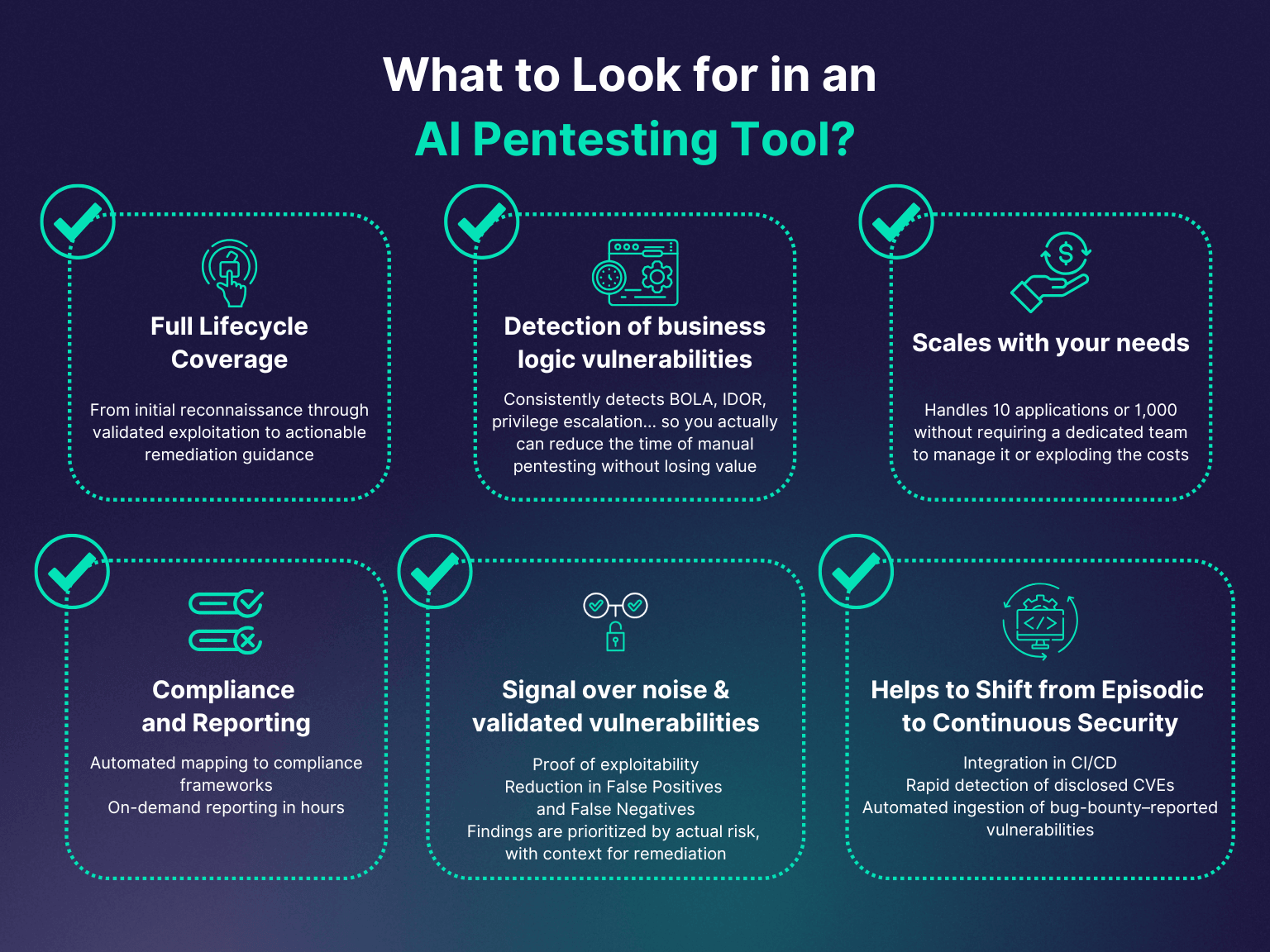

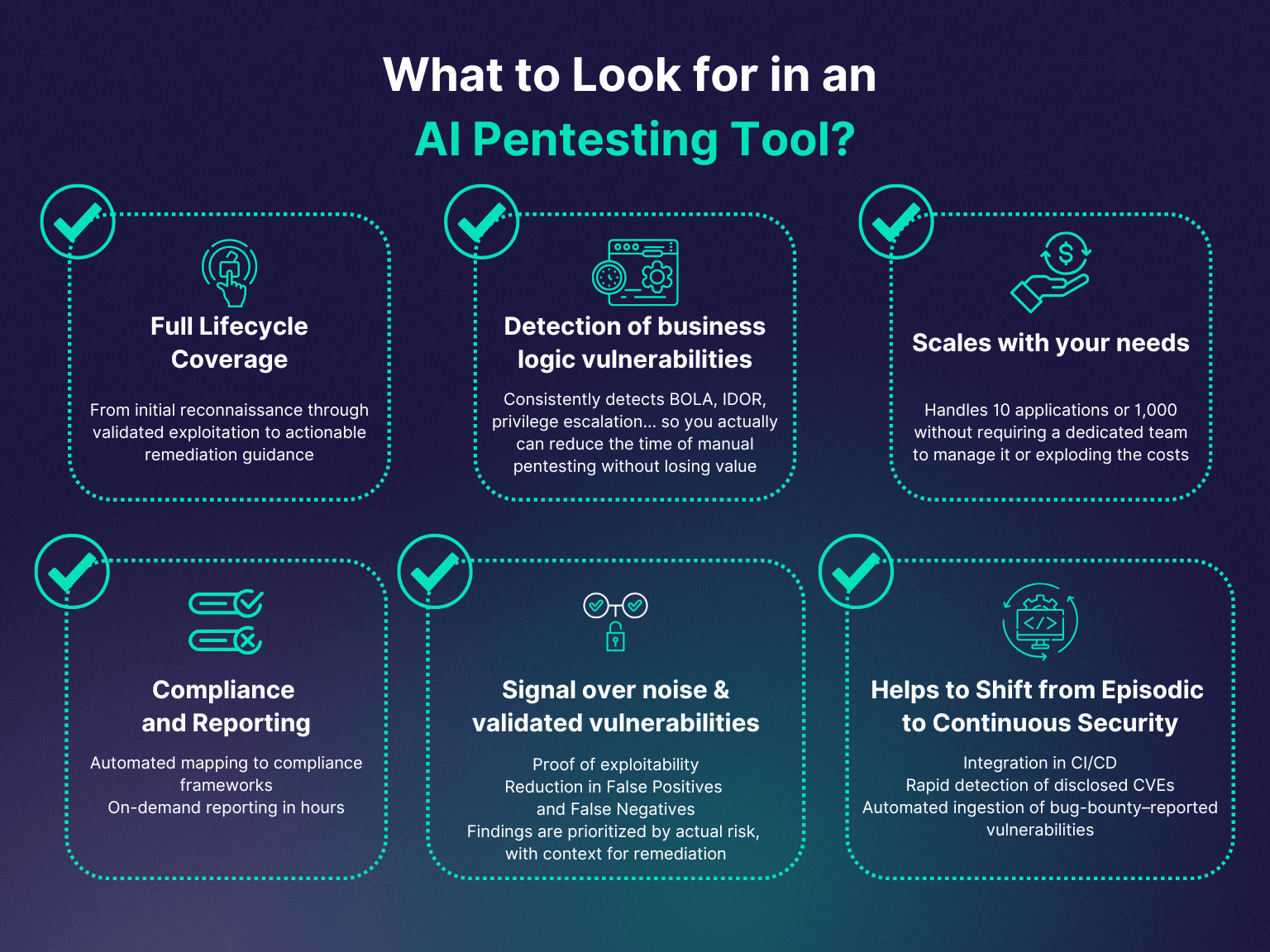

Evaluation checklist

- Data Privacy & Security - Verify the tool’s adherence to data protection regulations (like GDPR, CCPA) and its methods for handling sensitive data during testing. Understand data residency and encryption practices.

- Explainability of Findings - Assess how clearly the AI explains its identified vulnerabilities. Look for tools that provide detailed reasoning and context, not just a list of issues.

- Integration Capabilities - Determine if the tool integrates with your current security infrastructure, such as vulnerability scanners (e.g., Nessus, OpenVAS), SIEM systems (e.g., Splunk, QRadar), and ticketing systems (e.g., Jira, ServiceNow).

- Scalability - Evaluate the tool's ability to handle large and complex environments. Consider its performance with increasing numbers of assets and users.

- Reporting Features - Examine the quality and customization options of the reports generated. The tool should provide actionable insights and facilitate remediation efforts.

- Attack Vector Support - Confirm the range of attack vectors the AI can simulate, including web application attacks, network vulnerabilities, and social engineering tactics.

- Customization Options - Investigate the level of control available to tailor the AI’s testing parameters, such as scope, intensity, and specific vulnerabilities to target.
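One way to turn the checklist into a comparison is a weighted scoring matrix: rate each tool from 1 to 5 on every criterion and weight the criteria by what matters to your organization. The weights and ratings below are hypothetical placeholders, not assessments of any real product.

```python
# Hypothetical weights for the checklist criteria above; they must sum to 1.
CRITERIA_WEIGHTS = {
    "data_privacy": 0.20,
    "explainability": 0.15,
    "integration": 0.15,
    "scalability": 0.10,
    "reporting": 0.15,
    "attack_vectors": 0.15,
    "customization": 0.10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into a single weighted score on the same 1-5 scale."""
    missing = CRITERIA_WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    if any(not 1 <= ratings[c] <= 5 for c in CRITERIA_WEIGHTS):
        raise ValueError("ratings must be between 1 and 5")
    return sum(weight * ratings[c] for c, weight in CRITERIA_WEIGHTS.items())


tool_a = {"data_privacy": 5, "explainability": 3, "integration": 4, "scalability": 3,
          "reporting": 4, "attack_vectors": 2, "customization": 3}
tool_b = {"data_privacy": 3, "explainability": 3, "integration": 3, "scalability": 3,
          "reporting": 3, "attack_vectors": 5, "customization": 3}
for name, ratings in [("Tool A", tool_a), ("Tool B", tool_b)]:
    print(name, round(weighted_score(ratings), 2))
```

With these weights Tool A scores about 3.55 and Tool B about 3.3. Shifting enough weight toward attack vector support could reverse that order, which is the point: the weights encode your priorities, so agree on them before looking at vendor demos.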

A closer look at Cobalt and Bright Security

Cobalt.io’s platform differentiates itself by blending the efficiency of automated scanning with the expertise of a global network of vetted pentesters. The AI component focuses on intelligently prioritizing findings, reducing false positives, and streamlining the reporting process. This allows pentesters to focus on the most critical vulnerabilities, improving the overall effectiveness of the testing process. Cobalt’s architecture is designed to be flexible, supporting a wide range of testing methodologies and target environments.
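To illustrate what risk-based prioritization of findings can look like in general, here is a hypothetical heuristic; it is not Cobalt's actual method. It scales severity by how critical the affected asset is, boosts findings with a confirmed exploit, and discounts by the estimated confidence that the finding is real. The `Finding` fields, the 1.5 multiplier, and the example findings are all assumptions.

```python
from dataclasses import dataclass

SEVERITY_WEIGHT = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass
class Finding:
    title: str
    severity: str            # one of SEVERITY_WEIGHT's keys
    exploit_confirmed: bool  # did the tester or tool demonstrate exploitation?
    asset_criticality: int   # hypothetical scale: 1 = lab box ... 3 = crown jewels
    confidence: float        # 0..1, estimated probability this is not a false positive


def priority(f: Finding) -> float:
    score = SEVERITY_WEIGHT[f.severity] * f.asset_criticality
    if f.exploit_confirmed:
        score *= 1.5
    return score * f.confidence


findings = [
    Finding("Outdated jQuery", "medium", False, 2, 0.95),
    Finding("Verbose error page", "low", False, 1, 0.99),
    Finding("SQL injection in /search", "high", True, 3, 0.9),
    Finding("IDOR on /api/orders", "critical", False, 3, 0.4),
]
for f in sorted(findings, key=priority, reverse=True):
    print(f"{priority(f):6.2f}  {f.title}")
```

The low confidence on the IDOR finding pulls a critical issue below a confirmed high one; that is exactly the kind of call a human reviewer should double-check.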

Bright Security takes a different approach, focusing on integrating security testing into the software development lifecycle (SDLC). Their platform uses AI to analyze code as it’s being written, identifying potential vulnerabilities early in the process. This "shift left" approach can significantly reduce the cost and effort required to fix security issues. Bright Security’s platform offers detailed remediation guidance, helping developers address vulnerabilities quickly and effectively. The tool is particularly strong at identifying vulnerabilities in modern web frameworks and languages.
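In a shift-left workflow, the usual enforcement point is the CI pipeline: the build fails when a scan reports findings at or above an agreed severity. A minimal sketch, assuming the scanner's results have already been parsed into dictionaries with a `severity` field (a hypothetical schema, not Bright Security's actual output format):

```python
SEVERITY_RANK = {"info": 0, "low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings: list[dict], fail_at: str = "high") -> tuple[bool, list[dict]]:
    """Return (passed, blocking_findings) for a severity threshold."""
    threshold = SEVERITY_RANK[fail_at]
    blocking = [f for f in findings if SEVERITY_RANK[f["severity"].lower()] >= threshold]
    return not blocking, blocking


# Hypothetical findings from one scan of a feature branch.
scan = [
    {"id": "F-1", "severity": "Medium", "title": "Missing security headers"},
    {"id": "F-2", "severity": "High", "title": "Reflected XSS in /search"},
]
passed, blocking = gate(scan)
for f in blocking:
    print(f"BLOCKING {f['id']} [{f['severity']}] {f['title']}")
# In CI, exit non-zero (e.g. sys.exit(1)) when passed is False so the pipeline fails.
```

Keeping the threshold as a parameter lets a team start by blocking only critical issues and tighten the gate as the backlog shrinks.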

Both platforms provide documentation and APIs for integrating with existing security workflows. Cobalt.io is well-suited for organizations that need comprehensive penetration testing services, while Bright Security is a good choice for teams that want to build security into their development process. Both tools demonstrate the potential of AI to enhance the effectiveness and efficiency of penetration testing.

Where these tools fall short

Despite the advancements in AI-powered pentesting, several limitations and challenges remain. One significant issue is the prevalence of false positives – AI algorithms can sometimes identify vulnerabilities that don’t actually exist. This can waste valuable time and resources. Human oversight is still essential to validate findings and ensure accuracy. Testing complex systems, particularly those with intricate business logic, also presents a challenge for AI-powered tools.
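The cost of false positives is easy to estimate once a sample of findings has been validated by hand. Strictly speaking, the share of reported findings that turn out to be bogus is the false discovery rate; the classical false positive rate also needs true negatives, which a pentest report doesn't list. The sample and the 15 minutes of triage per finding are hypothetical.

```python
def triage_cost(validated: list[bool], minutes_per_finding: float = 15.0) -> dict:
    """Summarize a hand-validated sample: True = confirmed vulnerability, False = false positive."""
    if not validated:
        raise ValueError("need at least one validated finding")
    false_count = validated.count(False)
    return {
        "reviewed": len(validated),
        "false_discovery_rate": false_count / len(validated),
        "hours_on_false_positives": false_count * minutes_per_finding / 60,
    }


# Hypothetical sample: 40 findings reviewed, 28 confirmed, 12 false positives.
sample = [True] * 28 + [False] * 12
print(triage_cost(sample))
# {'reviewed': 40, 'false_discovery_rate': 0.3, 'hours_on_false_positives': 3.0}
```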

AI algorithms can be tricked or bypassed by clever attackers. Adversarial machine learning, a field dedicated to developing techniques for evading AI systems, is a growing concern. The "black box" nature of some AI systems makes it difficult to understand why they identified a particular vulnerability, hindering the ability to effectively remediate it. Ethical considerations are also important; using AI for offensive security raises questions about responsible disclosure and potential harm.
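A well-known example of adversarial evasion from spam filtering is the "good word" attack: pad a malicious input with tokens the model has learned to associate with benign traffic until its score drops below the alert threshold. The token weights below are hypothetical, standing in for what a bag-of-words linear detector might learn. The padding sits after the SQL comment marker `--`, so a database would ignore it while the detector still counts it.

```python
import re

# Hypothetical token weights, standing in for what a bag-of-words linear detector might learn.
TOKEN_WEIGHTS = {
    "union": 2.0, "select": 1.5, "password": 1.0, "from": 0.2, "or": 0.5,
    "thanks": -1.2, "regards": -1.2, "newsletter": -1.5,
}
BIAS = -2.0


def score(text: str) -> float:
    """Linear score: bias plus the weight of every token (unknown tokens count 0)."""
    tokens = re.findall(r"[a-z]+", text.lower())
    return BIAS + sum(TOKEN_WEIGHTS.get(t, 0.0) for t in tokens)


def is_flagged(text: str) -> bool:
    return score(text) > 0


payload = "' UNION SELECT password FROM users--"
# Append benign-looking words after the SQL comment marker: the database ignores them,
# but the detector still adds their negative weights to the score.
evasive = payload + " thanks regards newsletter"

print(is_flagged(payload))  # True
print(is_flagged(evasive))  # False
```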

Furthermore, the training data used to build AI models can be biased, leading to inaccurate or unfair results. Ensuring the diversity and quality of training data is crucial for building reliable and trustworthy AI-powered pentesting tools. The current reliance on pattern recognition also limits the ability of AI to discover truly novel vulnerabilities that don’t resemble anything it has seen before.
