The shift toward AI in pentesting

Pentesting is a standard part of any security program, but it's usually slow and expensive. Many companies struggle to find enough experts to run these tests manually, which leaves gaps in their defenses.

Now, artificial intelligence (AI) is changing how this work gets done. The goal is not to replace human pentesters but to give them tools that amplify their abilities. AI-powered pentesting isn’t a futuristic concept; it’s a rapidly evolving reality. The increasing sophistication of cyber threats, from ransomware to supply chain attacks, demands a more proactive and efficient approach to security.

In 2026, we're seeing a significant shift towards integrating AI into every stage of the pentesting lifecycle. From automated vulnerability discovery to intelligent exploit generation, AI is becoming an indispensable asset for security teams. This isn’t simply about automating tasks; it’s about gaining a deeper understanding of potential attack vectors and improving the overall effectiveness of security assessments.

Automation is not the same as AI

It’s easy to conflate automated pentesting with AI-driven pentesting, but they are distinct approaches. Automated pentesting typically uses tools to scan for known vulnerabilities and misconfigurations. These tools, while valuable, rely largely on predefined rules and signatures. Think of it as a systematic checklist: effective for catching common issues, but limited in its ability to uncover novel attacks.

AI-driven pentesting goes further. It uses machine learning algorithms to analyze code, network traffic, and system behavior to identify anomalies and predict potential exploits. This allows it to discover vulnerabilities that traditional tools might miss. As HackerSec.com points out in their March 23, 2026 article, “Automated Pentesting vs. AI Pentest”, the key difference lies in the ability to learn and adapt.

However, purely automated tools, even those with some AI components, have limitations. They can generate many false positives that take significant manual effort to triage, and they struggle with complex logic and custom applications where predefined rules don’t apply. A fully automated system might flag a harmless function as a vulnerability, wasting valuable time. The real power comes from combining AI with human expertise.

- Automated Pentesting: Relies on pre-defined rules and signatures.

- AI-Driven Pentesting: Uses machine learning to analyze and predict vulnerabilities.
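To make the distinction concrete, here is a minimal Python sketch under a toy setup: a rule-based check that only knows two hard-coded signatures, next to a statistical baseline learned from "normal" response sizes. The signatures, the sample lengths, and the `LengthBaseline` class are all hypothetical, and a z-score baseline is a deliberately simple stand-in for the machine learning models real tools use.

```python
import re
import statistics

# Rule-based "checklist": a fixed set of known-bad patterns.
# These two signatures are illustrative, not taken from any real scanner.
SIGNATURES = [
    re.compile(r"(?i)<script>"),
    re.compile(r"(?i)' or '1'='1"),
]


def signature_match(payload: str) -> bool:
    """Flag a payload only if it matches a known signature."""
    return any(sig.search(payload) for sig in SIGNATURES)


class LengthBaseline:
    """Learn what normal response sizes look like, then score deviations."""

    def __init__(self, normal_lengths: list[int]) -> None:
        self.mean = statistics.mean(normal_lengths)
        self.stdev = statistics.stdev(normal_lengths)

    def z_score(self, length: int) -> float:
        # How many standard deviations this length sits from the learned mean
        return abs(length - self.mean) / self.stdev

    def is_anomalous(self, length: int, threshold: float = 3.0) -> bool:
        return self.z_score(length) > threshold


# Hypothetical response sizes (bytes) observed during normal browsing
baseline = LengthBaseline([1020, 1004, 998, 1011, 1007, 995, 1016, 1002])

print(signature_match("' OR 2>1 --"))  # False: no rule covers this variant
print(baseline.is_anomalous(48_310))   # True: far outside the learned range
```

The variant `' OR 2>1 --` slips past the signature list, while the baseline flags any response far outside what it has learned, whether or not a rule exists for it. The same property cuts the other way: a baseline also flags harmless outliers, which is one source of the false positives that still need human triage.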

Techniques driving modern testing

Several AI techniques are driving innovation in pentesting. Machine learning (ML) is perhaps the most prominent, particularly in vulnerability detection. ML algorithms can be trained on vast datasets of code and attack patterns to identify anomalies that might indicate a security flaw. Pattern recognition allows AI to spot subtle indicators of compromise that a human might overlook.
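As a rough illustration of learning patterns from labeled data, the sketch below trains a tiny multinomial naive Bayes classifier on request strings. Naive Bayes is a classic, simple technique chosen here for readability; it is not what any particular tool uses, and the eight training samples are hypothetical. Real systems train on far larger datasets with richer features.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    # Lowercase, then keep alphanumeric runs and single punctuation characters,
    # so quotes and angle brackets become features in their own right.
    return re.findall(r"[a-z0-9]+|[^\sa-z0-9]", text.lower())


class NaiveBayes:
    def __init__(self) -> None:
        self.token_counts: dict[str, Counter] = {}  # label -> token frequencies
        self.doc_counts: Counter = Counter()        # label -> number of samples
        self.vocab: set[str] = set()

    def fit(self, samples: list[tuple[str, str]]) -> None:
        for text, label in samples:
            tokens = tokenize(text)
            self.token_counts.setdefault(label, Counter()).update(tokens)
            self.doc_counts[label] += 1
            self.vocab.update(tokens)

    def log_score(self, text: str, label: str) -> float:
        counts = self.token_counts[label]
        denom = sum(counts.values()) + len(self.vocab)
        # Log prior plus log likelihood of each token, with add-one smoothing
        score = math.log(self.doc_counts[label] / sum(self.doc_counts.values()))
        for tok in tokenize(text):
            score += math.log((counts[tok] + 1) / denom)
        return score

    def predict(self, text: str) -> str:
        return max(self.token_counts, key=lambda lbl: self.log_score(text, lbl))


# Hypothetical labeled training data
TRAINING = [
    ("GET /products?id=42", "benign"),
    ("GET /search?q=shoes", "benign"),
    ("POST /login user=alice", "benign"),
    ("GET /products?id=7", "benign"),
    ("GET /products?id=42' OR '1'='1", "suspicious"),
    ("GET /search?q=<script>alert(1)</script>", "suspicious"),
    ("GET /search?q=1 UNION SELECT password FROM users", "suspicious"),
    ("GET /products?id=1;--", "suspicious"),
]

model = NaiveBayes()
model.fit(TRAINING)
print(model.predict("GET /items?id=3' OR 'a'='a"))  # suspicious
print(model.predict("GET /products?id=9"))           # benign
```

The first test string never appears in the training data, yet the classifier labels it suspicious because its tokens (here, mostly the repeated quote characters) resemble the suspicious samples. That generalization from examples is what separates this approach from a fixed signature list.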

Natural Language Processing (NLP) is also playing a growing role. NLP enables AI to analyze code comments, documentation, and even security reports to understand the context of potential vulnerabilities. This is particularly useful for identifying vulnerabilities in complex software systems where understanding the code’s intent is crucial. It can also help to prioritize remediation efforts based on the severity of the identified issues.

Reinforcement learning takes things a step further by allowing AI to learn through trial and error. This is used for automated exploit development, where the AI attempts to exploit vulnerabilities to gain access to a system. Generative AI is now being used to create realistic attack scenarios, helping security teams to test their defenses against a wider range of threats. This can include crafting convincing phishing emails or simulating sophisticated malware attacks.
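Trial-and-error learning can be illustrated with the simplest member of the reinforcement learning family, a multi-armed bandit. In this hypothetical simulation, each "arm" stands for an abstract testing action whose success rate against a simulated target is hidden from the agent. An epsilon-greedy policy mostly repeats the action that has worked best so far and occasionally tries another at random. Real exploit-development agents have state, long action sequences, and far richer rewards, so treat this as a sketch of the learning loop only.

```python
import random


def epsilon_greedy(success_probs: list[float], trials: int = 2000,
                   epsilon: float = 0.1, seed: int = 0):
    """Learn which simulated action succeeds most often, by trial and error.

    success_probs are the hidden per-action success rates of a simulated
    target; the agent only ever sees the 0/1 reward of each attempt.
    """
    rng = random.Random(seed)
    n = len(success_probs)
    counts = [0] * n       # how many times each action was tried
    values = [0.0] * n     # running mean reward per action
    for _ in range(trials):
        if rng.random() < epsilon:
            action = rng.randrange(n)                        # explore
        else:
            action = max(range(n), key=lambda a: values[a])  # exploit
        reward = 1.0 if rng.random() < success_probs[action] else 0.0
        counts[action] += 1
        # Incremental mean update: Q <- Q + (r - Q) / N
        values[action] += (reward - values[action]) / counts[action]
    return values, counts


# Three hypothetical actions with hidden success rates of 10%, 50%, and 80%
values, counts = epsilon_greedy([0.1, 0.5, 0.8])
best = max(range(len(values)), key=lambda a: values[a])
print(f"best action: {best}, estimates: {[round(v, 2) for v in values]}")
```

The `epsilon` parameter controls the trade-off: with no exploration the agent can lock onto whichever action happened to succeed first, while too much exploration wastes attempts on actions it already knows are poor.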

These techniques aren’t isolated. They often work in concert. For example, NLP might identify a potential vulnerability in code, and reinforcement learning could then be used to develop an exploit for that vulnerability.

Tools to watch in 2026

The market is growing fast. StackHawk handles runtime and API security with automated discovery. Bright Security is an application platform that uses AI to rank and check findings.

Detectify is another notable player, offering automated web application security scanning with a focus on identifying vulnerabilities that are likely to be exploited. Cobalt.io provides a platform for connecting organizations with pentesters and utilizes AI to streamline the pentesting process and improve the quality of reports. Probely focuses on API security, offering automated testing and vulnerability detection.

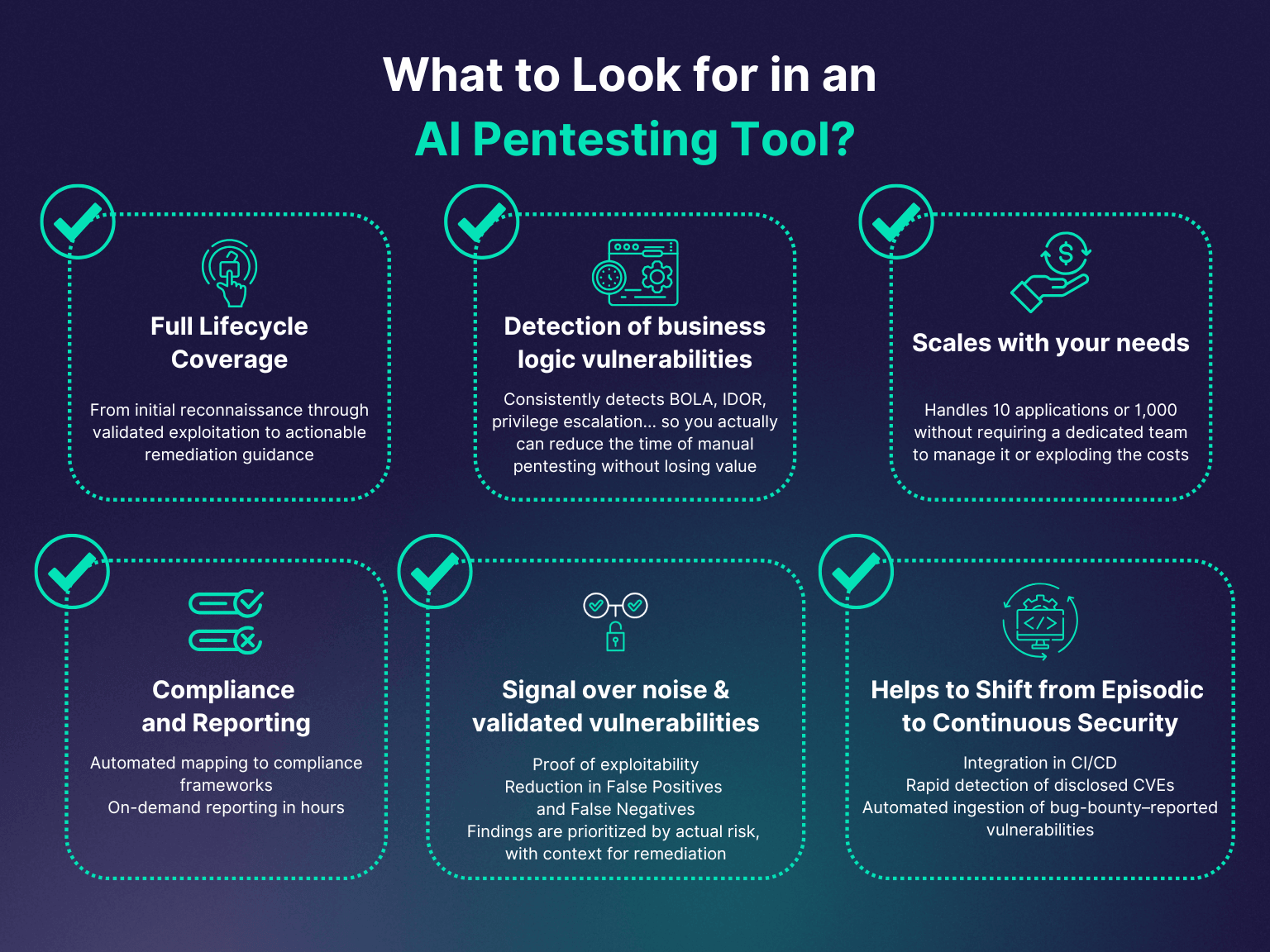

The capabilities of these tools vary. Some specialize in web application security, while others focus on network or cloud environments. The right choice depends on your infrastructure, the size and complexity of your applications, the types of vulnerabilities you’re most concerned about, and your team’s existing skill set. Evaluate vendor claims carefully rather than relying on marketing materials: request demos and trials, and test each tool against your own requirements.

- StackHawk: Automates discovery for runtime and API security.

- Bright Security: Application security platform that uses AI to rank and check findings.

- Detectify: Automated web application security scanning.

- Cobalt.io: Platform for connecting with pentesters and streamlining pentesting.

- Probely: API security testing and vulnerability detection.

AI-Powered Penetration Testing Tool Comparison (2026)

| Tool Name | Target Application Type | AI Technique(s) | Automation Level | Integration Capabilities |
|---|---|---|---|---|
| Arthur AI | Web Applications | Machine Learning, Natural Language Processing | Assisted | CI/CD pipelines, Slack, Jira |
| Detectify | Web Applications, APIs | Machine Learning | Assisted | Jira, Slack, Microsoft Teams, PagerDuty |
| Probely | Web Applications, Cloud | Machine Learning | Assisted | Slack, Microsoft Teams, Jira, PagerDuty, API |
| Bright Security | Web Applications | Machine Learning | Assisted | CI/CD pipelines, Jira, Slack |
| StackHawk | Web Applications, APIs | Machine Learning | Assisted | CI/CD pipelines, Jira, Slack |
| Pentera | Network, Cloud | Reinforcement Learning | Fully Automated | SIEM integrations, ticketing systems |
| AttackIQ | Network, Cloud | Machine Learning | Assisted | SIEM, SOAR, ticketing systems |
| HackerSec AI Pentest | Web Applications | Machine Learning, Natural Language Processing | Assisted | ticketing systems |

Illustrative comparison only. Verify current pricing, limits, and product details in each vendor's official documentation before relying on it.

Fitting AI into the workflow

Successfully integrating AI-powered pentesting tools requires a thoughtful approach. It's crucial to remember that these tools are assistants, not replacements for human pentesters. The most effective strategy is to combine AI’s automation capabilities with human expertise and critical thinking.

A typical workflow might involve using an AI tool to scan for vulnerabilities, then having a human pentester triage the findings and validate the results. This helps to reduce false positives and ensure that the most critical vulnerabilities are addressed first. AI can also be used to prioritize testing efforts, focusing on areas of the system that are most likely to be targeted by attackers.
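The triage step can be sketched as a simple ordering rule. This is a minimal Python example under assumed conventions: the `Finding` fields, the severity labels, and the `min_confidence` cutoff are hypothetical, not any tool's export format. Low-confidence findings are set aside rather than discarded, so a human can still check them for real issues the tool under-rated.

```python
from dataclasses import dataclass

# Lower rank = more urgent
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    ref: str
    title: str
    severity: str         # one of the SEVERITY_RANK keys
    ai_confidence: float  # 0.0-1.0, as reported by a hypothetical scanner


def build_review_queue(findings: list[Finding], min_confidence: float = 0.3):
    """Split findings into a primary queue and a low-confidence backlog.

    The primary queue is ordered most severe first, then most confident,
    so the human reviewer validates the riskiest likely-real issues first.
    """
    primary = [f for f in findings if f.ai_confidence >= min_confidence]
    backlog = [f for f in findings if f.ai_confidence < min_confidence]
    primary.sort(key=lambda f: (SEVERITY_RANK[f.severity], -f.ai_confidence))
    return primary, backlog


findings = [
    Finding("F1", "Verbose error page", "medium", 0.90),
    Finding("F2", "SQL injection in /search", "critical", 0.60),
    Finding("F3", "Possible IDOR on /orders", "high", 0.20),
    Finding("F4", "Exposed admin panel", "critical", 0.95),
]
primary, backlog = build_review_queue(findings)
print([f.ref for f in primary])  # ['F4', 'F2', 'F1']
print([f.ref for f in backlog])  # ['F3']
```

Sorting on a `(severity_rank, -confidence)` tuple keeps severity as the primary key, so a confident medium-severity finding never jumps ahead of a less confident critical one.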

AI results can be biased when the training data is biased: a model trained mostly on common, well-documented vulnerability types may miss or mislabel flaws that are rare in its data, such as business-logic issues in custom applications. I've found that the only way to catch these misses is to keep a human in the loop to validate the output.
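One way to make such misses measurable is to have a reviewer confirm or reject findings on a sample, then compare the tool's output with that human-validated set per vulnerability class. Low recall in one class hints that the model handles that class poorly. The sketch below assumes findings are simple `(vuln_class, location)` pairs; the class names and data are hypothetical.

```python
def per_class_metrics(ai_flagged: set[tuple[str, str]],
                      human_confirmed: set[tuple[str, str]]):
    """Precision and recall of AI findings per vulnerability class.

    human_confirmed is treated as ground truth: issues a reviewer validated,
    including ones the AI tool never reported.
    """
    report = {}
    for cls in sorted({c for c, _ in ai_flagged | human_confirmed}):
        flagged = {f for f in ai_flagged if f[0] == cls}
        confirmed = {f for f in human_confirmed if f[0] == cls}
        true_pos = len(flagged & confirmed)
        # Share of AI flags that were real (None if the AI flagged nothing)
        precision = true_pos / len(flagged) if flagged else None
        # Share of real issues the AI found (None if none were confirmed)
        recall = true_pos / len(confirmed) if confirmed else None
        report[cls] = (precision, recall)
    return report


ai_flagged = {("sqli", "/a"), ("sqli", "/b"), ("xss", "/c"), ("xss", "/d"), ("idor", "/e")}
human_confirmed = {("sqli", "/a"), ("sqli", "/b"), ("xss", "/c"),
                   ("idor", "/e"), ("idor", "/f"), ("idor", "/g")}

for cls, (precision, recall) in per_class_metrics(ai_flagged, human_confirmed).items():
    print(f"{cls}: precision={precision:.2f} recall={recall:.2f}")
```

In this made-up sample the tool found only one of three confirmed IDOR issues, a blind spot that would stay invisible without the human-validated set to compare against.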

Think of AI as a force multiplier. It can automate tedious tasks, identify potential vulnerabilities, and provide valuable insights, but it still requires human oversight and judgment to ensure accurate and effective security assessments.

Skills you'll need now

The rise of AI in pentesting doesn't diminish the importance of human skills; it changes them. Pentesters in 2026 need to be more than just exploit developers. They need to understand the underlying AI/ML concepts that power these tools, enabling them to interpret results effectively and identify potential biases.

Data analysis and interpretation are also crucial. Pentesters must be able to sift through large amounts of data generated by AI tools, identify patterns, and draw meaningful conclusions. Critical thinking and problem-solving skills remain essential for complex scenarios where AI may struggle. Adaptability and continuous learning are paramount, as the field of AI is constantly evolving.
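As a small example of this kind of pattern-finding, the sketch below groups scanner findings by category and counts distinct hosts, so that an issue repeated across many systems (often a shared configuration or template problem) stands out from one-off noise. The dictionary fields and category names are hypothetical.

```python
from collections import defaultdict


def recurring_patterns(findings: list[dict[str, str]], min_hosts: int = 2):
    """Return (category, distinct_host_count) pairs seen on >= min_hosts hosts."""
    hosts_by_category: dict[str, set[str]] = defaultdict(set)
    for f in findings:
        # A set deduplicates repeat reports of the same issue on the same host
        hosts_by_category[f["category"]].add(f["host"])
    recurring = [(cat, len(hosts)) for cat, hosts in hosts_by_category.items()
                 if len(hosts) >= min_hosts]
    # Most widespread first; ties broken alphabetically for stable output
    return sorted(recurring, key=lambda item: (-item[1], item[0]))


findings = [
    {"host": "api-1", "category": "missing-rate-limit"},
    {"host": "api-2", "category": "missing-rate-limit"},
    {"host": "api-3", "category": "missing-rate-limit"},
    {"host": "web-1", "category": "outdated-tls"},
    {"host": "web-2", "category": "outdated-tls"},
    {"host": "web-1", "category": "verbose-errors"},
    {"host": "web-1", "category": "verbose-errors"},  # duplicate from a re-scan
]
print(recurring_patterns(findings))
# [('missing-rate-limit', 3), ('outdated-tls', 2)]
```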

Equally important are ethical considerations. AI-powered pentesting raises questions about responsible disclosure, data privacy, and the potential for misuse. Pentesters must adhere to a strong ethical code and use these tools responsibly. Human expertise remains essential for nuanced analysis, for complex scenarios, and for understanding the broader security implications of identified vulnerabilities.

- AI/ML Understanding: Grasping the concepts behind AI-powered tools.

- Data Analysis: Interpreting results and identifying patterns.

- Critical Thinking: Solving complex security problems.

- Adaptability: Keeping up with evolving AI technologies.

- Ethics: Managing responsible disclosure and privacy when using automated tools.
