The quantum threat

For decades, the security of our digital world has rested on the difficulty of certain mathematical problems. Algorithms like RSA and ECC, which protect everything from online banking to government communications, rely on the fact that factoring large numbers and solving elliptic curve discrete logarithm problems are computationally intensive tasks for traditional computers. Quantum computing changes this math.

Quantum computers, leveraging the principles of quantum mechanics, have the potential to solve these problems much faster. Specifically, Shor’s algorithm, published in 1994, factors integers in polynomial time, which would effectively break RSA encryption. A variant of the same algorithm solves the elliptic curve discrete logarithm problem and would compromise ECC. The algorithm itself is well established, but today’s quantum computers are far too small and error-prone to run it against real-world key sizes; so far it has only been demonstrated on toy numbers.
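To make the idea concrete, here is a minimal sketch of the classical half of Shor’s algorithm. The period-finding step is the part a quantum computer would speed up; in this sketch it is brute-forced, so it only works for toy numbers. The function names are illustrative, not taken from any library.

```python
from math import gcd
from typing import Optional, Tuple


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step Shor's algorithm performs on a quantum computer.
    Assumes gcd(a, n) == 1, otherwise no such r exists.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_period(a: int, n: int) -> Optional[Tuple[int, int]]:
    """Try to split n using the period of a modulo n; None means 'pick another a'."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g              # a already shares a factor with n
    r = find_period(a, n)
    if r % 2 == 1:
        return None                   # odd period: this base does not help
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                   # a**(r/2) is -1 mod n: trivial case
    p = gcd(half - 1, n)              # half**2 == 1 mod n, so n divides (half-1)(half+1)
    return p, n // p


if __name__ == "__main__":
    print(factor_from_period(7, 15))  # period of 7 mod 15 is 4
```

For RSA-sized moduli the brute-force loop in `find_period` would never finish, which is exactly the gap a large, error-corrected quantum computer would close.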

The concern isn't just about future possibilities. Data encrypted today could be intercepted, stored, and decrypted later when sufficiently powerful quantum computers become available. This is often called a "harvest now, decrypt later" (or "store now, decrypt later") attack. This is why the National Institute of Standards and Technology (NIST) began a public process in 2016 to develop and standardize quantum-resistant encryption algorithms.

The year 2026 is becoming a critical focal point for preparation. NIST has accelerated its standardization efforts, aiming to have a suite of algorithms ready for deployment well before quantum computers pose an immediate, widespread threat. While a fully functional, cryptographically relevant quantum computer isn’t here yet, the transition to quantum-resistant cryptography is a complex undertaking that requires significant planning and implementation – hence the urgency.

NIST standards for 2024

In August 2024, NIST published the first set of finalized post-quantum cryptography (PQC) standards. These standards are the first real tools we have to secure digital infrastructure against quantum attacks. The initial set includes ML-KEM (FIPS 203), derived from CRYSTALS-Kyber, for key establishment and ML-DSA (FIPS 204), derived from CRYSTALS-Dilithium, for digital signatures.

CRYSTALS-Kyber is a lattice-based key encapsulation mechanism (KEM). It’s designed to securely establish a shared cryptographic key over a public channel. The security of Kyber relies on the hardness of the Module Learning With Errors (Module-LWE) problem, a structured variant of LWE that is believed to resist attacks from both classical and quantum computers. It’s efficient, and its keys and ciphertexts are compact compared with most other post-quantum schemes (though still much larger than ECC keys), making it suitable for a wide range of applications.
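To give a feel for how an LWE-based scheme works, here is a toy, Regev-style single-bit encryption sketch. It is not Kyber: Kyber works over polynomial modules, encapsulates whole keys, and uses carefully chosen parameters. The dimensions below are assumptions picked so the example is easy to follow, and the scheme is completely insecure at this size.

```python
import random

Q = 3329   # modulus (the prime Kyber also uses; everything else is toy-sized)
N = 8      # length of the secret vector
M = 16     # number of noisy equations in the public key


def keygen(rng):
    """Public key is (A, b = A*s + e mod Q); the secret is s."""
    s = [rng.randrange(Q) for _ in range(N)]
    A = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]
    e = [rng.choice((-1, 0, 1)) for _ in range(M)]   # small error terms
    b = [(sum(a * x for a, x in zip(row, s)) + err) % Q for row, err in zip(A, e)]
    return (A, b), s


def encrypt(public_key, bit, rng):
    """Add up a random subset of the public equations and hide the bit at Q/2."""
    A, b = public_key
    subset = [i for i in range(M) if rng.random() < 0.5]
    u = [sum(A[i][j] for i in subset) % Q for j in range(N)]
    v = (sum(b[i] for i in subset) + bit * (Q // 2)) % Q
    return u, v


def decrypt(secret, ciphertext):
    """v - <u, s> equals (accumulated error) + bit * Q/2, so round to recover the bit."""
    u, v = ciphertext
    noisy = (v - sum(x * y for x, y in zip(u, secret))) % Q
    # The accumulated error is at most M in absolute value, far below Q/4.
    return 1 if Q // 4 < noisy < 3 * Q // 4 else 0


if __name__ == "__main__":
    rng = random.Random()   # not a cryptographic RNG; fine for a demonstration only
    pk, sk = keygen(rng)
    print([decrypt(sk, encrypt(pk, bit, rng)) for bit in (0, 1, 1, 0)])
```

Without the error vector `e`, recovering `s` from `(A, b)` is plain linear algebra. The small errors are what turn it into a problem believed to be hard.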

CRYSTALS-Dilithium, also lattice-based, is a digital signature algorithm. It allows for the creation of digital signatures that can be used to verify the authenticity and integrity of digital documents. Like Kyber, its security rests on structured lattice problems, in this case Module-LWE together with the related Module Short Integer Solution (Module-SIS) problem. Dilithium offers a good balance between signature size, verification speed, and security.

These algorithms aren't unbreakable. Cryptography is an arms race, and new attacks will appear. However, these algorithms represent the best available defense against quantum computers based on current knowledge. NIST has also standardized SPHINCS+ as SLH-DSA (FIPS 205), plans to standardize Falcon as FN-DSA, and continues to evaluate additional candidates, providing further options and redundancy.

- CRYSTALS-Kyber: Lattice-based key encapsulation mechanism

- CRYSTALS-Dilithium: Lattice-based digital signature algorithm

- Falcon: Alternative digital signature algorithm

- SPHINCS+: Stateless hash-based signature scheme

NIST Post-Quantum Cryptography Standards Comparison

| Algorithm | Public Key Size (approx.) | Signature/Ciphertext Size (approx.) | Computational Cost | Security Level |
|---|---|---|---|---|
| Kyber (Key-Encapsulation Mechanism) | 800 - 1568 bytes | 768 - 1568 bytes | Low | NIST Security Level 1, 3, 5 |
| Dilithium (Digital Signature) | 1312 - 2592 bytes | 2420 - 4627 bytes | Medium | NIST Security Level 2, 3, 5 |
| Falcon (Digital Signature) | 897 - 1793 bytes | 666 - 1280 bytes | Medium | NIST Security Level 1, 5 |
| SPHINCS+ (Digital Signature) | 32 - 64 bytes | 7856 - 49856 bytes | High | NIST Security Level 1, 3, 5 |

Illustrative comparison; sizes are approximate and span each algorithm's parameter sets. Verify exact values against the official NIST specifications before relying on them.

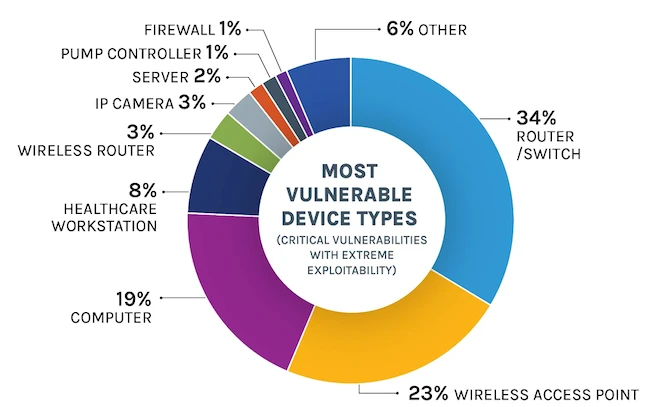

Finding network vulnerabilities

The first step in preparing for the post-quantum era is to understand where your organization is vulnerable. A thorough assessment of your network infrastructure is essential. Begin by identifying systems and applications that rely heavily on RSA and ECC. This includes areas like Transport Layer Security/Secure Sockets Layer (TLS/SSL) certificates, Virtual Private Networks (VPNs), Secure Shell (SSH) connections, and digital signatures used for code signing or document verification.

A crucial concept here is crypto-agility. This refers to the ability to quickly and efficiently switch between different cryptographic algorithms. Systems designed with crypto-agility in mind will be far easier to update when PQC algorithms become widely adopted. If your systems are tightly coupled to specific algorithms, the migration process will be significantly more complex and costly.
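One common way to get crypto-agility is to look algorithms up by name in a registry and to record the algorithm name alongside the protected data. The sketch below illustrates that pattern only. The registry entries are standard-library HMACs used as stand-ins, not real signature schemes, and the names `REGISTRY`, `protect`, and `verify` are hypothetical.

```python
import hashlib
import hmac
from typing import Callable, Dict

TagFn = Callable[[bytes, bytes], bytes]

# Stand-in algorithms (keyed MACs), NOT signature schemes. In a real system each
# entry would wrap a vetted RSA, ECDSA, or ML-DSA implementation.
REGISTRY: Dict[str, TagFn] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha512": lambda key, msg: hmac.new(key, msg, hashlib.sha512).digest(),
}


def protect(message: bytes, key: bytes, algorithm: str) -> dict:
    """Tag a message and record which algorithm produced the tag."""
    tag = REGISTRY[algorithm](key, message)
    return {"alg": algorithm, "msg": message, "tag": tag}


def verify(envelope: dict, key: bytes) -> bool:
    """Verify with whichever algorithm the envelope names, if it is still registered."""
    tag_fn = REGISTRY.get(envelope["alg"])
    if tag_fn is None:
        return False                      # unknown or retired algorithm
    expected = tag_fn(key, envelope["msg"])
    return hmac.compare_digest(expected, envelope["tag"])


if __name__ == "__main__":
    key = b"k" * 32
    old = protect(b"hello", key, "hmac-sha256")
    new = protect(b"hello", key, "hmac-sha512")   # switching is a config change
    print(verify(old, key), verify(new, key))
```

Because callers never name an algorithm directly, adding a PQC entry or retiring a classical one touches the registry and configuration rather than every call site.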

A comprehensive asset inventory is paramount. You need to know what hardware and software you have, where it’s located, and what cryptographic algorithms it uses. What data is most sensitive and requires the strongest protection? What legacy systems are still running older, vulnerable algorithms? This inventory is the foundation for your migration.
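As a rough sketch of what such an inventory can feed into, the snippet below flags assets whose algorithms fall to Shor’s algorithm and orders them by data sensitivity. The `Asset` fields, the sensitivity labels, and the sample entries are all hypothetical; a real inventory would be populated from scans and configuration management data.

```python
from dataclasses import dataclass
from typing import List

# Public-key algorithm families that Shor's algorithm breaks. Symmetric ciphers
# and hash functions (AES, SHA-2, SHA-3) are deliberately not listed.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDSA", "ECDH", "ECDHE", "ED25519", "X25519"}

SENSITIVITY_RANK = {"high": 0, "medium": 1, "low": 2}


@dataclass
class Asset:
    name: str
    algorithm: str      # e.g. "RSA-2048", as reported by a scan
    sensitivity: str    # "high", "medium", or "low"


def is_quantum_vulnerable(algorithm: str) -> bool:
    """Compare the algorithm family (text before the first '-') with the known list."""
    family = algorithm.upper().split("-")[0]
    return family in QUANTUM_VULNERABLE


def migration_queue(assets: List[Asset]) -> List[Asset]:
    """Vulnerable assets only, most sensitive first."""
    at_risk = [a for a in assets if is_quantum_vulnerable(a.algorithm)]
    return sorted(at_risk, key=lambda a: SENSITIVITY_RANK[a.sensitivity])


if __name__ == "__main__":
    inventory = [
        Asset("vpn-gateway", "RSA-2048", "medium"),
        Asset("backup-store", "AES-256", "high"),
        Asset("code-signing", "ECDSA-P256", "high"),
    ]
    for asset in migration_queue(inventory):
        print(asset.name, asset.algorithm)
```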

Consider the lifespan of your data. Data with long-term value – intellectual property, financial records, sensitive customer information – is at the highest risk. This data needs to be protected with quantum-resistant encryption sooner rather than later. Conversely, data with a short lifespan may not require immediate migration.
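A rule of thumb often used for this reasoning is Mosca’s inequality: if the time data must stay secret plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists, the data is already exposed. A minimal sketch follows; all three inputs are estimates you would have to supply, and the numbers in the usage lines are made up for illustration.

```python
def exposure_years(shelf_life: float, migration_time: float, years_to_quantum: float) -> float:
    """Years during which data would still be sensitive but no longer protected.

    shelf_life:        how long the data must remain confidential
    migration_time:    how long the move to quantum-resistant encryption will take
    years_to_quantum:  your estimate of when a relevant quantum computer arrives
    """
    return max(0.0, shelf_life + migration_time - years_to_quantum)


if __name__ == "__main__":
    # Hypothetical inputs: 10-year records, 3-year migration, 8-year threat horizon.
    print(exposure_years(10, 3, 8))
    # Short-lived data with the same migration time and horizon.
    print(exposure_years(1, 3, 8))
```

A result above zero suggests the data should be near the front of the migration queue; a result of zero suggests it can wait.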

A phased migration

A "rip and replace" approach to migrating to PQC is simply unrealistic for most organizations. It’s too disruptive, too expensive, and too time-consuming. A phased rollout is the most practical strategy. This involves a series of steps, starting with preparation and ending with full PQC deployment.

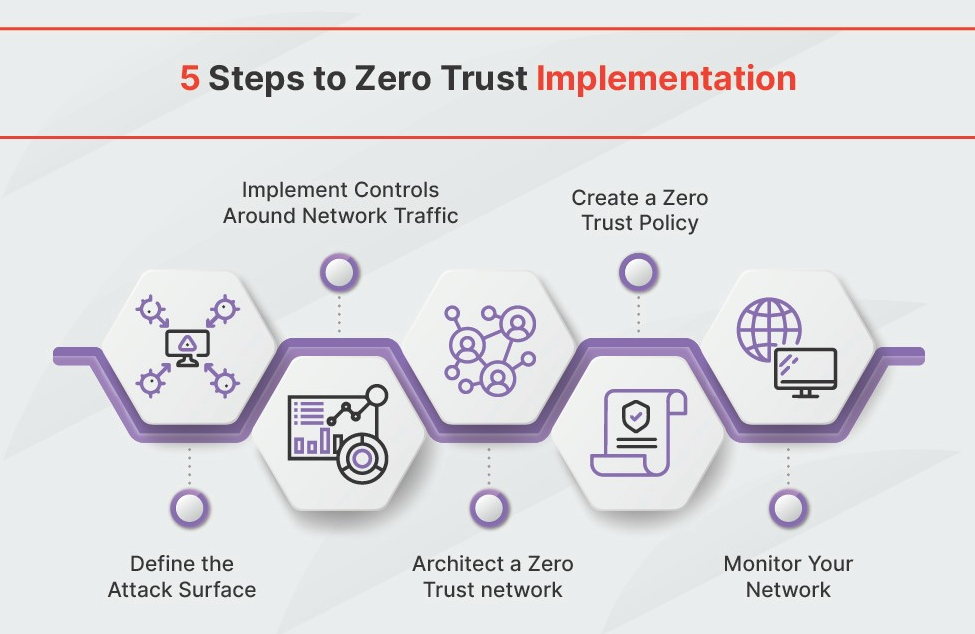

Phase 1: Discovery and Inventory. This is the step we just discussed – identifying vulnerable systems and data. Document everything. Understand your dependencies. This phase also includes researching PQC libraries and tools, and testing them in a lab environment. Don’t underestimate the time required for this initial assessment.

Phase 2: Hybrid Implementation. This involves running classical (RSA, ECC) and PQC algorithms side by side, typically by combining a classical and a PQC key exchange so that the session stays secure as long as either algorithm holds. This protects you if a flaw is found in one of the newer PQC algorithms. Hybrid implementations can be complex, as they often require modifications to existing protocols and software. Increased computational and bandwidth overhead is a common challenge, as both sets of algorithms need to be executed.

Phase 3: Full PQC Deployment. Once you’ve thoroughly tested the hybrid implementation and are confident in the stability and performance of the PQC algorithms, you can begin to phase out the classical algorithms. This should be done gradually, monitoring performance and security closely. It’s crucial to have a rollback plan in case of unforeseen issues.
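The hybrid idea in Phase 2 usually comes down to deriving one session key from two shared secrets. The sketch below assumes the classical and PQC key exchanges have already run and uses random bytes as stand-ins for their outputs. HKDF is implemented from the standard library following RFC 5869; the concatenate-then-derive combiner and the `info` label are illustrative assumptions, since real protocols specify their own exact construction.

```python
import hashlib
import hmac
import os


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256 (RFC 5869): extract a pseudorandom key, then expand it."""
    prk = hmac.new(salt, ikm, hashlib.sha256).digest()           # extract step
    okm, block, counter = b"", b"", 1
    while len(okm) < length:                                      # expand step
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def hybrid_session_key(classical_secret: bytes, pqc_secret: bytes) -> bytes:
    """Derive one key from both secrets; an attacker must recover both to learn it."""
    combined = classical_secret + pqc_secret      # both inputs are fixed-length here
    return hkdf_sha256(combined, salt=b"\x00" * 32, info=b"hybrid-demo")


if __name__ == "__main__":
    ecdh_secret = os.urandom(32)    # stand-in for an ECDH shared secret
    kem_secret = os.urandom(32)     # stand-in for a KEM shared secret
    print(hybrid_session_key(ecdh_secret, kem_secret).hex())
```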

Updating software libraries and Hardware Security Modules (HSMs) is a critical part of the migration process. Many existing libraries and HSMs do not yet support PQC algorithms. You may need to upgrade to newer versions or replace older hardware. Thorough testing and validation are essential to ensure that the updates don’t introduce new vulnerabilities.
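As one small example of checking library readiness, the snippet below inspects the OpenSSL version that Python’s `ssl` module is linked against. It assumes OpenSSL 3.5 as the threshold, the release that added native ML-KEM, ML-DSA, and SLH-DSA support. A version check is only a hint: builds linked against other TLS libraries report different version numbers, and your application stack still has to expose the new algorithms.

```python
import ssl
from typing import Sequence


def has_native_pqc(version_info: Sequence[int]) -> bool:
    """True if an OpenSSL version tuple is 3.5 or newer (assumed PQC threshold)."""
    return tuple(version_info[:2]) >= (3, 5)


if __name__ == "__main__":
    print(ssl.OPENSSL_VERSION)
    print("native PQC algorithms likely available:", has_native_pqc(ssl.OPENSSL_VERSION_INFO))
```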

PQC and Existing Security Protocols

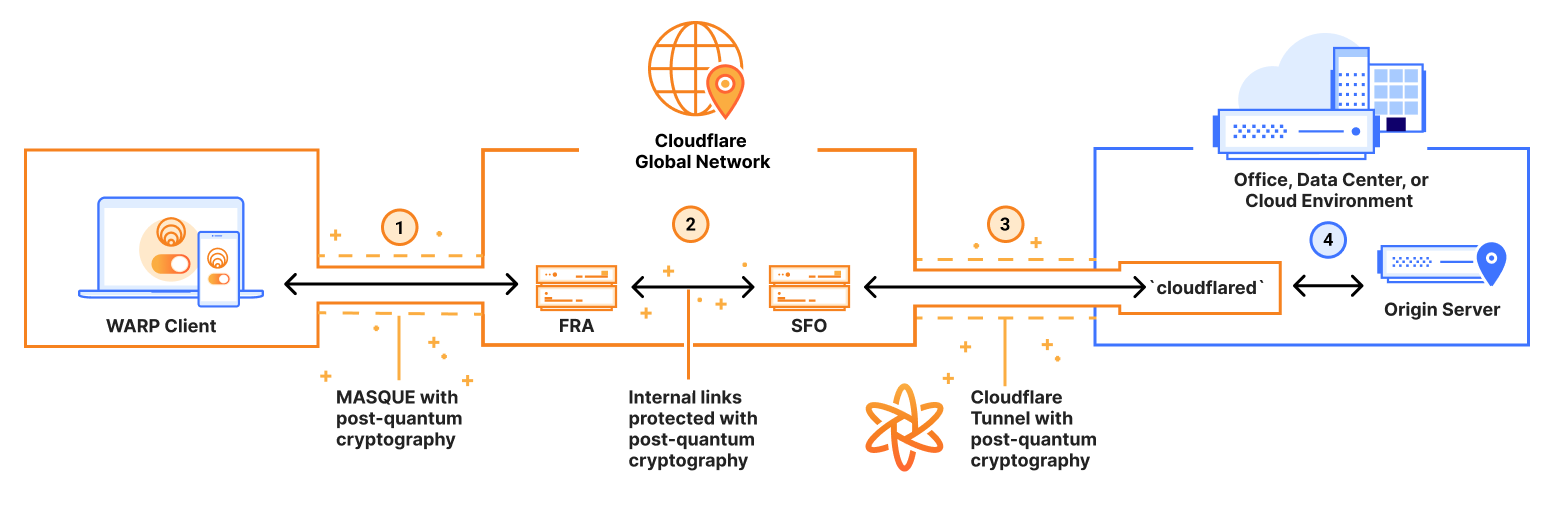

Integrating PQC algorithms into existing security protocols like TLS 1.3, SSH, and IPsec is a complex but necessary process. Fortunately, significant work is underway to standardize PQC-enabled versions of these protocols. The Internet Engineering Task Force (IETF) is playing a leading role in this effort.

Key exchange mechanisms are particularly important. In TLS 1.3, for example, the key exchange is typically handled by algorithms like ECDHE. This needs to be replaced, or in a hybrid deployment supplemented, with a PQC-based key exchange algorithm such as Kyber. In TLS 1.3 the key exchange is negotiated as a named group rather than as part of the cipher suite, so the IETF is defining new groups (for example, the hybrid X25519MLKEM768) along with PQC signature algorithms for certificates.
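In TLS 1.3 the key exchange method is negotiated as a named group, with the client listing what it supports and the server choosing. The sketch below is a simplified model of that choice, not a TLS implementation: it ignores key shares, HelloRetryRequest, and everything else in the handshake. The server preference order is an assumption chosen to favor the hybrid group.

```python
from typing import Optional, Sequence

# Assumed server policy: prefer the hybrid group, fall back to classical ECDHE.
SERVER_PREFERENCE = ("X25519MLKEM768", "x25519", "secp256r1")


def select_group(
    client_groups: Sequence[str],
    server_preference: Sequence[str] = SERVER_PREFERENCE,
) -> Optional[str]:
    """Return the server's most preferred group that the client also offers."""
    offered = set(client_groups)
    for group in server_preference:
        if group in offered:
            return group
    return None   # no overlap: the handshake would fail


if __name__ == "__main__":
    print(select_group(["x25519", "X25519MLKEM768"]))   # PQC-capable client
    print(select_group(["secp256r1", "x25519"]))        # legacy client
```

Keeping classical groups at the end of the preference list is what lets older clients keep connecting during the transition.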

Performance is a key consideration. PQC algorithms generally use larger keys, ciphertexts, and signatures than classical algorithms, and some are more computationally intensive, which can affect handshake latency and bandwidth. Optimization efforts are ongoing to minimize this overhead. The impact will vary depending on the specific algorithm and the hardware it’s running on.
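A quick way to see where the overhead comes from is to count the key exchange bytes each side sends. The sketch below uses the public key size for X25519 (32 bytes) and the encapsulation key and ciphertext sizes for the 768 parameter set of Kyber as standardized in FIPS 203 (1184 and 1088 bytes). It counts only the key shares, not the rest of the handshake, and the helper names are illustrative.

```python
from typing import Dict, Tuple

# (bytes sent by client, bytes sent by server) for the key exchange alone.
KEY_SHARE_BYTES: Dict[str, Tuple[int, int]] = {
    "x25519": (32, 32),            # one 32-byte public key in each direction
    "ML-KEM-768": (1184, 1088),    # encapsulation key out, ciphertext back
}


def hybrid_share(first: str, second: str) -> Tuple[int, int]:
    """A hybrid exchange sends both components, so the sizes simply add up."""
    a, b = KEY_SHARE_BYTES[first], KEY_SHARE_BYTES[second]
    return a[0] + b[0], a[1] + b[1]


def round_trip_bytes(share: Tuple[int, int]) -> int:
    """Total key exchange bytes on the wire for one handshake."""
    return share[0] + share[1]


if __name__ == "__main__":
    classical = KEY_SHARE_BYTES["x25519"]
    hybrid = hybrid_share("x25519", "ML-KEM-768")
    print("classical:", round_trip_bytes(classical), "bytes")
    print("hybrid:   ", round_trip_bytes(hybrid), "bytes")
```

A couple of extra kilobytes per handshake is small for most web traffic but can matter on constrained links or when a larger message no longer fits in a single packet.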

Compatibility issues are also a concern. Older systems may not support the new PQC-enabled protocols. Careful planning and testing are required to ensure interoperability between different systems. This is where crypto-agility is so important, enabling a smoother transition.

The Role of HSMs and Crypto Modules

Hardware Security Modules (HSMs) are critical for secure key storage and cryptographic operations. They provide a tamper-resistant environment for protecting sensitive cryptographic keys. As organizations transition to PQC, their HSMs will need to be updated to support the new algorithms.

Retrofitting existing HSMs to support PQC can be challenging. Many HSMs have limited processing power and memory, which may not be sufficient to handle the more demanding PQC algorithms. In some cases, it may be necessary to deploy new PQC-capable HSMs.

Organizations that rely on HSMs should work closely with their vendors to understand their PQC roadmap. Ensure that the HSMs you deploy are validated under FIPS 140-3, the current standard for cryptographic modules that supersedes FIPS 140-2, and that the validation covers the PQC algorithms you plan to use. Validation shows that the module has been independently tested against recognized security requirements.

The cost of upgrading or replacing HSMs can be significant. It’s important to factor this cost into your overall PQC migration budget. Consider a phased approach, starting with the most critical systems and gradually upgrading the remaining HSMs over time.

Quantum-safe networking

While PQC focuses primarily on upgrading encryption algorithms, a truly quantum-safe network requires a broader approach. This includes considering other quantum-safe networking technologies and implementing robust monitoring and threat intelligence capabilities.

Quantum Key Distribution (QKD) is one such technology. It uses the principles of quantum mechanics to securely distribute cryptographic keys. However, QKD has limitations. It requires specialized hardware, is expensive, has limited range, and real-world implementations have proven vulnerable to side-channel attacks on the devices themselves. While QKD may be suitable for specific, high-security applications, it’s not a practical solution for most organizations.

Continuous monitoring and threat intelligence are essential. The quantum computing landscape is evolving rapidly, and new threats are constantly emerging. Organizations need to stay informed about the latest developments and adapt their security measures accordingly. This includes monitoring for new attacks, vulnerabilities, and algorithm weaknesses.

Ultimately, preparing for the post-quantum era is an ongoing process, not a one-time event. The threat landscape will continue to evolve, and organizations need to be proactive in their efforts to maintain a secure network. Don’t view PQC as a "set it and forget it" solution.
